The Simple Rule that Separates AI Winners from Everyone Else

President, Zaruko


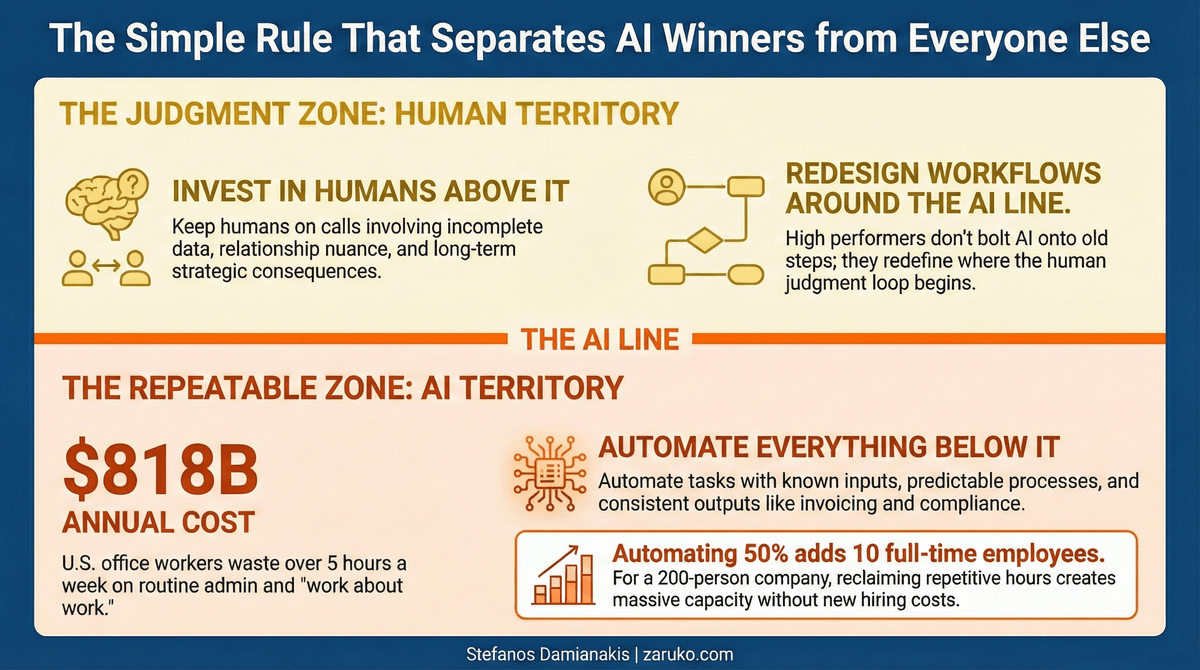

Automate the repeatable. Keep humans on the judgment calls.

One rule separates companies getting ROI from AI and companies burning money on it. Not a 47-slide framework. Not a maturity model.

Automate the repeatable. Keep humans on the judgment calls.

The companies winning with AI right now aren't running the most sophisticated models. They're the ones that drew a clean boundary between what machines should do and what people should do, and they have the discipline to hold that line.

The Problem: Companies Get This Backwards

I keep seeing the same pattern. A company gets excited about AI. They hire consultants or buy a platform. And then they point it at the wrong problems.

They try to automate hiring decisions while their accounts payable team still manually keys invoices. They build AI-powered pricing models while their schedulers toggle between three spreadsheets to assign shifts. They invest in "predictive analytics for strategic planning" while their customer service team copies and pastes the same responses 200 times a day.

This is backwards. And it's expensive.

In other words, companies point AI at uncertainty and leave certainty untouched.

What Makes a Task Repeatable?

A repeatable task has three characteristics:

Known inputs. You know what's coming in. An invoice has a vendor, an amount, a date. A support ticket has a customer, a product, a problem category. A payroll run has hours, rates, and tax tables.

Predictable process. The steps don't change much. Match the invoice to the PO. Route the ticket to the right team. Calculate gross pay, deductions, net pay. There's a correct way to do it, and that correct way stays consistent.

Consistent output. The result looks the same every time. A processed invoice, a routed ticket, a paycheck.

AI handles these well. Not because AI is smart. Because these tasks don't require smart. They require fast and consistent.

Here are some examples that belong on the "automate it" side of the line:

Finance & back office:

- Invoice processing and matching

- Customer payment matching and cash application

- Monthly financial close reconciliations

- Employee expense report validation against company policy

Commercial operations:

- Contract review for standard clause extraction

- Vendor onboarding document collection and verification

- Sales pipeline data hygiene (deduplication, field standardization)

Compliance & quality:

- Compliance monitoring against known regulatory rules

- Report generation from structured data

- Quality control on production lines (visual inspection)

These are tasks where the rules are known, the data is structured, and the acceptable output is well defined. A human doing these tasks adds little that a machine can't, and the machine does it faster with fewer errors.
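To make "the rules are known" concrete, here is a minimal sketch of one item from the list above: validating expense report lines against company policy. The category limits, the receipt threshold, and the names `ExpenseLine` and `validate` are hypothetical. A real policy would have more rules, but the structure is the same. Each line either passes every rule or gets flagged for a person.

```python
from dataclasses import dataclass

# Hypothetical policy: per-category spending caps and a receipt threshold.
# These numbers are illustrative assumptions, not any real company's policy.
CATEGORY_LIMITS = {"meals": 75.00, "lodging": 250.00, "ground_transport": 100.00}
RECEIPT_REQUIRED_OVER = 25.00


@dataclass
class ExpenseLine:
    employee: str
    category: str
    amount: float
    has_receipt: bool


def validate(line: ExpenseLine) -> list:
    """Return a list of policy violations; an empty list means the line passes."""
    problems = []
    limit = CATEGORY_LIMITS.get(line.category)
    if limit is None:
        problems.append(f"unknown category '{line.category}'")
    elif line.amount > limit:
        problems.append(f"{line.category} amount {line.amount:.2f} exceeds limit {limit:.2f}")
    if line.amount > RECEIPT_REQUIRED_OVER and not line.has_receipt:
        problems.append("receipt required")
    return problems


report = [
    ExpenseLine("ana", "meals", 42.10, True),
    ExpenseLine("ana", "lodging", 310.00, True),
    ExpenseLine("ben", "ground_transport", 38.00, False),
]

# Clean lines are approved automatically; anything with a violation goes to a reviewer.
for line in report:
    issues = validate(line)
    status = "auto-approve" if not issues else "flag for review: " + "; ".join(issues)
    print(line.employee, line.category, status)
```

Notice what the code does not do. It never decides whether a $310 hotel night was justified. It only finds the lines that need that conversation.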

What Makes Something a Judgment Call?

Judgment shows up when the inputs are incomplete, the process requires weighing competing priorities, and the consequences play out over months or years. In every case below, AI does the prep work. The human makes the call.

A lender reviewing a borderline loan application. AI can score the borrower, pull credit history, calculate debt-to-income, and flag risk factors. Everything comes back borderline. But the decision to approve because the borrower just landed a three-year government contract that hasn't hit the financials yet? That's an underwriter who asked the right follow-up question and understood what the numbers couldn't yet show.

A CEO deciding whether to kill a product line. AI can show you declining revenue, rising support costs, and accelerating customer churn. The data says shut it down. But the decision to keep it because it's the entry point for your three largest enterprise accounts and losing it means losing them? That's someone who understands relationship dynamics the data doesn't capture.

A PE firm evaluating an acquisition target. AI can pull the financials, benchmark margins against comps, flag anomalies in revenue concentration, and score the target on 50 variables. The model says the target is fairly priced. But the decision to pay a 30% premium because the target's engineering team is the real asset and will accelerate your platform strategy? That's a human reading the room during management meetings, weighing cultural fit, and making a bet on people.

In each case, the data collection and analysis can and should be automated. The decision cannot. These decisions depend on context that lives in people's heads. They require weighing tradeoffs that don't reduce to a formula. And when they go wrong, someone needs to be accountable. "The algorithm decided" is not an answer your board, your customers, or your LPs will accept.

The Data Behind the Line

This isn't just theory. The numbers tell a clear story.

Asana's Anatomy of Work research, based on surveys of over 10,000 knowledge workers globally, found that people spend 60% of their workday on "work about work."[1] That includes chasing status updates, sitting in unnecessary meetings, duplicating tasks someone else already completed, and processing routine administrative work. Only 27% of their time goes to the skilled work they were hired to do.[2]

A 2025 survey of 3,000 U.S. office workers by Fyxer AI found that workers waste over five and a half hours per week on repetitive administrative tasks that could be automated. The study estimated this costs U.S. businesses $818 billion annually in lost productivity.[3] The top offenders? Drafting emails (34% of workers cited this), reading emails (31%), updating spreadsheets (19%), and preparing reports (14%).

For a mid-market company with 200 employees, even using conservative estimates, that's well over 20,000 hours a year on tasks a machine could handle. Automate half of that and you've freed up the equivalent of 5 to 10 full-time employees. No headcount increase. No recruiting costs. No benefits expense. Just capacity that already exists in your organization, locked up in repetitive work.
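The arithmetic behind that estimate can be checked with a quick back-of-the-envelope calculation. This is a sketch under stated assumptions. The 5.5 hours per week is the Fyxer AI figure cited above. The 48 working weeks per year and the 2,000 hours per full-time employee are our own assumptions, not numbers from either survey.

```python
# Back-of-the-envelope estimate of capacity tied up in repetitive admin work.
# HOURS_PER_WEEK comes from the Fyxer AI survey; WORKING_WEEKS and
# HOURS_PER_FTE are illustrative assumptions, not figures from any survey.
EMPLOYEES = 200
HOURS_PER_WEEK = 5.5
WORKING_WEEKS = 48      # assumption
HOURS_PER_FTE = 2_000   # assumption: roughly 40 hours x 50 weeks


def annual_hours(employees: int, hours_per_week: float, weeks: int) -> float:
    """Total hours per year spent on repetitive tasks across the company."""
    return employees * hours_per_week * weeks


def fte_freed(hours: float, automated_share: float, hours_per_fte: float) -> float:
    """Full-time equivalents released if `automated_share` of those hours is automated."""
    return hours * automated_share / hours_per_fte


survey_based = annual_hours(EMPLOYEES, HOURS_PER_WEEK, WORKING_WEEKS)
print(f"Survey-based hours per year: {survey_based:,.0f}")

# Compare the conservative 20,000-hour floor with the survey-based figure,
# automating half of the hours in each case.
for label, hours in [("20,000-hour floor", 20_000), ("survey-based", survey_based)]:
    print(f"{label}: automating half frees {fte_freed(hours, 0.5, HOURS_PER_FTE):.1f} FTEs")
```

Under these assumptions, the survey figure works out to 52,800 hours a year. Automating half of the hours frees between 5.0 FTEs (using the 20,000-hour floor) and 13.2 FTEs (using the full survey figure). The 5-to-10 range sits inside those bounds, toward the cautious end. If your calendar looks different, change `WORKING_WEEKS` or `HOURS_PER_FTE` to match it.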

Meanwhile, McKinsey's 2025 State of AI report found that only about one-third of organizations have actually scaled AI beyond the pilot stage. The companies that break through share a common trait: they redesign workflows around AI rather than layering it onto existing processes.[4] And critically, high performers are more likely to have defined processes for determining when model outputs need human validation. In other words, they know where the line is.

The Line in Practice

Here's what it looks like when a company gets this right.

Before: A 150-person professional services firm has three full-time people processing invoices. Each invoice takes 8-12 minutes to match, code, and enter. Error rate runs about 4%. Month-end close takes 5 days.

After: AI handles invoice capture, matching, and coding. A human reviews exceptions and approves batches. Processing time drops to under 2 minutes per invoice. Error rate drops below 1%. Month-end close takes 2 days. Those three people now spend their time on cash flow analysis and vendor negotiations, work that actually requires thinking.

That's the line drawn correctly. The repeatable part (capture, match, code) is automated. The judgment part (exception handling, vendor relationships, cash management strategy) stays with humans.

This is what redesigning the workflow around AI looks like.
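The invoice before-and-after splits the workflow into a machine part and a human part. Below is a minimal sketch of that split. It assumes a simple two-way match (invoice against purchase order) with a hypothetical 2% amount tolerance. The class and function names (`PurchaseOrder`, `Invoice`, `two_way_match`) and the sample vendors are illustrative. The capture and coding steps are left out.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical tolerance: auto-post when the invoice is within 2% of the PO amount.
AMOUNT_TOLERANCE = 0.02


@dataclass
class PurchaseOrder:
    po_number: str
    vendor: str
    amount: float


@dataclass
class Invoice:
    invoice_id: str
    vendor: str
    po_number: Optional[str]
    amount: float


def two_way_match(invoice: Invoice, open_pos: dict) -> tuple:
    """Match an invoice to its PO. Returns (status, reason); status is 'posted' or 'exception'."""
    po = open_pos.get(invoice.po_number) if invoice.po_number else None
    if po is None:
        return "exception", "no matching PO"
    if po.vendor != invoice.vendor:
        return "exception", "vendor mismatch"
    variance = abs(invoice.amount - po.amount) / po.amount
    if variance > AMOUNT_TOLERANCE:
        return "exception", f"amount off by {variance:.1%}"
    return "posted", "matched within tolerance"


open_pos = {
    "PO-100": PurchaseOrder("PO-100", "Acme", 1_000.00),
    "PO-101": PurchaseOrder("PO-101", "Globex", 500.00),
}
invoices = [
    Invoice("INV-1", "Acme", "PO-100", 1_010.00),  # 1% over: within tolerance
    Invoice("INV-2", "Globex", "PO-101", 560.00),  # 12% over: exception
    Invoice("INV-3", "Initech", None, 75.00),      # no PO reference: exception
]

# Clean matches are posted automatically; everything else lands in a human review queue.
review_queue = []
for inv in invoices:
    status, reason = two_way_match(inv, open_pos)
    if status == "exception":
        review_queue.append((inv.invoice_id, reason))

print("Routed to a human:", review_queue)
```

The code never tries to resolve an exception. It only decides whether one exists. Working out why a vendor billed 12% over the PO may take a phone call, and that is exactly the relationship work that stays with people.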

Now here's what it looks like when a company gets it wrong.

The mistake: Same firm decides to use AI to evaluate which clients to pursue. The model scores prospects based on historical win rates, deal size, and industry. It recommends dropping a low-scoring prospect.

What the model missed: The prospect is a company about to go through a PE-backed acquisition. The partner leading the relationship knows this because she had dinner with its new CFO last week. The deal, if won, would open three more engagements across the PE firm's portfolio.

The model did its job. It scored what it could see. But the decision required context the model couldn't access: a relationship, a market signal, and a strategic opportunity that hadn't entered any system yet. That's a judgment call. The model can inform it, but the human makes it.

Why Discipline Matters More Than Technology

The temptation is always to push AI further up the chain. The technology keeps getting better. The vendors keep promising more. And every conference keynote shows a demo that makes it look like AI can do everything.

It can't. Not yet. Maybe not ever for certain types of decisions.

The most important thing isn't what your AI can do. It's knowing where to stop.

This requires discipline. It means saying no to the vendor who promises their model can "optimize your strategic decision-making." It means telling your board that AI will handle the repeatable 60% and make your people more effective on the other 40%, not that it will replace the people making the hard calls.

It also means being honest about what "judgment" actually means in your organization. Some tasks that feel like judgment calls are actually repeatable processes wrapped in ego. If your team spends hours every month manually categorizing expenses because "only a human can understand the nuance," you might want to test that assumption.
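One way to test that assumption is to run a few simple rules over expenses your team has already categorized. Then measure how often the rules fire and how often they agree with the human. The sketch below assumes hypothetical keyword rules and a small made-up history. A real test would use your own vendors, categories, and several months of records.

```python
# Hypothetical keyword rules; a real test would use your own vendors and categories.
RULES = {
    "uber": "travel",
    "lyft": "travel",
    "marriott": "lodging",
    "hilton": "lodging",
    "starbucks": "meals",
    "aws": "software",
}


def categorize(description: str):
    """Return the first matching category, or None if no rule applies."""
    text = description.lower()
    for keyword, category in RULES.items():  # dicts preserve insertion order
        if keyword in text:
            return category
    return None  # no rule fired: leave this line for a person


# Past expenses paired with the category a human chose (made-up sample, not real data).
history = [
    ("UBER TRIP 4471", "travel"),
    ("Marriott Chicago", "lodging"),
    ("STARBUCKS #1123", "meals"),
    ("AWS invoice Jan", "software"),
    ("Hilton Garden Inn", "lodging"),
    ("Uber Eats team lunch", "meals"),
    ("Client dinner - Nobu", "meals"),
]

covered = [(desc, human) for desc, human in history if categorize(desc) is not None]
agree = sum(categorize(desc) == human for desc, human in covered)
print(f"Rules covered {len(covered)}/{len(history)} items; "
      f"agreed with the human on {agree}/{len(covered)} of those.")
for desc, human in covered:
    if categorize(desc) != human:
        print(f"Disagreement: {desc!r} -> rule says {categorize(desc)}, human said {human}")
```

On this toy sample, the rules cover 6 of 7 lines and agree with the human on 5 of those 6. The one disagreement is a food delivery charge that the rules filed as travel. Lines like that one deserve a person's attention. The rest may not.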

Draw the line. Be honest about where it sits. Automate below it. Invest in your people above it.

That's the whole strategy.

Sources

- [1] Asana, "How Work About Work Gets in the Way of Real Work." Anatomy of Work Index; survey of 10,000+ knowledge workers across 7 countries. asana.com

- [2] Asana, "The Way We Work Isn't Working." Anatomy of Work Index. asana.com

- [3] Fyxer AI, "Office workers wasting hours on repetitive admin tasks." February 2025; survey of 3,000 U.S. adults and office workers. themirror.com

- [4] McKinsey & Company, "The State of AI in 2025: Agents, Innovation, and Transformation." Global survey of 1,491 participants. mckinsey.com


Ready to draw the line in your organization?

I help mid-market companies separate the automatable from the judgment calls, and build AI that shows up in EBITDA. Let's talk about where your line is.
