
Every vendor in your inbox has it. None of them agree on what it means. And that confusion is driving real spending decisions.

Earlier this week I wrote about how nobody agrees on what "agentic AI" actually means. Every software vendor, consulting firm, and tech publication has a different definition. The confusion isn't academic. Companies are committing budgets based on claims they can't verify.

This post is the practical follow-up. I'm going to explain what agentic AI is, what it isn't, and why you should be excited about it and skeptical of it at the same time.

Full disclosure: I've worked with AI and machine learning for over 20 years, well before it became a buzzword. I love the technology. I also know what it can't do. Both of those things are true at the same time.

Start with what you already know

A traditional program follows rules that someone wrote. If this, then that. The person who built it anticipated every scenario and coded a response for each one. The program does exactly what it was told, nothing more, nothing less.

A chatbot (what most people interact with today) takes that a step further. You ask a question. It generates an answer. One turn. You stay in control of what happens next.

An AI agent is different. You give it a goal, not a single question. It figures out the steps. It takes actions. It handles problems along the way. And it delivers a result.

Here's a concrete example.

Chatbot: You ask it to draft an email to a client. It drafts the email. You review it. You send it.

AI Agent: You tell it to schedule a meeting with three people next week. It checks calendars, proposes times, sends invitations, handles the back-and-forth when someone can't make it, and confirms when done. You get a calendar invite. The work happened without you managing each step.

One takes a single step. The other completes a workflow.

What makes something an AI agent

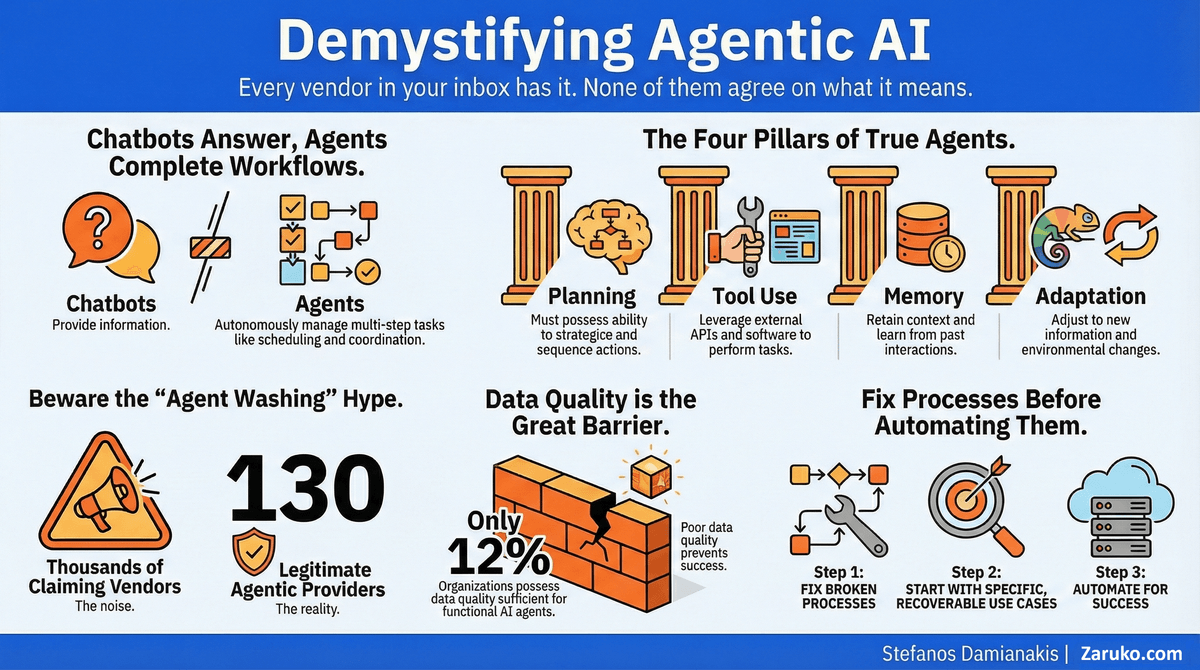

Four capabilities separate an agent from a chatbot:

Planning. It breaks a goal into steps. Not just "answer this question" but "here are the six things I need to do to get you an answer."

Tool use. It can take actions in the real world: send emails, query databases, update systems, search the web. A basic chatbot just talks. A true agent can independently take action.

Memory. It tracks progress across steps. It knows what it already tried, what worked, and what didn't. This can be short-term workflow state (remembering where it is in a multi-step task) or longer-term persistent memory, depending on the system. Most chatbots don't maintain this kind of working context across steps.

Adaptation. When something goes wrong, it adjusts. If the first approach fails, it tries another one. A traditional program just throws an error.

Not every system that calls itself an "agent" has all four. That's where the marketing games begin.
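To make the four capabilities concrete, here is a minimal sketch of an agent loop. It is an illustration under my own assumptions, not how any particular product works: the tool functions, the fixed two-step plan, and the `Agent` class are all hypothetical, and a real system would ask a model to produce the plan instead of hard-coding it.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical tools: stand-ins for real actions such as querying a calendar.
def check_primary_calendar(request: str) -> str:
    raise RuntimeError("primary calendar unavailable")


def check_backup_calendar(request: str) -> str:
    return f"free slot found for: {request}"


def send_invite(request: str) -> str:
    return f"invite sent for: {request}"


@dataclass
class Agent:
    # Each step name maps to an ordered list of tools to try.
    tools: dict[str, list[Callable[[str], str]]]
    # Memory: a running log of what was tried and what happened.
    memory: list[str] = field(default_factory=list)

    def plan(self, goal: str) -> list[str]:
        # Planning: break the goal into steps (fixed here for illustration).
        return ["find_slot", "send_invite"]

    def run(self, goal: str) -> list[str]:
        for step in self.plan(goal):
            for tool in self.tools[step]:
                try:
                    result = tool(goal)  # Tool use: take an action.
                    self.memory.append(f"{step}: ok via {tool.__name__}: {result}")
                    break
                except RuntimeError as err:
                    # Adaptation: record the failure and try the next option.
                    self.memory.append(f"{step}: {tool.__name__} failed ({err})")
            else:
                # Every tool for this step failed, so stop the workflow.
                self.memory.append(f"{step}: gave up")
                break
        return self.memory


agent = Agent(
    tools={
        "find_slot": [check_primary_calendar, check_backup_calendar],
        "send_invite": [send_invite],
    }
)
log = agent.run("team sync")
for entry in log:
    print(entry)
```

The log shows the first calendar lookup failing, the backup succeeding, and the invite going out. Remove any one of the four pieces and you can see what is lost: without the fallback list there is no adaptation, without the log there is no memory.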

Now for the word "agentic"

This is where I get skeptical. "Agentic" has become the most overused word in enterprise software since "synergy." Every vendor who bolts an LLM onto their existing product calls it "agentic."

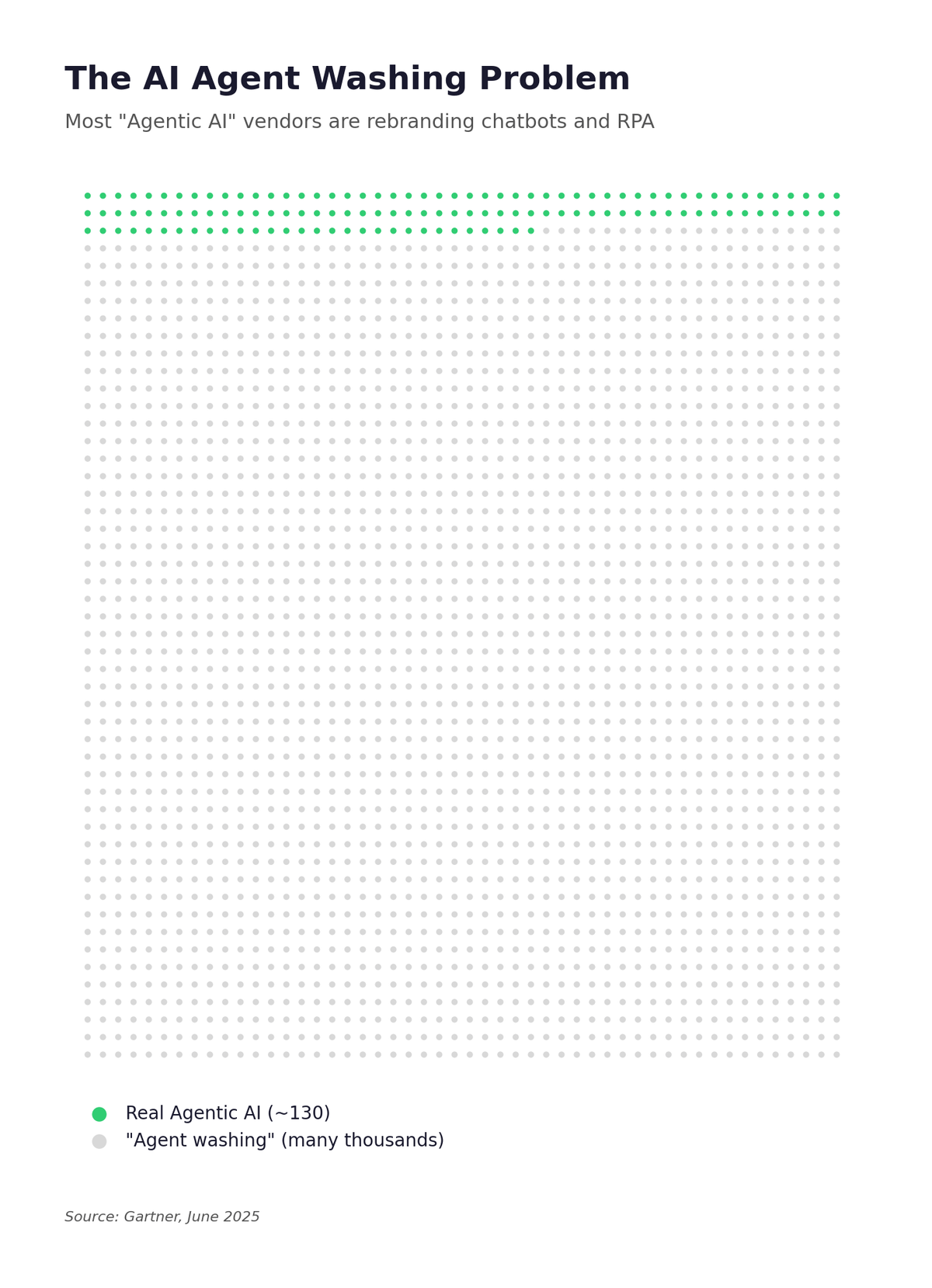

Gartner was blunt about this. Of the thousands of vendors claiming agentic AI capabilities, only about 130 are legitimate. [1] The rest are doing what Gartner calls "agent washing," which is rebranding chatbots and RPA tools without adding real autonomy.

The Agent Washing Problem: only ~130 legitimate agentic providers out of ~2,500 claiming the label.

The useful meaning of "agentic" is simple: a system that has some agent-like qualities without being a full autonomous agent. Think of it as a spectrum. A chatbot that can look up your order status is more agentic than one that just answers FAQs. But it's not an agent. It's a program with an LLM handling some of the steps.

The problem is that the word has been stretched to mean everything, which means it now means nothing. When a vendor tells you their product is "agentic," the right response is: which of the four capabilities does it actually have?
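One way to keep that question honest is to write the answer down as data. The sketch below is a hypothetical checklist, not a formal scoring method. The `Capabilities` class is my own invention, and treating the order-status chatbot as having tool use only is my reading of that example.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Capabilities:
    planning: bool
    tool_use: bool
    memory: bool
    adaptation: bool

    def present(self) -> list[str]:
        # List the capabilities a product actually demonstrates.
        return [f.name for f in fields(self) if getattr(self, f.name)]


# Assumption: an order-status chatbot calls one lookup tool and nothing more.
order_lookup_bot = Capabilities(
    planning=False, tool_use=True, memory=False, adaptation=False
)

print(order_lookup_bot.present())
print(f"{len(order_lookup_bot.present())} of 4 capabilities")
```

A product with one of the four sits low on the spectrum. That doesn't make it useless, but it does make "agentic" a generous label.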

The data tells a clear story: excitement is high, results are mixed

Here's where the rubber meets the road.

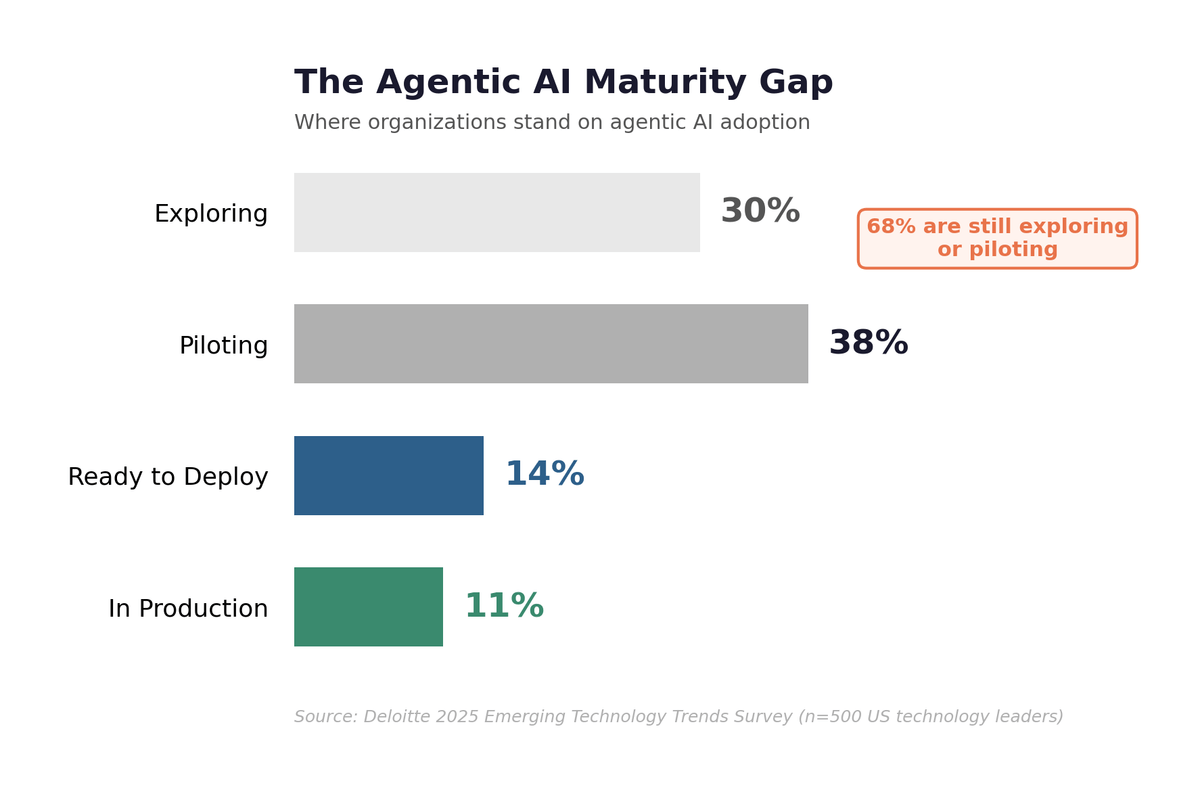

Deloitte's 2025 Emerging Technology Trends study surveyed organizations on their agentic AI maturity. The findings are worth sitting with: [2]

- 30% are exploring agentic options

- 38% are piloting solutions

- 14% have solutions ready to deploy

- 11% are actively using agents in production

Read that again. 68% of companies are either exploring or piloting. Only 11% have agents running in production. That's a lot of experiments and not many results. That gap between "piloting" and "production" is where most AI budgets quietly go to die.

The Agentic AI Maturity Gap: 68% of organizations are still exploring or piloting.
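If you want to check the arithmetic yourself, here is a quick sketch using only the four percentages listed above. Note that they add up to 93%, and I am not making any claim here about what the remaining 7% of respondents said.

```python
# Shares quoted above (percent of surveyed organizations).
stages = {
    "exploring": 30,
    "piloting": 38,
    "ready to deploy": 14,
    "in production": 11,
}

pre_production = stages["exploring"] + stages["piloting"]
listed_total = sum(stages.values())
not_listed = 100 - listed_total

print(f"exploring or piloting: {pre_production}%")
print(f"in production: {stages['in production']}%")
print(f"listed stages total: {listed_total}% (not listed: {not_listed}%)")
```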

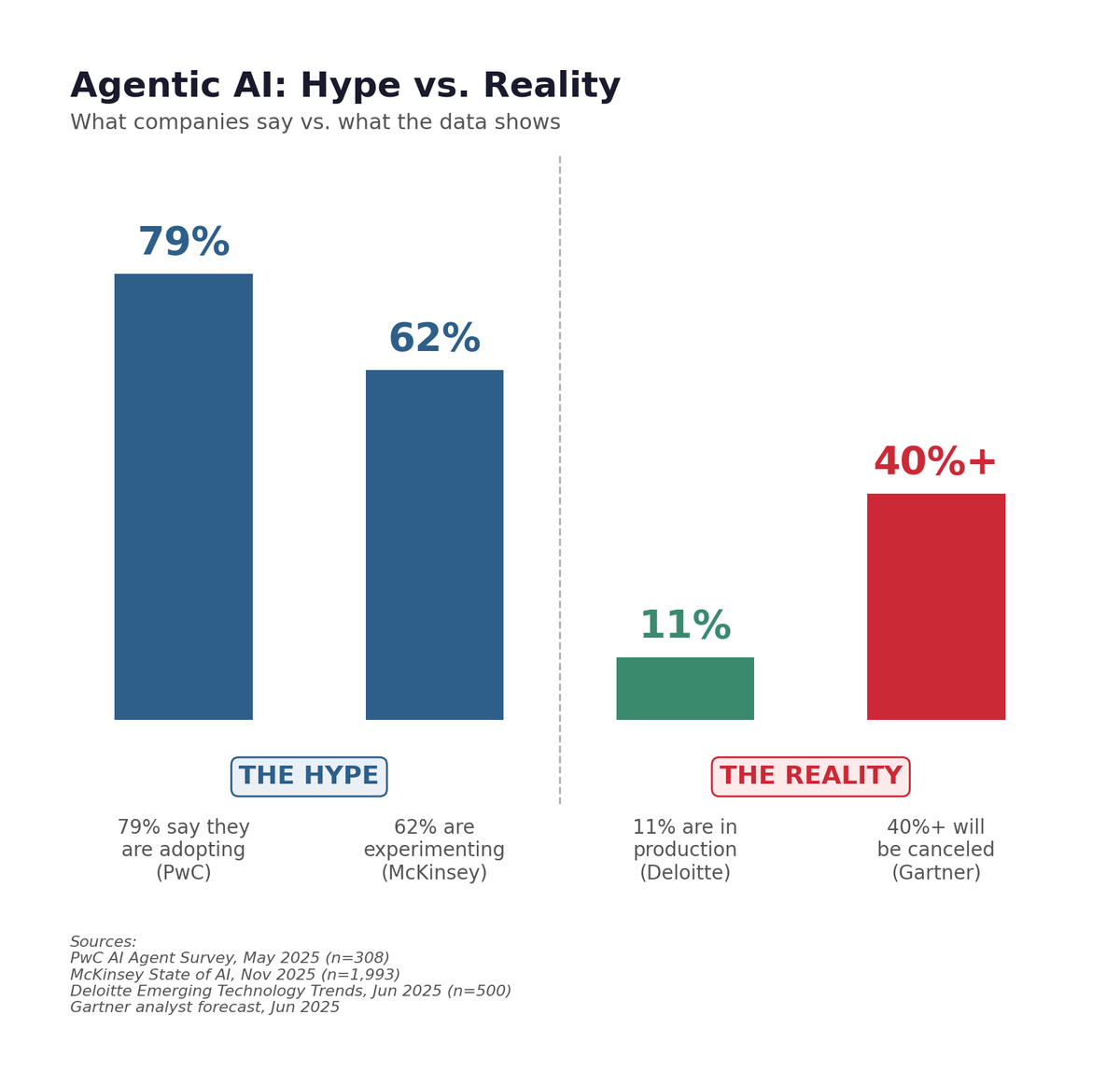

McKinsey's 2025 global AI survey found a similar pattern. 62% of organizations are at least experimenting with AI agents, but two-thirds have not begun scaling AI across the enterprise. Only 39% report any impact on EBIT at the enterprise level. [3]

And Gartner's prediction is the sharpest: over 40% of agentic AI projects will be canceled by the end of 2027, due to escalating costs, unclear business value, or inadequate risk controls. [1]

The pattern is clear. Companies are rushing to experiment. Most are not getting to scaled, production-level results. And a large share will eventually walk away.

Agentic AI: Hype vs. Reality. What companies say vs. what the data shows.

Why so many projects fail

Three reasons keep coming up in the research.

Broken workflows. Companies are asking AI agents to automate processes that are already a mess. An agent that automates a bad process just produces bad results faster. Henry Ford made the point in 1922: "There is no progress in merely finding a better way to do a useless thing." [6] A century later, the principle hasn't changed.

Data problems. A 2025 study by Precisely and Drexel University found that only 12% of organizations have data of sufficient quality and accessibility for AI. [4] Nearly 70% ranked data governance as their top challenge. If your data is unreliable, an agent working with that data will be unreliable. Garbage in, garbage out. (If that sounds familiar, I'll be writing more about data readiness soon.)

Wrong tool for the job. Sometimes a simple rule-based automation is the right answer. Not every problem needs an AI agent. When the task is predictable, repeatable, and well-defined, traditional automation is cheaper, faster, and more reliable. Agents shine when the task requires judgment, adaptation, and handling unexpected situations.

What AI agents can't do

I love this technology. I've spent two decades working with it. And I'll tell you what it can't do.

AI agents can't replace human judgment on high-stakes decisions. They can prepare the analysis, surface the options, and flag the risks. The decision still needs a person.

They can't operate reliably without supervision. The human-in-the-loop model, where a person reviews and approves before critical actions are taken, remains the standard for good reason. A Zapier survey of 525 U.S. enterprise executives found that 38% use human-in-the-loop as their primary approach to agent management. Only 20% let agents operate with minimal oversight. [5]
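The human-in-the-loop idea is simple enough to sketch in a few lines. This is a minimal illustration under my own assumptions: `run_with_approval` and `cautious_reviewer` are hypothetical names, and in a real deployment the approval step would be a person responding through a review queue, not a function.

```python
from typing import Callable


def run_with_approval(
    name: str,
    action: Callable[[], str],
    is_critical: bool,
    approve: Callable[[str], bool],
) -> str:
    """Run an action, pausing for human sign-off when it is critical."""
    if is_critical and not approve(name):
        return f"{name}: blocked pending human review"
    return action()


# Hypothetical reviewer policy: a stand-in for a person who rejects payments.
def cautious_reviewer(name: str) -> bool:
    return "payment" not in name


# Low-stakes action: runs without asking anyone.
draft = run_with_approval(
    "draft_reply", lambda: "reply drafted", False, cautious_reviewer
)

# Critical action: held until a human approves it.
payment = run_with_approval(
    "send_payment", lambda: "payment sent", True, cautious_reviewer
)

print(draft)
print(payment)
```

The design choice that matters is where you draw the `is_critical` line. Draw it too low and the agent saves no time. Draw it too high and mistakes go out the door unreviewed.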

They can't fix bad processes. If your workflow is broken, an agent will break it faster. Fix the process first, then automate it.

And they don't understand what they're doing. They process information, identify patterns, and execute steps. That's powerful, but it's not the same as understanding context, intent, or consequences. The distinction matters when you're deciding how much autonomy to give them.

What AI agents are actually good at

With all those caveats, agents are genuinely useful for specific types of work. The simple rule (automate the repeatable, keep humans on judgment calls) applies here:

High-volume, repeatable tasks that need some judgment. Invoice processing, document review, customer inquiry routing. Not fully predictable, but not high-stakes either.

Multi-step workflows where the steps are well-defined but tedious. Data collection across multiple sources, report assembly, scheduling coordination.

Monitoring and alerting. Watching for anomalies across large datasets and flagging issues for human review. Agents are tireless and consistent. Humans are not.

Tasks where speed matters more than perfection. First-draft content, initial code review, preliminary research. The output needs human review, but getting 80% of the way there in seconds instead of hours is valuable.

The common thread: agents work best when the cost of a mistake is low and the cost of human time is high.

One example that shows the pattern

Vercel, a $9.3 billion developer platform company, wanted to scale its sales development operation without scaling headcount. They didn't start with a grand AI transformation. They started by documenting what their best sales development rep actually did, step by step. Engineers shadowed the top performer for six weeks and mapped the workflow in detail.

Then they built an AI agent to replicate that specific, documented process. The result: a 10-person SDR team was reduced to one person plus AI agents. The agents handle inbound lead qualification, follow-ups, and routine outreach. The human handles exceptions and relationship building.

Vercel's COO put it simply: "If you can document a workflow, it's now pretty straightforward to have an agent do it." [7]

Two things make this case instructive. First, they fixed and documented the process before automating it. Second, they started with one specific workflow, not "transform sales." That's the pattern that works.

How to evaluate what vendors are selling you

When a vendor says "agentic AI," ask these questions:

- Which of the four capabilities (planning, tool use, memory, adaptation) does your product actually have?

- What happens when the agent makes a mistake? What's the fallback?

- Can you show me a production deployment with measurable results, not a demo?

- Does this need to be an AI agent, or would a simpler automation work?

That last question is the most important one. The best technology decision is sometimes the simplest one.

The pattern across all the research

Step back and look at what the data is telling us across every source.

High experimentation, low production deployment. About two-thirds of companies are exploring or piloting, but only about one in ten has agents in production.

High cancellation prediction. Gartner expects over 40% of projects to be canceled by the end of 2027.

A massive vendor credibility gap. Only about 130 of the thousands of "agentic" vendors are legitimate, by Gartner's count.

And a data readiness gap underneath it all. Just 12% of organizations have data ready for AI.

These aren't separate problems. They're connected. Companies are experimenting with technology they can't fully evaluate, from vendors they can't fully trust, on data that isn't ready, without clear metrics for success. That combination explains Gartner's predicted cancellation rate of over 40% better than any single factor.

The bottom line

Agentic AI is real. The capabilities are genuinely new and genuinely useful. Companies that figure out where agents fit into their operations will get meaningful productivity gains.

But the hype is running ahead of the reality. Most companies are still experimenting. Gartner predicts that over 40% of projects will be canceled by the end of 2027. And the word "agentic" has been stretched beyond recognition by marketing departments.

The smart approach: start with a specific, well-defined problem. Make sure your data and processes are ready. Pick a use case where mistakes are recoverable. Measure the results. Then decide whether to expand.

That's not as exciting as "autonomous AI agents will transform your business." It's more honest, though. And in my experience, honest assessments lead to better outcomes than hype cycles.

Sources

- [1] Gartner, "Gartner Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027," press release, June 25, 2025. Includes "agent washing" data and vendor count. gartner.com

- [2] Deloitte, "2025 Emerging Technology Trends" survey of 500 US technology leaders, June-July 2025. Maturity data also referenced in Deloitte's "Agentic AI Strategy," Deloitte Insights, December 2025. deloitte.com

- [3] McKinsey, "The State of AI in 2025," November 2025. Global survey of 1,993 participants in 105 nations. mckinsey.com

- [4] Precisely and Drexel University LeBow College of Business, "2025 Outlook: Data Integrity Trends and Insights," 2025. precisely.com

- [5] Zapier, "State of Agentic AI Adoption Survey," December 2025. Survey of 525 U.S. C-suite executives at companies with 1,000+ employees. zapier.com

- [6] Henry Ford, Ford News, p. 2, November 15, 1922. Verified by The Henry Ford museum archives. thehenryford.org

- [7] Vercel COO Jeanne DeWitt Grosser on agent deployment strategy. tomtunguz.com
