The current consensus on LLM wrappers goes something like this: if your company is just wrapping an existing model with a user interface, you are not building a real business. The model providers will add your features, your margins will collapse, and you will be left with nothing.

A Google executive who leads the company's global startup organization put it plainly in February 2026: "If you're really just counting on the back-end model to do all the work and you're almost white-labeling that model, the industry doesn't have a lot of patience for that anymore."[1]

He is right about thin wrappers. He is wrong if the conclusion is that the application layer does not matter.

We have been here before.

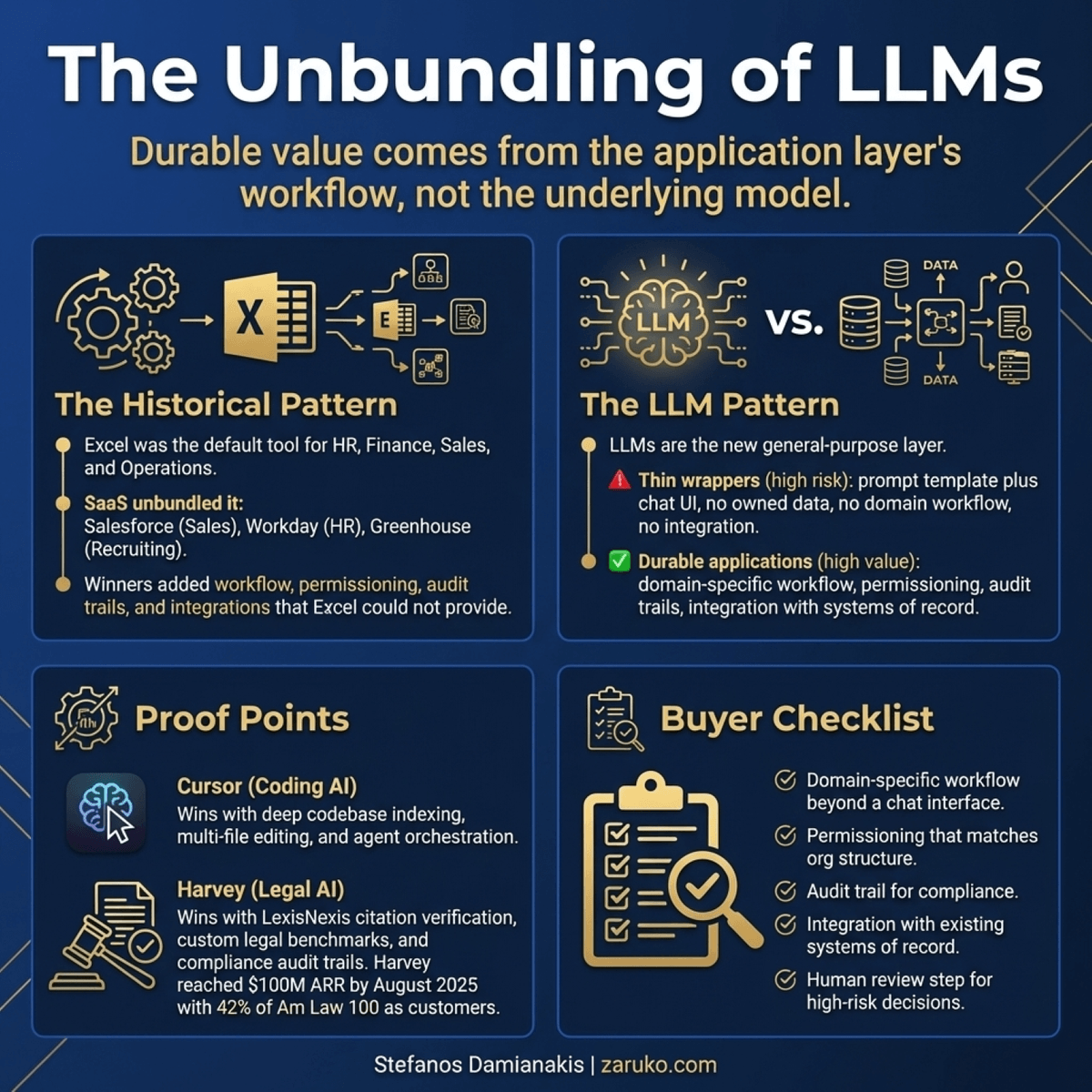

The Unbundling of Excel

For roughly two decades, Excel did everything. Finance teams tracked budgets in it. HR teams managed recruiting pipelines in it. Sales teams ran their entire CRM in it. Operations teams scheduled production in it. If you needed to organize information and run calculations, Excel was the answer, and the question was why you would need anything else.

Then the unbundling happened. Starting in the early 2000s and accelerating through the 2010s, SaaS companies were built around every single function that Excel performed. Salesforce replaced sales spreadsheets. Workday replaced HR spreadsheets. Greenhouse replaced recruiting spreadsheets. Expensify replaced expense spreadsheets. Each of these companies took one thing Excel could do and built an entire product around it.

The concept, which Tomasz Tunguz named "the unbundling of Excel" in a 2017 post, produced billions of dollars in enterprise value.[2]

But here is what the critics of those early SaaS companies missed. The ones that won did not simply replicate what Excel could do. They added something Excel could not provide: workflow, permissioning, collaboration, audit trails, integrations, and domain-specific structure. You could track recruiting in Excel, but you could not configure it so that hiring managers saw candidate notes while compensation data stayed invisible to them. You could not build a structured interview workflow that triggered automatically at each stage. You could not integrate it with your job boards, your calendar, your background check provider.

The SaaS companies that failed were the ones that truly were just Excel with a nicer interface. The ones that built real workflow around the function did not just survive. They became the standard.

The Unbundling of LLMs Follows a Familiar Pattern

What is happening now with AI resembles that pattern, one level up.

LLMs can address a remarkably wide range of knowledge-work tasks. They can write, summarize, analyze, code, translate, classify, extract, draft, review, and respond, all from the same underlying model. The criticism of LLM wrappers often sounds like the criticism of early SaaS: "Why do you need a special product? The underlying capability is already there."

The answer is the same as it was with Excel. A general-purpose capability, available to everyone, is not the same as a workflow built around that capability for a specific domain. The model is the foundation. In many enterprise categories, the application layer is where durable value gets created.

Cursor is a coding assistant built on top of frontier language models. A skeptic could call it a wrapper around Claude, GPT, or Composer/Kimi K2.5. What Cursor actually built is a development environment with deep codebase indexing, context-aware prompting, multi-file editing, an evaluation framework for model quality on coding tasks, and an agent framework that orchestrates LLM calls for specific programming workflows.[3] It emerged as one of the leading AI coding tools in 2025 not because of the underlying model but because of what was built around it.

Harvey is a legal AI platform also built on top of frontier models. By August 2025, Harvey reported more than $100 million in annual recurring revenue, with 42% of the Am Law 100 as customers. It is not struggling under the margin pressure critics predicted for wrappers, and it is one of the few AI companies that can point to real revenue, not just pilots.[4] What Harvey built is citation verification connected to LexisNexis, custom legal benchmarks, a development process that embeds legal domain experts throughout product decisions, structured workflows for specific legal tasks like contract review and motion drafting, and an audit trail that meets the reliability bar that legal practice requires. None of that came from the underlying model. All of it came from the application layer.

The Distinction That Actually Matters

Y Combinator partners made this point well: calling an LLM startup a "wrapper" around OpenAI is like calling a SaaS company a "MySQL wrapper." Technically accurate. Completely missing the point.[5]

Aircall and Talkdesk built billion-dollar businesses on top of Twilio's telephony infrastructure. They did not reinvent how phone calls work. They built workflow, routing, analytics, CRM integration, and team management around that infrastructure. The underlying capability was a commodity. The application layer was the product.

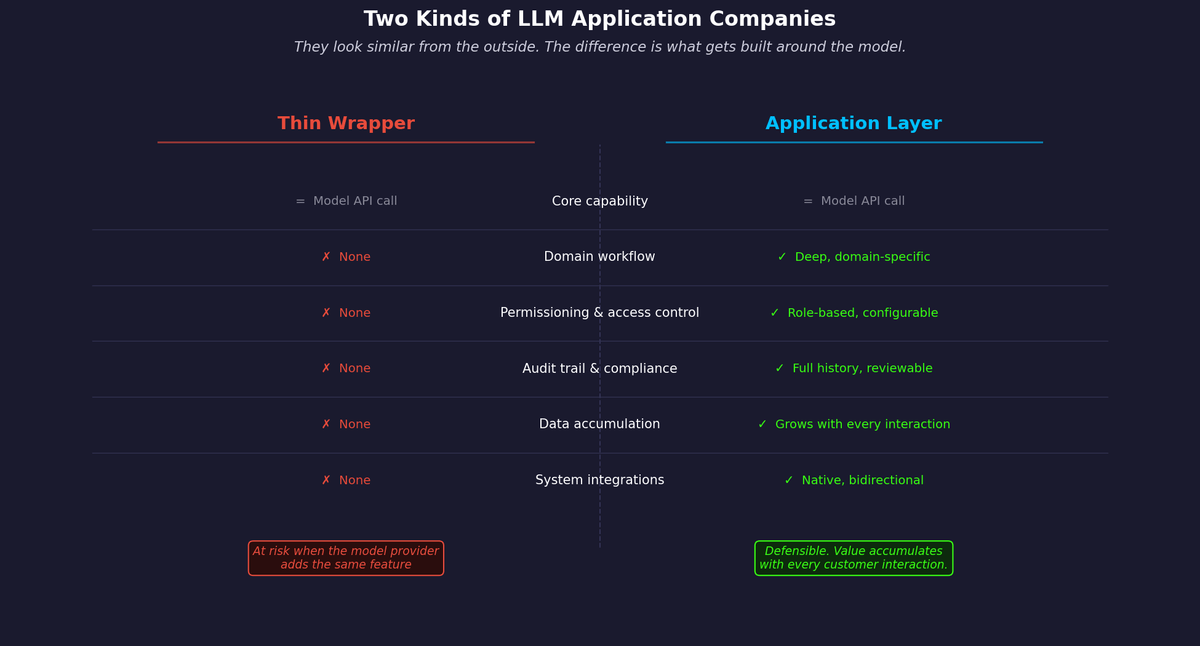

The same distinction applies now. There are two kinds of LLM application companies, and they look similar from the outside.

Figure 1: Two kinds of LLM application companies. They look similar from the outside. The difference is what gets built around the model.

The first kind does the minimum. They take a general-purpose model, add a prompt template and a chat interface, and sell access. They have no owned data. They have no domain-specific workflow. They have no reason to exist that the model provider cannot eliminate with a product update. These companies are clearly at risk, and the criticism is fair.

The second kind builds what the model provider is not building and probably will not build: the domain-specific workflow, the permissioning model, the audit trail, the integration with existing systems of record, the structured output format that a compliance team can actually use, the human review step that a regulated industry requires. This layer is not a commodity. It requires domain knowledge, customer relationships, and product development discipline. It takes years to build, not weeks.
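To make the contrast concrete, here is a minimal Python sketch of the two kinds. It is an illustration under stated assumptions, not how any company named in this piece is actually built: `fake_model`, `thin_wrapper`, `ReviewWorkflow`, the role names, and the contract-review scenario are all hypothetical, and the model call is a stand-in for whatever foundation-model API a real product would use.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# Hypothetical stand-in for a foundation-model call; no real provider API is used.
ModelFn = Callable[[str], str]


def fake_model(prompt: str) -> str:
    """Placeholder model that always returns the same canned answer."""
    return "No issues found."


def thin_wrapper(model: ModelFn, clause: str) -> str:
    """First kind: a prompt template in front of the model, and nothing else."""
    return model(f"Review this contract clause:\n{clause}")


@dataclass
class ReviewWorkflow:
    """Second kind: the same model call, surrounded by application-layer controls."""
    model: ModelFn
    allowed_roles: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def _record(self, user: str, event: str) -> None:
        # Audit trail: every action is logged with who did it and when.
        self.audit_log.append({
            "user": user,
            "event": event,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def draft(self, user: str, role: str, clause: str) -> dict:
        # Permissioning: only approved roles may run a review.
        if role not in self.allowed_roles:
            self._record(user, "denied")
            raise PermissionError(f"role {role!r} may not review contracts")
        text = self.model(f"Review this contract clause:\n{clause}")
        self._record(user, "drafted")
        # Structured output that starts in a pending state, awaiting a human.
        return {"clause": clause, "review": text, "status": "pending_review"}

    def approve(self, reviewer: str, draft: dict) -> dict:
        # Human review step: nothing is final until a person signs off.
        self._record(reviewer, "approved")
        return {**draft, "status": "approved", "approved_by": reviewer}
```

Both paths send the same prompt to the same model. The difference is everything around that call: who is allowed to make it, what gets recorded, and who signs off before the output counts. Even in this toy version, the second path is the one a compliance team could work with.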

The model providers are moving aggressively into applications. OpenAI, Anthropic, and Google all have application efforts. They will commoditize some of what the thin-wrapper companies built. But they have historically been less likely to build the deep vertical workflow that Harvey built for BigLaw or that Cursor built for professional developers. The economics of serving every vertical at depth tend not to favor the platform. This is the same dynamic driving the return of point solutions in enterprise AI.

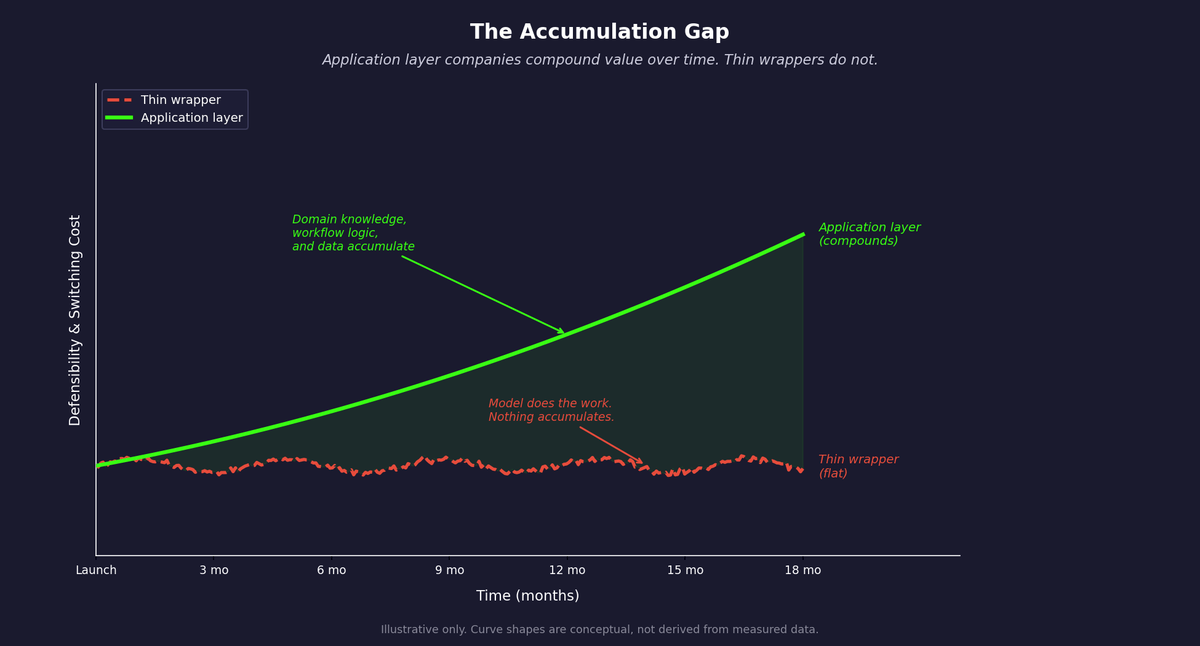

Figure 2: The Accumulation Gap. Application layer companies compound domain knowledge, workflow, and data over time. Thin wrappers do not. Curve shapes are illustrative, not measured — but the direction is well supported by how enterprise software switching costs have historically worked.

The unbundling of Excel did not produce better spreadsheets. It produced applications whose domain knowledge, workflows, data, and metadata compounded over time. Salesforce is not a spreadsheet with more features. It is a system of record that knows your customers, your pipeline, your history. That accumulated knowledge is what makes it hard to leave. The same pattern is playing out now. Cursor accumulates context about your codebase. Harvey accumulates knowledge about your firm's legal workflows and document history. The LLM applications that win will be the ones that accumulate domain knowledge and data that the underlying model cannot replicate.

What This Means for Enterprise Buyers

If you are evaluating AI vendors, the wrapper question is a useful filter but not a disqualifying one. The right question is not whether the product uses a foundation model. It is what is built around that model.

Does the product have workflow specific to your domain, not just a chat interface that accepts prompts? Does it have permissioning that matches how your organization actually controls information? Does it maintain an audit trail you can use for compliance or review? Does it integrate with the systems your team already uses, or does it require them to change their workflow to adopt it? Does it have a human review step built in for decisions that carry meaningful risk?

A product that answers yes to those questions is not a wrapper in the pejorative sense, regardless of which model runs underneath it. A product that answers no is at risk regardless of how distinct it claims its technology to be.

The unbundling of LLMs is going to create real companies, the same way the unbundling of Excel created Salesforce, Workday, and Greenhouse. The ones that survive will be the ones that built genuine workflow around a general-purpose capability, not the ones that just put a better interface on top of it.

Sources

1. Darren Mowry, VP Google Startup Organization, quoted in TechCrunch, February 2026. techcrunch.com.

2. Tomasz Tunguz, "The Unbundling of Excel," May 2017. tomtunguz.com. Popularized further by Packy McCormick, "Excel Never Dies," Not Boring, March 2021. notboring.co.

3. Shrivu Shankar, "How Cursor AI IDE Works," March 2025. blog.sshh.io.

4. Takafumi Endo, "How Harvey Built Trust in Legal AI," Medium, 2025. medium.com.

5. Y Combinator partners, Lightcone Podcast, referenced in Micro SaaS Bytes, June 2025. medium.com.
