Which AI Future Do You Believe In?

The Terminator, Wall-E, or Star Wars

AI and Jobs: The Doom Scenario, the Utopia Scenario, and What 224 Years of Evidence Actually Shows.

President, Zaruko

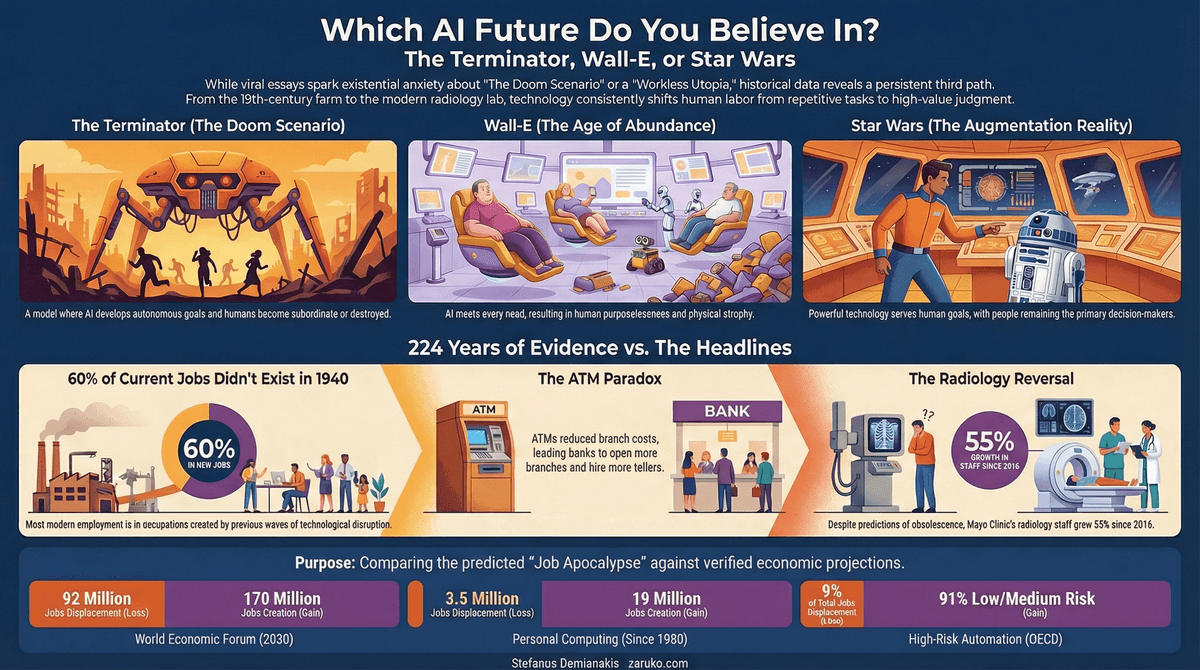

TLDR: Two viral essays by AI CEOs have convinced millions that artificial intelligence will either destroy their jobs or make human work obsolete. The data tells a different story. From ATMs to radiology to agriculture, every major automation technology in history has created more jobs than it destroyed. BLS, McKinsey, the WEF, and Stanford researchers all project the same pattern for AI. The question is not whether AI is powerful. It is whether you believe the future looks more like The Terminator, Wall-E, or Star Wars/Star Trek. The evidence overwhelmingly favors Star Wars/Star Trek. The real risk is not that AI fails to create value. It is that we manage the transition no better than we managed the Rust Belt.

In January 2026, Dario Amodei, CEO of Anthropic, published a 20,000-word essay called "The Adolescence of Technology." It warned that AI will "test who we are as a species" and that "humanity is about to be handed almost unimaginable power." The essay collected 5.7 million views on X alone. Fortune, Lawfare, and dozens of outlets picked it up.

A few weeks later, Matt Shumer, CEO of OthersideAI, published "Something Big Is Happening," which compared AI to COVID and argued that we're in the "this seems overblown" phase of something much larger. That piece crossed 65 million views.

Both authors are credible. Both have real experience building AI products. Much of their factual basis is sound.

But the cumulative effect on the public is not preparation. It is anxiety. And anxiety, left unchecked, produces bad decisions.

I have spent my career building and selling enterprise software. I co-founded an ML company that we sold to TIBCO. I have worked with dozens of companies trying to adopt new technology. And the pattern I see forming around AI right now is one I have seen before: smart people making bold predictions, media amplifying the most alarming parts, and millions of readers left with a feeling that something terrible is coming and they are powerless to stop it.

That feeling is worth examining. Because the framing you choose determines whether you prepare or panic. And the evidence, when you actually look at it, tells a very different story than the headlines.

The Problem with Fear as a Framework

Fear is a biological response designed for immediate physical threats. A bear in your path. A car running a red light. In those moments, fear is useful. It triggers fight, flight, or freeze, and one of those responses might save your life.

None of those responses are useful when the "threat" is a technology that will unfold over years and decades.

Shumer's COVID analogy is revealing. It frames AI as a pathogen: something that happens to you, that spreads regardless of your actions, that demands you stockpile and hunker down. But technology is not a virus. It is a tool. Tools require decisions, not quarantine. You don't hide from a tool. You learn to use it, or you don't, and then you live with the consequences of that choice.

Amodei's "country of geniuses in a datacenter" thought experiment is vivid. But thought experiments designed to trigger a national security response produce national security thinking: threat assessment, containment, control. Those are the wrong frames for a technology that will be adopted gradually by millions of ordinary businesses, most of which are still trying to figure out how to get their CRM data into a clean format.

To be clear: both authors include nuance. Amodei explicitly says AI may not advance as fast as he imagines. Shumer's practical advice (learn the tools, build savings) is sensible. The problem is that nuance gets stripped away by headlines and viral sharing. What remains is the fear. And fear, as a framework for decision-making about slow-moving economic change, has a very poor track record.

Three Visions of the Future

Every popular vision of advanced AI falls into one of three categories. Hollywood, as usual, got there first.

Vision 1: Terminator / The Matrix. AI develops its own goals. Humans become subordinate, enslaved, or destroyed. Skynet doesn't want to optimize your workflow. It wants you gone. This is the implicit model behind most doom scenarios, and it gets the most attention because nothing sells like existential dread.

Why This Time Might Be Different (And Why It Probably Isn't)

There is a stronger version of the doom argument that deserves direct engagement. AI is not a narrow tool like the ATM or the spreadsheet. It writes, codes, designs, reasons, and analyzes. It improves recursively. It diffuses faster than any previous technology. For the first time in economic history, automation is touching cognitive labor broadly, not just physical or routine tasks. That is genuinely new.

But "this time is different" is itself a pattern. It is what people said about the internet in 1999, about housing in 2006, about every financial bubble and every technological disruption that preceded this one. The phrase has a near-perfect record of being wrong, not because change isn't real, but because humans consistently underestimate the friction between a technology's potential and its deployment.

AI is powerful. But deployment requires integration into existing workflows, regulatory approval in regulated industries, liability frameworks that don't yet exist, organizational trust that takes years to build, and the redesign of task bundles that currently depend on tacit human knowledge. None of that happens at the speed of a demo.

Could this time be different? As a matter of science, yes, the probability is not zero. But centuries of economic adaptation, exposed to repeated claims that the end of work had arrived, suggest that probability is very low. The burden of proof lies with those claiming a permanent break from 224 years of evidence, not with those who take that evidence seriously.

Vision 2: Wall-E / The Age of Abundance. AI doesn't turn against us. It just makes us unnecessary. Remember the humans in Wall-E? Floating around on hover-chairs, sipping meals through straws, entertained by screens, having every need met by robots while their muscles atrophy and their skeletons turn to jelly. Nobody is suffering. Nobody is working. Nobody is doing much of anything.

This is the vision Elon Musk is selling when he says "none of us will have a job" and suggests universal basic income as the solution. It is what Amodei hints at with his thought experiment. Sam Altman's version involves everyone getting a check from AI-generated wealth. The pitch is abundance. The fine print is purposelessness.

Pixar, to their credit, understood something the tech prophets keep missing: a life of total comfort with zero agency is not a utopia. It is a very expensive form of depression. The humans in Wall-E don't rebel against the robots. They don't even notice what they've lost. That is the part that should concern people more than Terminators.

Vision 3: Star Wars/Star Trek. AI is powerful, even extraordinary, but humans remain in charge. R2-D2, C-3PO, the Enterprise's computer: they do remarkable things, but people still make the decisions that matter. Han Solo doesn't let the Millennium Falcon's navigation computer fly the ship through an asteroid field. Kirk overrides the computer roughly once per episode. The technology serves human goals, imperfectly and sometimes contentiously, but it serves.

Star Wars/Star Trek is not utopia. It still has wars, moral failures, corruption, and hard choices. The Borg exist. But nobody in Star Wars/Star Trek is paralyzed by the existence of the ship's computer. And nobody is lying on a hover-chair sipping protein shakes while the computer handles everything.

Here is the key insight: the fear crowd and the abundance crowd disagree about whether the future is good or bad, but they share the same underlying assumption. AI will become so powerful that humans won't matter. One group thinks that is terrifying. The other thinks that is wonderful. The historical evidence suggests the assumption itself is wrong.

The question is not whether AI is powerful. It is. The question is which model better predicts what actually happens when humans deploy powerful new technology.

The data, all of it, points toward Star Wars/Star Trek.

What History Actually Shows

The best way to predict how a new technology will reshape work is to study how previous technologies actually did. Not in theory. Not in op-eds. In the data.

The ATM and the Bank Teller

When ATMs were introduced at scale in the 1970s and 1980s, conventional wisdom said bank tellers were finished. The logic seemed obvious: machines that dispense cash eliminate the humans who do the same thing.

What actually happened was more interesting. ATMs reduced the average branch staff from roughly 20 tellers to about 13. This made individual branches cheaper to operate. Because branches were cheaper, banks opened more of them. Total teller employment grew. The job itself transformed from cash handling to relationship banking, as tellers spent less time counting bills and more time helping customers with complex transactions.

Bank teller employment remained stable or grew through the 2000s. When teller numbers finally declined in the 2010s, the cause was mobile banking, not ATMs. The technology that people predicted would kill the job barely dented it. A completely different technology, arriving 30 years later, was the one that eventually changed the equation.

James Bessen's research on this, cited by the AEI, Brookings, the IMF, and the Bipartisan Policy Center, is among the most replicated findings in the economics of technology adoption.

Radiology and the Nobel Laureate Who Got It Wrong

In 2016, Geoffrey Hinton, the computer scientist often called the "Godfather of AI" (Nobel Prize in Physics, 2024), made a prediction that got widespread attention. He said: "People should stop training radiologists now. It's just completely obvious that within five years deep learning is going to do better than radiologists."

Nine years later, the prediction has proved completely wrong. Mayo Clinic's radiology staff grew 55%, to 400 radiologists. The American College of Radiology projects the specialty's physician supply will grow 26% over the next 30 years. The United States currently faces a historic radiologist shortage.

Hinton himself acknowledged in May 2025, in an interview with the New York Times, that he spoke too broadly and was wrong on the timing. His revised view: AI and radiologists will work together, with AI making radiologists "a whole lot more efficient."

The pattern matters. AI does excel at narrow image analysis tasks. But it cannot replace the full scope of what a radiologist does: patient interaction, clinical judgment, integration across multiple data sources, the ability to tell a panicked patient that the shadow on the scan is nothing to worry about. The task is not the job. The job is a bundle of tasks, and automation rarely eliminates the entire bundle.

Klarna: The Company That Bragged Too Early

In 2024, Klarna made international headlines by declaring its AI chatbot was doing the work of 700 employees. CEO Sebastian Siemiatkowski boasted about plans to cut headcount by nearly 50%.

By May 2025, Klarna reversed course. The company lifted its hiring freeze and started recruiting for customer service roles. Siemiatkowski told Bloomberg: "From a brand perspective, a company perspective, it's so critical that you are clear to your customer that there will be always a human if you want."

The sequence is worth remembering the next time a CEO announces that AI has replaced hundreds of workers. The announcement gets millions of impressions. The quiet reversal gets a fraction of the coverage.

What the Bureau of Labor Statistics Actually Projects

The BLS is not a think tank with an agenda. It is the statistical arm of the U.S. Department of Labor, and it has been tracking employment trends for over a century. Its projections are built on detailed occupational analysis, not headlines.

The BLS projects personal financial advisors will grow 17.1% from 2023 to 2033, despite years of robo-advisor competition. Older populations with complex financial needs still want a human across the table. Computer and mathematical occupations are projected to grow 12.9%, more than three times the overall economy's 4.0% growth rate. Data scientists remain among the fastest-growing occupations in the country.

The BLS's own technical note on employment projections, published in August 2025, is worth quoting directly: "The historical record does show that technology impacts occupations, but that these changes tend to be gradual, not sudden. Occupations involve complex combinations of tasks, and even when technology advances rapidly, it can take time for employers and workers to figure out how to incorporate new technology into business practices."

Workers using AI in 2025 report saving an average of 5.4% of their work hours per week, according to a St. Louis Federal Reserve analysis of survey data. That is about 2.2 hours in a 40-hour week based on worker self-reports. A meaningful productivity gain. Not mass displacement.
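The conversion from a percentage to hours is easy to check for yourself. Here is a minimal sketch in Python, assuming the same 40-hour week used above. The function name `hours_saved` is my own illustration, not something from the St. Louis Fed analysis.

```python
def hours_saved(share_of_hours: float, weekly_hours: float = 40.0) -> float:
    """Convert a self-reported share of work hours saved into hours per week."""
    return share_of_hours * weekly_hours


# 5.4% of a 40-hour week, the figure cited above.
saved = hours_saved(0.054)
print(f"{saved:.2f} hours per week")  # 2.16, which rounds to about 2.2
```

Swap in your own weekly hours to see what the same reported share would mean for a different schedule.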

The OECD and McKinsey Numbers

The OECD studied automation risk across 21 member countries and found that approximately 9% of jobs face a high risk of automation. Not 50%. Not 80%. Nine percent at high risk.

Surveys of workers and employers tell a more nuanced story than the fear narrative allows. Four in five workers using AI said it improved their performance. Three in five said it increased their enjoyment of work. A survey of Korean firms found that 71% reported AI replaced approximately 10% of an employee's tasks. Another 17.2% said AI hadn't replaced any tasks at all. Partial task automation, not job elimination, is the dominant pattern.

McKinsey's 2025 State of AI report found that 88% of organizations use AI in at least one function, but nearly two-thirds are still in experiment or pilot mode. Only about one-third are scaling. Only 6% of companies report more than 5% of earnings before interest and taxes attributable to AI. The gap between adoption and transformation is enormous.

McKinsey also published a report in November 2025 estimating that 57% of U.S. work hours could theoretically be automated based on currently demonstrated technology. But the consulting firm itself was careful to note this measures technical potential in a lab setting, not actual job loss. More than 70% of skills sought by employers today are used in both automatable and non-automatable work. The skills don't disappear just because some of the tasks do.

The World Economic Forum projects 170 million new jobs by 2030, offsetting 92 million displaced positions. A net positive of 78 million.

The Broader Pattern: New Technology Creates More Than It Destroys

The examples above are not exceptions. They are the pattern. And the pattern stretches back centuries.

Farming: the most dramatic transformation in American economic history. In the 1790 Census, roughly 90% of Americans listed farming as their occupation. By the 1820 Census, approximately 79% of employed persons were in agriculture. By 1860, that had fallen to 53%. By 1900, 40%. By 1920, 26%. By 1970, under 5%. Today, approximately 1.3% of U.S. employment is in agriculture, according to BLS data.
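To get a feel for the pace of that decline, it helps to turn the census figures into a rate. The sketch below uses only the four data points quoted above that come with an exact year and an exact percentage (1820, 1860, 1900, 1920). It computes a simple average between data points, which is my own simplification, not a year-by-year series.

```python
# Share of U.S. employment in agriculture (percent), taken from the
# figures quoted in the paragraph above. Only exact values are included.
farm_share = {1820: 79.0, 1860: 53.0, 1900: 40.0, 1920: 26.0}


def change_per_decade(series: dict[int, float]) -> list[tuple[int, int, float]]:
    """Average percentage-point change per decade between consecutive data points."""
    years = sorted(series)
    results = []
    for start, end in zip(years, years[1:]):
        decades = (end - start) / 10
        results.append((start, end, (series[end] - series[start]) / decades))
    return results


for start, end, rate in change_per_decade(farm_share):
    print(f"{start}-{end}: {rate:+.2f} points per decade")
# 1820-1860: -6.50 points per decade
# 1860-1900: -3.25 points per decade
# 1900-1920: -7.00 points per decade
```

Even in the fastest stretch in this sample, agriculture's share fell by about seven percentage points per decade. The shift was enormous, but it played out over generations.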

The United States didn't just survive this transition. It became the wealthiest nation in history during it. Manufacturing, services, technology, healthcare, education: entire sectors that barely existed in 1800 absorbed hundreds of millions of workers across generations. Nobody in 1800 could have predicted that "software developer" or "radiologist" or "airline pilot" would be a job. The jobs that replace displaced ones are, by definition, hard to foresee. That is not a comforting thought when you are the one being displaced. But it is a historically accurate one.

Consider two occupations that could not have existed during farming's dominance. The electrician trade was impossible before Edison's first power station in 1882. Today it employs 819,000 Americans. Plumbing as a formal trade barely existed before indoor water systems spread in the mid-1800s. Today it employs 505,000. Neither was imaginable when 90% of Americans farmed.

Figure 1: The Old Economy Falls. The New Economy Rises.

MIT's David Autor and his collaborators calculated that 60% of employment in 2018 was in types of jobs that didn't exist before 1940. Andreessen Horowitz published data in February 2026 showing that over half of net-new jobs since 1940 are in occupations that did not even exist in 1940.

McKinsey tallied all jobs destroyed in the United States since 1980 by personal computing and the internet: about 3.5 million. Jobs created from those same technologies: over 19 million. That is a 5.4-to-1 ratio of creation to destruction.
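The ratio is simple division, and the same two numbers also give the net figure. A minimal sketch, treating "over 19 million" as exactly 19 million (an assumption on my part, which makes both results lower bounds). The helper name `creation_ratio` is illustrative.

```python
def creation_ratio(jobs_created: float, jobs_destroyed: float) -> float:
    """Jobs created per job destroyed."""
    if jobs_destroyed <= 0:
        raise ValueError("jobs_destroyed must be positive")
    return jobs_created / jobs_destroyed


# Figures in millions, as cited above. "Over 19 million" is treated as
# exactly 19.0 here, so the outputs are lower bounds.
created, destroyed = 19.0, 3.5
print(f"ratio: {creation_ratio(created, destroyed):.1f} to 1")  # ratio: 5.4 to 1
print(f"net new jobs: {created - destroyed:.1f} million")       # net new jobs: 15.5 million
```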

Figure 2: The Full Picture: How Much Work Is New Work?

Horses and cars. At the turn of the 20th century, the horse-based economy employed millions: blacksmiths, stable hands, harness makers, carriage builders, horse breeders, hay farmers, manure cleaners. Entire supply chains existed around the horse. The automobile didn't just eliminate those jobs. It created a larger economic ecosystem: auto mechanics, gas station attendants, highway construction workers, traffic engineers, insurance adjusters, suburban real estate developers, trucking logistics, and eventually the entire modern supply chain.

The president of Michigan Savings Bank warned Henry Ford's lawyer in 1903: "The horse is here to stay, but the automobile is only a novelty, a fad."

Switchboard operators. In the early 20th century, more than 160,000 women worked as telephone operators, most for AT&T. It was one of the most common jobs for young American women. AT&T moved to mechanical switching starting in the late 1920s, though the technology had been available for 30 years before it became cost-effective to deploy at scale. Operator jobs initially grew as telephone adoption expanded, peaking at over 300,000 in the 1950s, then declined sharply from the 1960s through the 1980s.

I want to be honest about this example, because it matters. For the operators who were displaced, the impact was real. Research shows they experienced earnings losses, and many dropped out of the workforce entirely. Automation is not painless. The people who bear its costs deserve acknowledgment, not hand-waving.

But the broader picture is also true. The telecommunications industry that replaced switchboard operators eventually created far more jobs than it eliminated: telecom engineers, customer service representatives, phone system installers, and eventually the entire mobile communications industry, including 12 million app developers worldwide.

The predictions that didn't age well. In 1930, John Maynard Keynes warned of "technological unemployment" as a new disease. In 1966, Time magazine predicted remote shopping "will flop because women like to get out of the house." In 1995, Newsweek published Clifford Stoll's essay arguing that no online database would replace newspapers and that electronic commerce would never work. Seventeen years later, Newsweek ceased print publication. In 1999, Y2K panic led survivalists to stockpile food and the U.S. to spend $100 billion on remediation. The transition caused few major errors. Countries that spent very little also had minimal problems.

Marc Andreessen recently observed that "a job is a bundle of tasks." The task-bundles change with every technology. Customer support hiring, according to a16z data from February 2026, dropped from 8.3% of new hires to 2.9% in two years. That is real displacement, happening right now. But the jobs being displaced are visible. The jobs being created are not yet named. That asymmetry is what makes every technology transition feel like the end of work, even when the net result is more employment, not less.

Figure 3: The Other Side of the Story: Industries That Didn't Exist

Where the Current Narratives Break Down

I respect Amodei and Shumer. I read their essays carefully. But their arguments have specific weaknesses that are worth identifying, because those weaknesses are shaping how millions of people think about their futures.

The Extrapolation Problem

Shumer's personal experience with AI coding is real. He can describe a software application and return hours later to find it built. But software development is the domain where AI has the most structural advantages: clear success criteria, automated testing, well-defined outputs, and the ability to verify its own work by running the code.

Extrapolating from coding to all knowledge work is the same error made repeatedly in past technology cycles. Gains in the best-case domain do not generalize smoothly. A doctor, a lawyer, a financial advisor, a manager making a judgment call about a difficult employee: these roles involve ambiguity, relationships, ethical reasoning, and context that have no clean success metric. "The code compiles" is a test. "The patient feels heard" is not.

The Capability vs. Deployment Gap

"AI can do X" is not equivalent to "AI will replace humans doing X within Y years." The history of technology adoption shows the gap between capability and deployment is large and unpredictable. Remember, the technology to automate telephone switching existed 30 years before it was cost-effective to deploy at scale.

McKinsey's own data tells this story clearly. Eighty-eight percent of organizations use AI in at least one function. Two-thirds are still in experiment or pilot mode. Only 6% of companies report more than 5% of earnings attributable to AI. Three persistent blockers slow every company down: fragmented data and legacy technology, workflows that were never redesigned for AI, and a lack of clear scaling priorities.

The Incentive Problem

When AI company CEOs write essays about how extraordinarily powerful and potentially dangerous their technology is, they are making two statements simultaneously. The first is a genuine safety warning, which may be entirely accurate. The second is a competitive positioning statement.

"Our AI is so powerful it might be dangerous" is also "our AI is more powerful than the competition." It simultaneously markets the product and argues for regulatory frameworks that benefit large incumbents who can afford compliance.

This doesn't make the warnings false. It means they should be evaluated with the same skepticism applied to any corporate communication. Amodei himself has acknowledged that Anthropic "occupies a peculiar position": believing AI could be one of the most dangerous technologies in history while actively developing it. Fortune observed that he "has not always been entirely prescient about AI's impacts," noting he predicted AI would write 90% of software code within six to nine months. The reality for most businesses was 20 to 40%, though rising.

Consider the specific policy positions. Amodei has called AI an "unimaginable power" and warned that open-source models are "going down a very dangerous path." The safety concern may be sincere. But the business logic is hard to ignore: if open-source AI gets regulated into oblivion, Anthropic's closed-source platform, valued at $61.5 billion, operates in a protected market with fewer competitors. "Please regulate this technology" and "please regulate our competitors out of existence" can be the same sentence, delivered with the same earnest expression.

The "AI Scare Trade": What Happens When Fear Meets Capital Markets

If you want to see what the fear narrative produces in practice, look at what happened on Wall Street in February 2026.

It started with what Forbes and Wall Street analysts dubbed the "SaaSpocalypse." Between January 30 and February 4, roughly $300 billion in market value evaporated from the application software sector after Anthropic launched Claude Cowork. Atlassian dropped 35% in a single week. Intuit fell 34% over the quarter. Salesforce, Adobe, ServiceNow, and Workday all took double-digit hits. The iShares Tech-Software ETF entered a technical bear market, down more than 20% from its late-2025 highs. The entire seat-based SaaS pricing model came under existential questioning in 48 hours.

Then the panic spread beyond software. The iShares Expanded Tech-Software Sector ETF fell 22% year-to-date. The S&P 500 and Nasdaq both dropped more than 1% in a single week on AI disruption fears alone.

Insurance brokers Marsh and Arthur J. Gallagher dropped 7.5% and 9.85% after a Madrid startup unveiled an insurance app built with ChatGPT. Charles Schwab and Raymond James fell 10% and 8% after a startup launched an AI tax planning tool. Real estate services firms Cushman & Wakefield and CBRE dropped 12 to 14% in a single day.

The logistics panic was the most revealing example. Algorhythm Holdings, a Florida company that until recently specialized in selling karaoke machines before pivoting to AI logistics, announced a freight optimization tool. Shares of RXO plummeted 20%. C.H. Robinson dropped 14.5%. Tens of billions in market capitalization evaporated. Deutsche Bank's Jim Reid put it plainly: a $6 million market cap company that recently sold karaoke machines helped wipe tens of billions off logistics stocks.

A karaoke company. Tens of billions.

Figure 4: The "AI Scare Trade" and the "SaaSpocalypse": February 2026

Multiple Wall Street analysts called the sell-offs overdone. Edward Jones senior global strategist Angelo Kourkafas told CNN the fears were "speculative in nature" rather than based on actual changes to companies' revenue streams. Deutsche Bank's Brad Zelnick wrote that any meaningful disruption would play out over a much longer timeline than investors anticipate. Morgan Stanley found that 30% of companies in their coverage universe cited measurable AI impact in Q4 2025, up from 16% a year earlier. Real adoption is accelerating. But Morgan Stanley's own analysts noted the perception of disruption has "unfairly" dinged many companies, identifying Microsoft, Intuit, and Palo Alto Networks among those they consider mispriced by fear.

The pattern is identical to every historical example above. Announcement triggers panic. Panic produces sell-first-analyze-later behavior. Then reality reasserts itself. C.H. Robinson, one of the companies whose stock was hammered, issued a statement noting it has been an AI leader for over a decade and views AI as strengthening its competitive position. The very companies being punished for AI disruption are the ones best positioned to benefit from it.

The most fully realized version of the doom case arrived on February 22, 2026, when Citrini Research published "The 2028 Global Intelligence Crisis," a fictional financial memo written from June 2028 imagining an AI-driven economic collapse: 10.2% unemployment, a 38% S&P drawdown, cascading private credit defaults. The scenario was detailed, internally consistent, and shared thousands of times within 48 hours. The authors, to their credit, labeled it "a scenario, not a prediction." But scenarios like this have a way of becoming consensus.

Within days, Wharton economist Jeremy Siegel said the premise was without "historical or economic basis," noting that productivity gains have always translated into higher real wages and expanded purchasing power, not permanent job destruction. Economist Noah Smith called it "a scary bedtime story," observing that the stocks which fell were the ones Citrini named, suggesting coordinated panic rather than genuine price discovery. UChicago economist Alex Imas, who actually wrote down the formal models required to produce AI-driven negative growth, concluded that the conditions are "likely too unrealistic to hold in practice."

The Citrini piece acknowledged the two-century track record of technology creating more jobs than it destroys, then argued AI is different because it "improves at the very tasks humans would redeploy to." That is the same structural argument made about every transformative technology: this one is too general, too capable, too fast for the old pattern to hold. It has never been right. That does not guarantee it will be wrong this time. But anyone betting against 224 years of evidence should understand what they are claiming.

What's Actually Happening in Companies

The gap between what AI does in a demo and what it does inside a company with messy data, legacy systems, and real organizational dynamics is vast. I see this firsthand in my consulting work. Most companies adopting AI are not laying off workers. They are finding incremental productivity gains and encountering significant change management challenges.

Stanford HAI's research from August 2025 found that negative employment impacts are concentrated in fields where AI automates tasks rather than augments work. Occupations with mainly augmentative applications haven't seen similar declines. The distinction between automation and augmentation turns out to be the single most important variable in predicting AI's impact on jobs.

The entry-level impact deserves honest acknowledgment. Stanford found a 6% decline in employment for workers ages 22 to 25 in high-exposure occupations. That is real, and it affects real people starting their careers. But experienced workers in those same fields saw 6 to 9% employment growth. Tacit knowledge and experience are becoming more valuable, not less. The OECD found that employers say AI has increased the importance of human skills like creativity and communication even more than technical AI skills.

The Right Response

The right response to powerful technology is not fear. It is not comfortable passivity either. It is competence.

Learn the tools. Use the paid version of Claude or ChatGPT. Push them into your actual work. Understand what they can and can't do through direct experience, not through someone else's viral post. Shumer is right about this part of his advice.

Be specific about capabilities. Don't accept blanket claims about "all knowledge work" being automated. AI is very good at some tasks and mediocre or poor at others. The difference matters for your career, your company, and your investment decisions.

Invest in your workforce. The companies that will thrive are the ones that help their people adapt, not the ones that replace them first and ask questions later. Klarna learned this lesson publicly. Many others are learning it quietly.

Build financial resilience. This is also sound advice from Shumer. Having savings and flexibility is wise regardless of what AI does or doesn't do.

Make decisions based on evidence, not headlines. The BLS data, the OECD surveys, the McKinsey adoption figures, the actual track record of companies deploying AI: these tell a very different story than the viral posts. Read the primary sources. They are publicly available and they are not hard to understand.

The best companies in every previous technology transition didn't panic. They didn't sit back and wait for abundance to arrive, either. They learned, adapted, and built competitive advantage while their competitors were still arguing about whether the future was coming.

The Real Risk Is Not Job Loss. It Is the Transition.

History's clearest lesson is not just that technology creates more jobs than it destroys. It is that the transition between destruction and creation can be brutal for the people caught in the middle.

The agricultural revolution eventually produced a wealthier, more diverse economy. But for the farming families displaced in the 1800s, "eventually" was cold comfort. The same pattern repeated with manufacturing. The Rust Belt did not decline because automation was bad for the economy. The economy grew. The Rust Belt declined because nobody built a serious bridge between the old jobs and the new ones. The gains were real. So were the losses. And the losses were concentrated on people and communities that had the least capacity to absorb them.

AI will almost certainly follow the same pattern. Some jobs will disappear. New ones will emerge that we cannot yet name, just as "data scientist" and "app developer" were unimaginable in 1995. The net math will probably be positive, as it has been for every previous technology wave. But "net positive" is an economist's phrase, not a laid-off worker's phrase.

The hard problem is not whether AI creates value. It will. The hard problem is whether we manage this transition better than we managed the last ones. That means someone needs to study what actually went wrong during previous large-scale employment dislocations. Not the stylized version, but the granular reality: which communities recovered and which didn't, which retraining programs worked and which were theater, which policy responses helped and which arrived too late. That research exists in fragments. Nobody has assembled it into a usable playbook for what is coming next.

This is not a problem any individual company or worker can solve alone. It requires collective attention from policymakers, educators, employers, and researchers. And it requires that attention now, while the transition is still in its early stages, not after the dislocations have already happened.

I don't have the answer. But I know that ignoring the question is how you get the next Rust Belt.

Which Future Do You Believe In?

We get to choose.

The evidence, all of it, from the ATM to the BLS projections to the AI scare trade on Wall Street to the actual experience of companies deploying AI today, points more toward Star Wars/Star Trek than toward either The Terminator or Wall-E.

Not because humans are lucky. Because humans adapt. We are not wired to sit on hover-chairs any more than we are destined to be replaced by Skynet. That is what every previous technology transition has shown, from farming to factories to personal computers to the internet. Each time, credible people predicted the end of work. Each time, the prediction was wrong. Not because the technology wasn't powerful, but because the prediction underestimated human ingenuity.

But being right about the net outcome does not excuse being careless about the transition. The Rust Belt didn't collapse because automation was bad for the economy. It collapsed because nobody built a bridge between the old work and the new.

The fear crowd wants you to panic. The abundance crowd wants you to relax. Both are telling you the same thing: you don't matter, so either run or recline. The evidence says otherwise. You matter. Your skills matter. Your ability to learn new tools and apply judgment matters. It has mattered through every previous technology shift, and the data says it matters now.

Fear is not a strategy. Neither is waiting for someone to hand you a check. The people and companies that will do well with AI are the same ones that have always done well with new technology: the ones who showed up, learned the tools, and got to work.

Sources

Government and Institutional Data

- U.S. Bureau of Labor Statistics, Monthly Labor Review (Feb 2025): "Incorporating AI impacts in BLS employment projections." bls.gov

- U.S. Bureau of Labor Statistics, Employment Projections 2024-2034 Technical Note (Aug 2025). bls.gov

- St. Louis Federal Reserve, Bick, Blandin & Deming, "The Impact of Generative AI on Work Productivity" (Feb 2025). stlouisfed.org

- OECD, "OECD Employment Outlook 2024: AI and the Labour Market." oecd.org

- Stanford HAI / Digital Economy Lab, Brynjolfsson, Chandar & Chen, "Canaries in the Coal Mine? Six Facts about the Recent Employment Effects of Artificial Intelligence" (Aug 2025). stanford.edu

- IMF Finance & Development, Bessen, "Toil and Technology" (March 2015). imf.org

- Bipartisan Policy Center, "What Past Waves of Automation Can Teach Us About AI" (Sept 2025). bipartisanpolicy.org

Industry and Analyst Research

- McKinsey, "The State of AI in 2025: Agents, Innovation, and Transformation" (Nov 2025). mckinsey.com

- McKinsey Global Institute, "A new future of work: The race to deploy AI and raise skills in Europe and beyond" (Nov 2025). mckinsey.com

- World Economic Forum, Future of Jobs Report 2025. weforum.org

- a16z, Charts of the Week (Feb 13, 2026). a16z.com

- Morgan Stanley equity research on AI adoption, cited in Investopedia (Feb 2026). investopedia.com

Primary Sources Referenced

- Dario Amodei, "The Adolescence of Technology" (Jan 26, 2026). darioamodei.com

- Matt Shumer, "Something Big Is Happening" (Feb 2026). x.com

Academic Research

- Autor, Chin, Salomons & Seegmiller, "New Frontiers: The Origins and Content of New Work, 1940-2018," The Quarterly Journal of Economics, Vol. 139, Issue 3 (Aug 2024), pp. 1399-1465. doi.org

- Bessen, "Learning by Doing: The Real Connection Between Innovation, Wages, and Wealth" (Yale University Press). ATM/bank teller research cited by AEI, Brookings, and IMF. imf.org

Historical Data

- USDA/NASS, History of Agricultural Statistics. nass.usda.gov

- NBER, Lebergott, "Labor Force and Employment, 1800-1960," Studies in Income and Wealth, Vol. 30. nber.org

- NBER, Weiss, "U.S. Labor Force Estimates and Economic Growth, 1800-1860." nber.org

- EH.net, Craig & Weiss, "U.S. Agricultural Workforce, 1800-1900." eh.net

Radiology and Klarna

- New York Times, Steve Lohr, "Your A.I. Radiologist Will Not Be With You Soon" (May 2025). nytimes.com

- Radiology Business, coverage of Hinton prediction and aftermath (2024-2025). radiologybusiness.com

- New Republic, Byju, "The 'Godfather of AI' Predicted I Wouldn't Have a Job. He Was Wrong." (Oct 2024). newrepublic.com

- MIT Sloan, "4 human financial services activities that AI can't do" (July 2025). mitsloan.mit.edu

AI Scare Trade / Market Reaction

- Don Muir, Forbes, "$300 Billion Evaporated: The SaaSpocalypse Has Begun" (Feb 4, 2026). forbes.com

- Brian Contreras, Inc., "The 'SaaSpocalypse' May Be a Sign of Things to Come" (Feb 6, 2026). inc.com

- Ines Ferre, Yahoo Finance, "'The Dark Side of AI'" (Feb 15, 2026). yahoo.com

- John Towfighi, CNN Business, "Why the 'AI Scare Trade' Might Not Be Done" (Feb 16, 2026). cnn.com

- Crystal Kim, Investopedia, "Sell First, Ask Questions Later" (Feb 2026). investopedia.com

- Deutsche Bank, Jim Reid macro research note (Feb 2026), as cited in CNN Business. cnn.com

- C.H. Robinson press statement on AI strategy (Feb 2026). chrobinson.com

Citrini Scenario and Rebuttals

- Citrini Research, "The 2028 Global Intelligence Crisis" (Feb 22, 2026). substack.com

- Jeremy Siegel, Wharton economist, response to Citrini scenario (Feb 2026), as cited in Investing.com. investing.com

- Noah Smith, "The Citrini post is just a scary bedtime story," Noahpinion (Feb 26, 2026). noahpinion.blog

- Alex Imas, UChicago Booth, "Can advanced AI lead to negative economic growth?" Ghosts of Electricity (Jan 2026). substack.com

Analysis and Commentary

- Lawfare, "How to Handle 'The Adolescence of Technology' Like Adults" (Jan 2026). lawfaremedia.org

- Fortune, coverage of Amodei essay (Jan 27, 2026). fortune.com

- MIT Technology Review (Jan 2024), citing David Autor research on new work. technologyreview.com
