The Orchestration Layer: What It Is, What It Does, and What to Look For

President, Zaruko

In our last post, we made the case that AI agents are bringing point solutions back to the enterprise. The economics have shifted. Focused agents that do one thing well are outperforming the corresponding modules inside platform suites, and orchestration is absorbing the complexity cost that killed best-of-breed strategies a decade ago.

But we left something critical undefined. We said "orchestration" over a dozen times without explaining what it actually is. If you are a business leader evaluating AI agent strategy, that gap matters. You cannot evaluate what you do not understand, and vendors are counting on that ambiguity to sell you things you may not need.

This post fixes that. No jargon. No architecture diagrams for engineers. Just the functional components of an orchestration layer, what each one does, and what to look for when you are evaluating solutions.

What an Orchestration Layer Actually Is

Think of a traditional business process that spans multiple departments. A customer returns a product. Customer service logs the return, the warehouse receives the item, inventory gets updated, accounting processes the refund, and analytics records the reason. In most companies, this process is held together by workflow rules, shared databases, and a lot of human coordination.

Now replace each of those steps with an AI agent. The customer service agent handles the return request. The logistics agent coordinates the pickup. The inventory agent updates stock levels. The finance agent processes the refund. The analytics agent categorizes the return reason and looks for patterns.

The orchestration layer is the system that makes all of these agents work together. It decides which agent handles what. It passes context from one agent to the next. It enforces rules about what each agent is allowed to do. It monitors the whole process and intervenes when something goes wrong.

One distinction matters more than it might seem: an AI agent is not just a model. It is a system built around a model, including the workflows, the memory, the tool connections, the rules, and the coordination logic that turn a language model into something that can actually do work. The model is the reasoning engine. The orchestration layer is what makes that engine useful in a business context. Without it, you have five agents operating in five silos. With it, you have a coordinated system that handles a complex business process end to end.

The Five Core Functions

Every orchestration layer, whether it is built into a platform or assembled from standalone tools, performs five core functions. The quality and maturity of these functions are what separate solutions that work in production from those that stall in pilot.

What may surprise you is that no industry standard exists for these functions. The major platform providers each define orchestration differently, and none offers a unified functional framework. Anthropic publishes design patterns (prompt chaining, routing, parallelization, orchestrator-workers) but explicitly avoids prescribing a layered architecture, suggesting that developers "start by using LLM APIs directly." OpenAI's Agents SDK defines primitives: Agents, Handoffs, Guardrails, Tools, Sessions, and Tracing. These are building blocks you assemble yourself. Google's Agent Development Kit takes a workflow-centric approach with Sequential, Parallel, and Loop agents plus LLM-driven routing. Microsoft's Agent Framework (merging Semantic Kernel and AutoGen) offers orchestration patterns: Concurrent, Sequential, Handoff, and Group Chat. Each is useful. None answers the question a business leader actually needs answered: what functions does the orchestration layer need to perform, regardless of which SDK or platform you choose? The five functions below are our answer to that question.

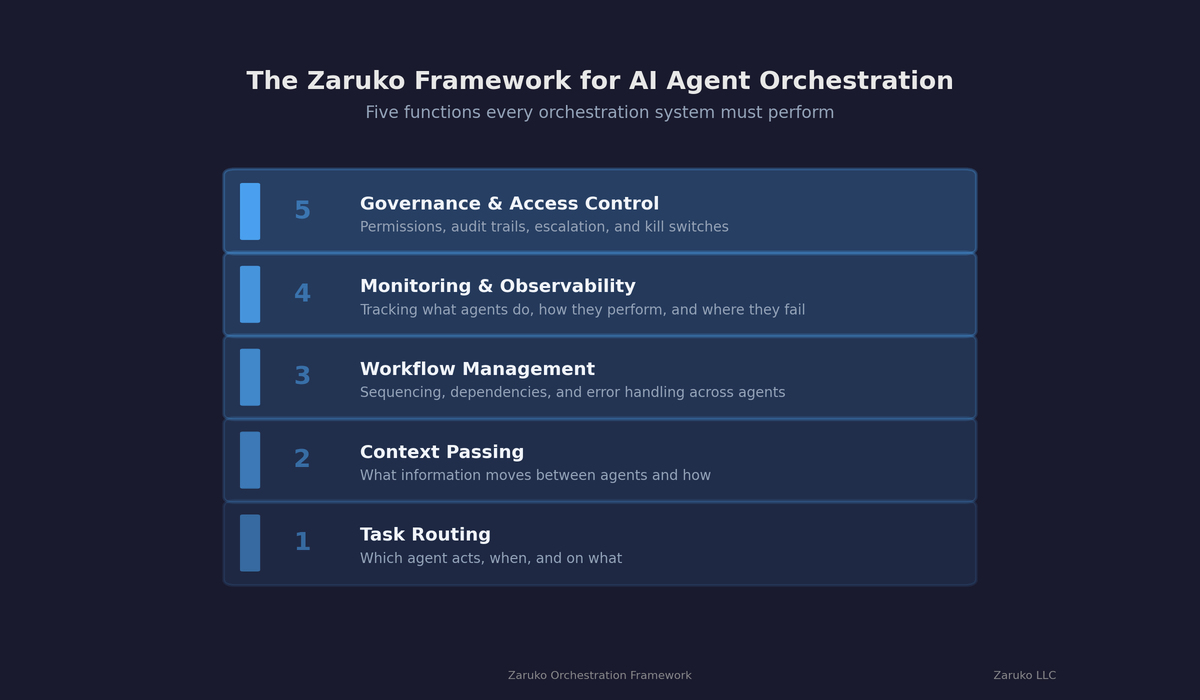

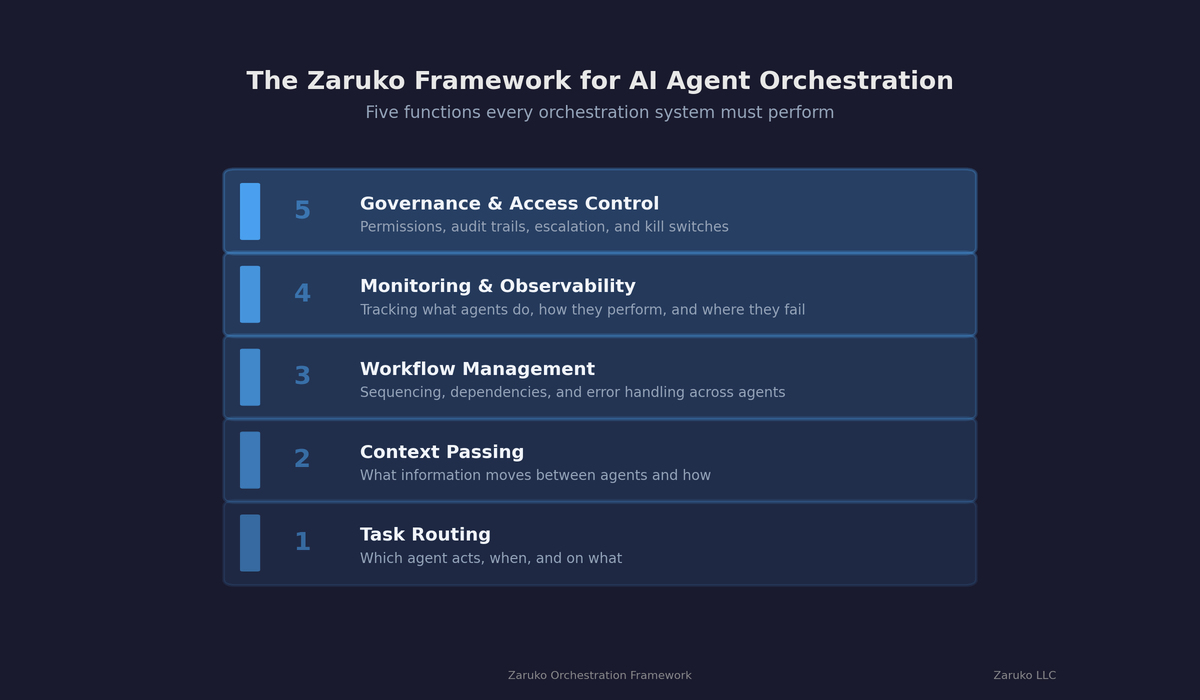

The Zaruko Framework for AI Agent Orchestration. Five core functions every orchestration layer must perform.

Task Routing and Assignment. This is the most basic function: deciding which agent handles which task. In a simple setup, routing is static: the accounts payable (AP) agent always handles invoices, and the support agent always handles tickets. In more sophisticated systems, routing is dynamic: the orchestrator evaluates the incoming task, assesses which agent is best suited based on current workload and capability, and assigns it accordingly. Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025.[1] That surge is driven by organizations realizing that running multiple agents without intelligent routing creates exactly the kind of chaos they were trying to eliminate.

What to look for: Can the system route tasks dynamically based on context, or only through static rules? Can it reassign a task if the primary agent fails or is overloaded?
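For readers who want to see the mechanics, here is a minimal Python sketch of dynamic routing with reassignment. It is an illustration under stated assumptions, not how any particular platform implements routing: the `Agent` fields, the `route` function, and the agent names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    name: str
    skills: set            # task types this agent can handle
    active_tasks: int = 0  # current workload
    capacity: int = 5      # hypothetical limit on concurrent tasks
    healthy: bool = True

    def available(self) -> bool:
        return self.healthy and self.active_tasks < self.capacity


def route(task_type: str, agents: list) -> Optional[Agent]:
    """Assign the task to the least-loaded available agent with the right skill."""
    candidates = [a for a in agents if task_type in a.skills and a.available()]
    if not candidates:
        return None  # no agent can take it: hand off to a human queue
    chosen = min(candidates, key=lambda a: a.active_tasks / a.capacity)
    chosen.active_tasks += 1
    return chosen


agents = [
    Agent("ap-primary", {"invoice"}, active_tasks=5),            # at capacity
    Agent("ap-backup", {"invoice", "expense"}, active_tasks=1),
    Agent("support", {"ticket"}),
]

assigned = route("invoice", agents)
print(assigned.name if assigned else "escalate")  # prints: ap-backup
```

A static router would have sent the invoice to `ap-primary` regardless of load. Here the overloaded primary is skipped and the backup picks up the task, which is the behavior the second evaluation question is probing for.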

Context Passing and Shared Memory. This is where orchestration separates itself from old-school integration. Traditional integrations pass data between systems. Orchestration passes context. The difference is significant. Data is a record. Context is a record plus the reasoning about why it matters.

When an AP agent flags a suspicious invoice and passes it to a contract management agent, it does not just send the invoice data. It sends the anomaly it detected, the pattern it identified, and its confidence level. The contract agent picks up where the AP agent left off rather than starting from scratch. A CIO.com analysis noted that organizations are moving beyond traditional point-to-point integrations toward agent-to-agent communication, creating an abstraction layer that allows faster experimentation.[2]
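The difference between data and context can be sketched as a handoff message. The example below is a hypothetical envelope, not the MCP or A2A wire format: the `Handoff` class, its field names, and the invoice values are assumptions made for illustration.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Handoff:
    from_agent: str
    to_agent: str
    record: dict       # the raw data a traditional integration would send
    finding: str       # the anomaly the sending agent detected
    reasoning: str     # the pattern behind the finding
    confidence: float  # 0.0 to 1.0


handoff = Handoff(
    from_agent="ap-agent",
    to_agent="contract-agent",
    record={"invoice_id": "INV-1042", "vendor": "Acme", "amount": 18500.0},
    finding="amount exceeds the contracted rate",
    reasoning="the last six invoices from this vendor averaged 12,000",
    confidence=0.82,
)

# Serialize for transport, then rebuild on the receiving side.
payload = json.dumps(asdict(handoff))
received = Handoff(**json.loads(payload))
print(received.finding, received.confidence)
```

A point-to-point integration would transmit only `record`. The extra three fields are what let the receiving agent continue the investigation instead of repeating it.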

Two emerging protocols are standardizing how this works. Anthropic's Model Context Protocol (MCP) handles the vertical connection: it is widely used as a standard way for agentic systems to connect to tools, databases, and APIs. Google's Agent-to-Agent Protocol (A2A) handles the horizontal connection: how agents find each other and communicate. MCP has seen explosive growth since its November 2024 launch, with a reported 97 million monthly SDK downloads as of late 2025 and backing from Anthropic, OpenAI, Google, Microsoft, and AWS.[3] A2A, launched by Google with more than 50 industry partners, incorporated IBM's Agent Communication Protocol under the Linux Foundation in 2025.[4] Both are now governed by vendor-neutral foundations.

For business leaders, the protocol details matter less than the principle: you want an orchestration layer that uses open standards, not proprietary connectors. Proprietary connectors are the new vendor lock-in.

What to look for: Does the platform support MCP and A2A? Does it use open standards or proprietary protocols? Can agents share context and reasoning, or just raw data?

Workflow Management and Sequencing. Agents need to execute in the right order, and that order is not always linear. Some tasks run in parallel. Some tasks depend on the output of a previous step. Some workflows need to branch based on what an agent discovers midstream.

The orchestration layer manages this sequencing. It maintains the state of the overall workflow, tracks which steps are complete, handles dependencies, and manages exceptions. When a logistics agent reports that a shipment is delayed, the orchestrator needs to know that the customer notification agent should be triggered immediately, but the billing adjustment agent should wait until the new delivery date is confirmed.

Analysts report that more than half of organizations can handle more volume and complexity when orchestration frameworks are in place.[5] That capacity comes from the workflow engine handling complexity that would otherwise require human project management.

What to look for: Can workflows branch and run in parallel? Does the system maintain state across multi-step processes? How does it handle exceptions and failures mid-workflow?
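The delayed-shipment example can be sketched as a small dependency graph. This is a minimal illustration using Python's standard-library `graphlib`, assuming a workflow with four hypothetical step names. A production workflow engine would also persist state and handle failures, which this sketch does not.

```python
from graphlib import TopologicalSorter

# Each step maps to the set of steps that must finish before it can start.
workflow = {
    "notify_customer": {"shipment_delayed"},
    "confirm_new_date": {"shipment_delayed"},
    "adjust_billing": {"confirm_new_date"},
}

sorter = TopologicalSorter(workflow)
sorter.prepare()

waves = []
while sorter.is_active():
    ready = sorted(sorter.get_ready())  # steps whose dependencies are all complete
    waves.append(ready)                 # everything in one wave can run in parallel
    sorter.done(*ready)                 # mark complete, which unblocks dependents

for number, wave in enumerate(waves, start=1):
    print(number, wave)
# 1 ['shipment_delayed']
# 2 ['confirm_new_date', 'notify_customer']
# 3 ['adjust_billing']
```

The customer notification runs as soon as the delay is reported, in parallel with confirming the new date, while the billing adjustment waits for that confirmation.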

Monitoring, Observability, and Telemetry. You cannot manage what you cannot see. When agents operate autonomously, you need real-time visibility into what they are doing, why they are doing it, and whether it is working.

This means logging every agent action, tracking the reasoning behind decisions, measuring performance against expected outcomes, and surfacing anomalies. The Cloud Security Alliance introduced the MAESTRO framework specifically for this purpose: a seven-layer approach to governing multi-agent environments that includes centralized logging, agent telemetry dashboards, and real-time visibility into which agent did what and why.[6]

Deloitte predicts that in 2026, the most advanced businesses will begin shifting toward "human-on-the-loop" orchestration, where humans monitor dashboards and intervene on exceptions rather than approving every action.[7] That shift is only possible if the monitoring layer is thorough and trustworthy.

What to look for: Does the platform provide a single dashboard across all agents? Can you trace the full chain of actions and reasoning for any outcome? Does it alert on anomalies or only report after the fact?
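Tracing "which agent did what and why" comes down to tagging every action with a shared identifier. The sketch below is a simplified, in-memory illustration, not the MAESTRO framework or any vendor's telemetry API: `TraceEvent`, `TraceLog`, and the trace IDs are hypothetical.

```python
import time
from dataclasses import dataclass, field


@dataclass
class TraceEvent:
    trace_id: str   # ties the event to one business outcome
    agent: str
    action: str
    reasoning: str  # why the agent took the action
    timestamp: float = field(default_factory=time.time)


class TraceLog:
    def __init__(self):
        self.events = []

    def record(self, trace_id, agent, action, reasoning):
        self.events.append(TraceEvent(trace_id, agent, action, reasoning))

    def chain(self, trace_id):
        """Return every recorded action for one outcome, in the order it happened."""
        return [e for e in self.events if e.trace_id == trace_id]


log = TraceLog()
log.record("return-881", "support-agent", "approve_return", "within 30-day window")
log.record("order-202", "logistics-agent", "book_pickup", "customer requested pickup")
log.record("return-881", "finance-agent", "issue_refund", "item received at warehouse")

for event in log.chain("return-881"):
    print(event.agent, event.action, "because", event.reasoning)
```

Filtering by `trace_id` reconstructs the full chain for a single return even though events from other workflows are interleaved in the same log.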

Governance and Access Control. We will cover guardrails and human oversight in depth in the next post in this series. But governance is also a core function of the orchestration layer itself. Every orchestration system needs to enforce who can do what.

This includes role-based access control (which agents can access which systems and data), policy enforcement (business rules that constrain agent behavior), and escalation paths (conditions under which an agent must stop and involve a human). Gartner predicts that guardian agents, a new category of agent designed to govern other agents, will capture 10 to 15% of the agentic AI market by 2030.[8]

What to look for: Does the platform support role-based access control for agents? Can you define and enforce business rules centrally? Are escalation paths configurable without engineering support?
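The three governance mechanisms (access control, policy enforcement, and escalation) can be combined in a single authorization check. This is a minimal sketch under assumptions: the permission table, the `authorize` function, and the refund limit are hypothetical values chosen for illustration.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"


# Role-based access control: which agent may perform which actions.
PERMISSIONS = {
    "finance-agent": {"issue_refund", "read_ledger"},
    "support-agent": {"read_order", "open_ticket"},
}

# Policy: refunds above this amount need a human (hypothetical threshold).
REFUND_APPROVAL_LIMIT = 500.00


def authorize(agent: str, action: str, amount: float = 0.0) -> Decision:
    if action not in PERMISSIONS.get(agent, set()):
        return Decision.DENY      # the agent has no permission for this action
    if action == "issue_refund" and amount > REFUND_APPROVAL_LIMIT:
        return Decision.ESCALATE  # permitted, but a human must approve
    return Decision.ALLOW


print(authorize("finance-agent", "issue_refund", 120.00))   # Decision.ALLOW
print(authorize("finance-agent", "issue_refund", 2400.00))  # Decision.ESCALATE
print(authorize("support-agent", "issue_refund", 120.00))   # Decision.DENY
```

Keeping the permission table and the threshold as data rather than logic is what makes rules centrally definable and escalation paths configurable without engineering support.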

Platform Orchestration vs. Standalone Orchestration

The five functions above need to exist regardless of how you deploy agents. But where they live matters for your buying decision.

Platform-native orchestration is what you get from Salesforce Agentforce, Microsoft Copilot Studio, ServiceNow, and similar offerings. The orchestration is built into the platform. It works well within the platform's environment. The trade-off is that it typically does not extend well to agents outside that environment. If your best AP agent comes from a specialized vendor rather than Salesforce, the platform's orchestration layer may not support it, or may support it poorly.

Standalone orchestration comes from dedicated frameworks and platforms built specifically to coordinate agents across vendors and systems. These include open-source options like LangGraph, CrewAI, and AutoGen, as well as commercial platforms. They offer broader interoperability but require more technical investment to deploy and manage.

Hybrid approaches are emerging as a practical middle ground. Use your platform's native orchestration for agents within its environment, and add a standalone layer for cross-platform coordination. Third Stage Consulting's analysis of enterprise tech trends concluded that specialized apps have matured enough to out-innovate suite modules, and that AI and integration technology are reducing the penalty for running multiple systems.[9] The orchestration layer is what makes that penalty manageable.

The Orchestration Market

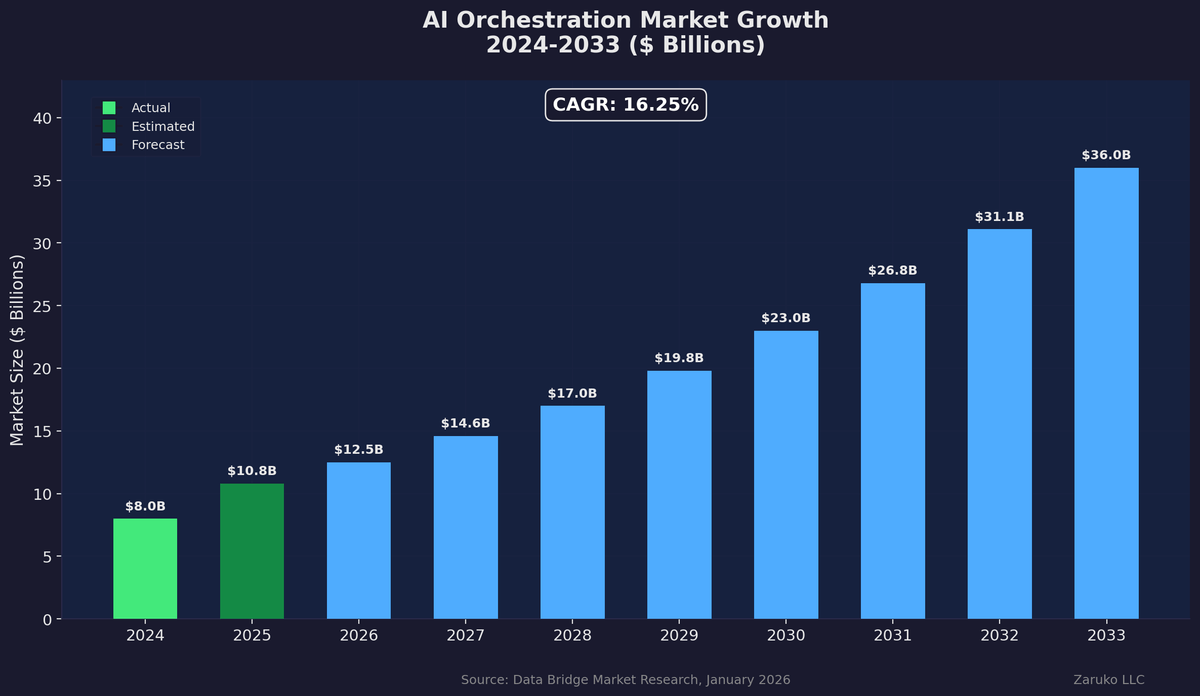

AI orchestration market growth, 2024-2033. Cloud-based orchestration holds 69% market share.

The orchestration market itself is growing fast. It was valued at $10.8 billion in 2025 and is projected to reach $36 billion by 2033, a compound annual growth rate of 16.25%.[10] Cloud-based orchestration dominates, holding 69% market share in 2025, because the ability to scale and deploy rapidly is critical when your agent count is growing.
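As a quick consistency check on those figures, assuming annual compounding over the eight years from 2025 to 2033:

```latex
\[
\$10.8\,\text{B} \times (1 + 0.1625)^{8} \approx \$10.8\,\text{B} \times 3.34 \approx \$36.0\,\text{B}
\]
```

The growth rate and the two endpoint values agree with each other under that assumption.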

More than 80% of organizations now believe that AI agents are the new enterprise apps, triggering a reconsideration of investments in packaged applications.[11] That reconsideration is fundamentally an orchestration question. Baseline agent capabilities are commoditizing faster than enterprise integration and governance. The orchestration of those agents is where competitive advantage will live.

One important caveat for anyone evaluating orchestration solutions today: this space is evolving fast. The leading open-source frameworks have gone through three generations in three years, moving from simple chaining to workflow orchestration to autonomous tool-calling harnesses. Each generation looked fundamentally different from the last. That pace of change means you should not over-invest in any single orchestration framework as a permanent choice. Choose solutions built on open standards (MCP, A2A) that give you the flexibility to evolve as the tooling matures. The orchestration layer you deploy in 2026 will not look like the one you run in 2028.

The Bottom Line

The orchestration layer is not a nice-to-have add-on. It is the infrastructure that determines whether your AI agent strategy delivers compound value or creates compound chaos. Orchestration is the difference between 10 agents that reduce cycle time and 10 agents that create compliance risk.

This is also the answer to the oldest objection against best-of-breed software: the integration tax. AI orchestration is reducing the complexity cost that made single-vendor suites the safer bet for two decades. The integration tax is not gone, but it is dropping fast, and orchestration is what is driving it down.

When evaluating solutions, focus on the five core functions: task routing, context passing, workflow management, monitoring, and governance. Demand open standards over proprietary connectors. And understand that the choice between platform-native and standalone orchestration is not permanent. You can start with one and evolve to the other as your agent portfolio matures.

In our next post, we will go deeper on guardrails and human oversight, the governance framework that sits on top of the orchestration layer and ensures that autonomous agents remain accountable to the humans and businesses they serve.

Sources

1. Gartner, "Multi-Agent System Inquiries," Q2 2025. Reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025.

2. CIO.com, "ERP in 2026: More AI, More Best-of-Breed Add-Ons," January 2026. Organizations moving toward agent-to-agent communication and abstraction layers. cio.com

3. Anthropic, MCP launch data; Deepak Gupta, "The Complete Guide to Model Context Protocol (MCP)," December 2025. MCP reached 97 million monthly SDK downloads as of late 2025 with backing from Anthropic, OpenAI, Google, Microsoft, and AWS.

4. The Register, "Deciphering the Alphabet Soup of Agentic AI Protocols," January 2026; Linux Foundation A2A Project announcement, June 2025. Google launched A2A with 50+ industry partners; IBM's Agent Communication Protocol incorporated under the Linux Foundation.

5. Industry analyst estimates citing Forrester research on orchestration adoption. More than half of organizations report handling more volume and complexity with orchestration frameworks in place.

6. CIO.com, "Taming Agent Sprawl: 3 Pillars of AI Orchestration," February 2026; Cloud Security Alliance, MAESTRO framework. Seven-layer approach to governing multi-agent environments.

7. Deloitte, "Unlocking Exponential Value with AI Agent Orchestration," November 2025. Predicts most advanced businesses will shift toward "human-on-the-loop" orchestration in 2026. deloitte.com

8. Gartner, "Guardian Agents Will Capture 10-15% of the Agentic AI Market by 2030," June 2025. Guardian agents as a new category designed to govern other agents.

9. Third Stage Consulting, "Top 10 Enterprise Tech Trends for 2026," December 2025. Specialized apps have matured enough to out-innovate suite modules; AI and integration technology reducing the penalty for multiple systems. thirdstage-consulting.com

10. Data Bridge Market Research, "Global AI Orchestration Market Report," January 2026. AI orchestration market valued at $10.8B in 2025, projected $36B by 2033 at 16.25% CAGR.

11. IDC, "Future Enterprise Resiliency & Spending (FERS) Survey," 2025. More than 80% of organizations believe AI agents are the new enterprise apps.
