Agent Collaboration: Where the Value Lives, Where the Risk Hides

President, Zaruko

This is the final post in our series on AI agents in the enterprise. In AI Agents Are Bringing Back Point Solutions, we made the case that AI agents are reversing the logic of platform consolidation. In The Orchestration Layer, we explained what coordinates them. In Guardrails and Human Oversight, we covered the governance that keeps multi-agent systems safe.

Now we get to the payoff and the warning.

The payoff: agent collaboration creates compound value that no single platform or individual agent can deliver. The warning: that same collaboration introduces complexity and data security risks that can undo the value if you do not plan for them.

What Makes Agent Collaboration Different

We covered this briefly in AI Agents Are Bringing Back Point Solutions, but it is worth expanding because it is the most original and least understood aspect of the AI agent shift.

Traditional software integrations move data between systems. An API call sends invoice data from your AP system to your general ledger. A webhook notifies your CRM when a support ticket is resolved. Data flows, but no reasoning happens at the boundaries.

Agent collaboration is different. When an AP agent flags a suspicious invoice and passes it to a contract management agent, it is not just sending data. It is sharing its analysis: the anomaly it detected, the pattern it recognized, and its confidence level. The contract agent picks up where the AP agent left off, applies its own domain expertise, and passes an enriched result to the next agent in the chain.

This is not integration. It is distributed reasoning across functional boundaries, and it produces outcomes that would otherwise have required cross-departmental teams, multiple meetings, and days of elapsed time. The value comes from that cross-functional reasoning; the risk spikes when those handoffs also cross vendor and system trust boundaries.
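To make the difference from a plain data transfer concrete, here is a minimal sketch of what a handoff record might carry. The `Finding` and `Handoff` classes, their field names, and the sample invoice values are all hypothetical, chosen only to mirror the anomaly, pattern, and confidence level described above; real agent frameworks will structure this differently.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    """One agent's analysis, passed along with the raw data (hypothetical shape)."""
    agent: str         # which agent produced this finding
    anomaly: str       # what it detected
    pattern: str       # the pattern it recognized
    confidence: float  # the agent's own estimate, 0.0 to 1.0


@dataclass
class Handoff:
    """The record that moves between agents: data plus accumulated reasoning."""
    subject: dict
    findings: list[Finding] = field(default_factory=list)

    def enrich(self, finding: Finding) -> "Handoff":
        # Return a new record so each agent's input stays unchanged for auditing.
        return Handoff(self.subject, self.findings + [finding])


# Illustrative values only.
invoice = Handoff(subject={"invoice_id": "INV-1001", "amount": 48_500})
after_ap = invoice.enrich(
    Finding("ap", "amount above vendor norm", "repeat overbilling", 0.82)
)
after_contract = after_ap.enrich(
    Finding("contract", "rate exceeds agreed cap", "contract violation", 0.91)
)

for f in after_contract.findings:
    print(f.agent, f.anomaly, f.confidence)
```

The point of the sketch is that the receiving agent sees the prior agent's reasoning, not just the invoice. That is also why the record deserves the same scrutiny as any other sensitive payload.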

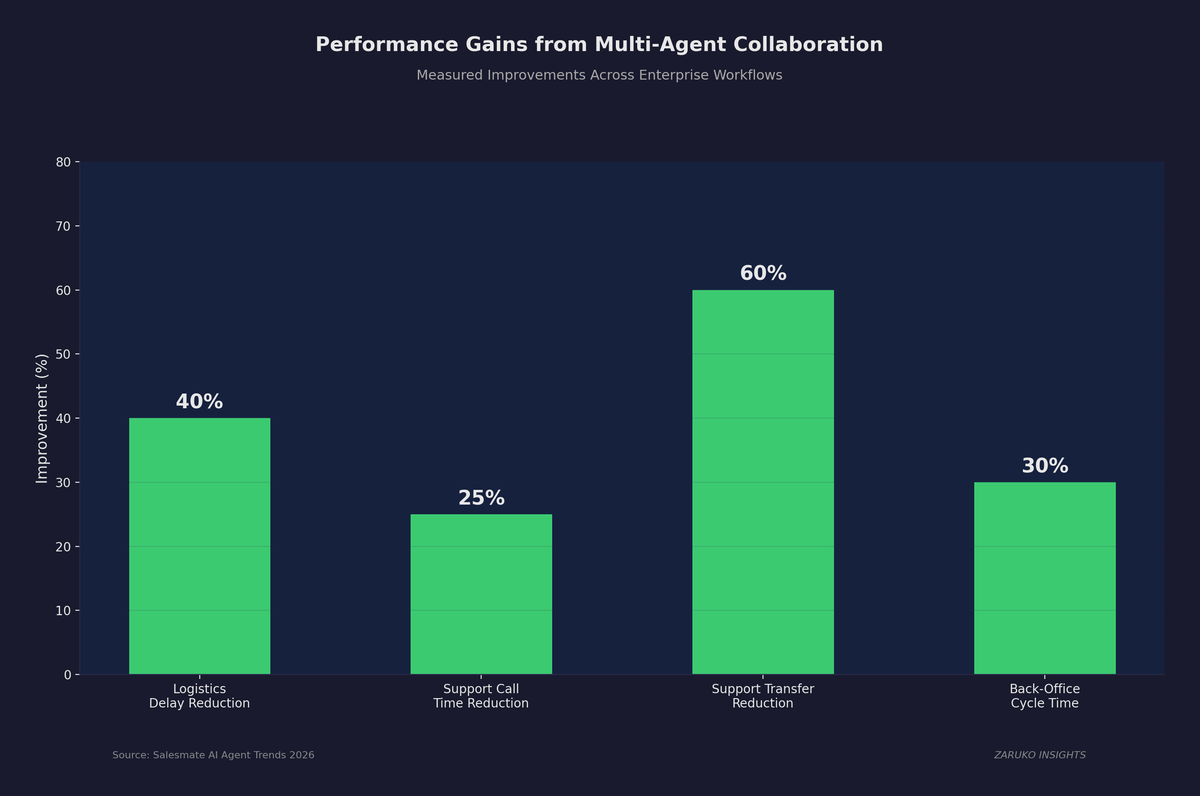

The early evidence supports the value claim. Vendor and practitioner reports suggest logistics teams using coordinated multi-agent systems have reduced delays by up to 40%, customer support organizations have cut call times by nearly 25% and transfers by up to 60%, and early adopters are seeing 20 to 30% faster workflow cycles with significant cost reductions in back-office operations.1

Where Compound Value Actually Emerges

Agent collaboration is most valuable in three specific patterns. Understanding these patterns helps you identify where to invest and where to hold off.

Cross-functional investigation

This is the finance example from AI Agents Are Bringing Back Point Solutions. An AP agent detects an anomaly. A contract agent checks the vendor agreement. A spend analytics agent pulls market comparables. Three agents, three domains, one coordinated investigation. The value here is speed and completeness. A human team would eventually reach the same conclusion, but it would take days instead of minutes and would require someone to recognize the problem, assign the work, and coordinate the handoffs.

Predictive chain reactions

A workforce planning agent notices attrition accelerating in engineering. A compensation benchmarking agent identifies that salaries have fallen below market. A recruiting agent adjusts job posting priority and salary ranges. The value here is not just speed. It is the ability to detect a problem, diagnose the root cause, and begin corrective action before a human even recognizes the pattern exists.

Continuous monitoring with escalation

A customer service agent detects a spike in complaints about late deliveries. A logistics agent traces the issue to a specific distribution center. A procurement agent checks carrier SLA compliance. The value here is that the monitoring never stops. Human teams sample and review. Agent networks can monitor every transaction, every interaction, every data point continuously and escalate only when the pattern warrants attention.

Accenture research found that companies with highly interoperable applications grew revenues approximately six times faster than their non-interoperable peers, capturing more than five points of incremental annual growth.2 The link to agents is an inference, not a measured result: if application interoperability is associated with roughly 6x faster revenue growth, then agent interoperability, which is a form of application interoperability, could plausibly deliver similar benefits. Agent collaboration is the next evolution of that interoperability advantage.

Vendor and practitioner reports show measurable gains across logistics, support, and back-office workflows when agents collaborate across functional boundaries.

The Complexity Trap

Here is where the warning starts.

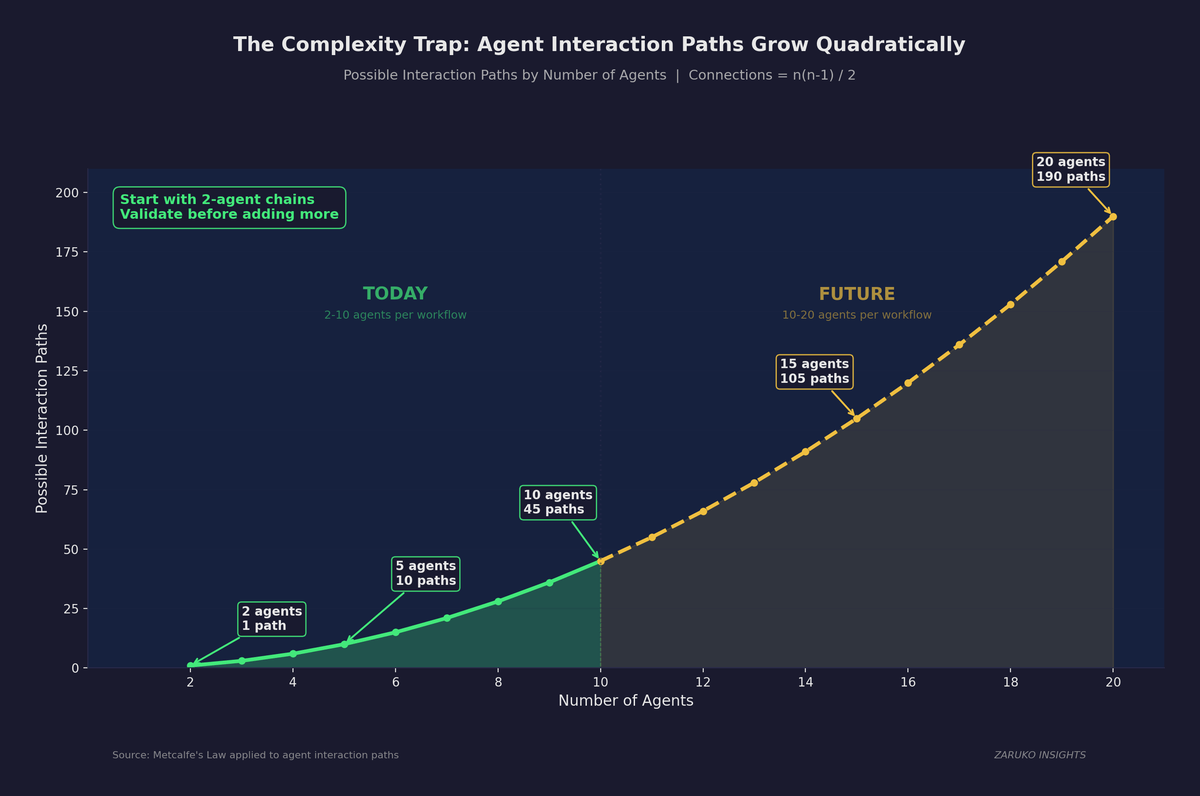

Every agent you add to a multi-agent system increases complexity in ways that are not linear. Two agents have one interaction path. Three agents have three. Five agents have ten. Ten agents have forty-five. This is the same math behind Metcalfe's Law, the principle that explains why networks become non-linearly more valuable (and more complex) as they grow. The number of unique pairwise connections equals n(n-1) / 2, where n is the number of agents. The growth is quadratic, meaning complexity increases with the square of the agent count, not in proportion to it.
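The pairwise-connection formula above is easy to check directly. This short sketch computes n(n-1)/2 for the agent counts mentioned in the text, plus one larger count (20) added here purely for illustration; the function name `interaction_paths` is our own.

```python
def interaction_paths(n: int) -> int:
    """Unique pairwise connections among n agents: n(n-1)/2."""
    if n < 0:
        raise ValueError("agent count cannot be negative")
    return n * (n - 1) // 2


for n in (2, 3, 5, 10, 20):
    print(f"{n} agents -> {interaction_paths(n)} interaction paths")
# 2 -> 1, 3 -> 3, 5 -> 10, 10 -> 45, 20 -> 190
```

Doubling from 10 agents to 20 roughly quadruples the paths, which is the quadratic growth in practice. Note that this counts only potential pairs; a well-designed orchestration layer will allow far fewer actual paths.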

Agent interaction paths grow quadratically. Most enterprise workflows today use 2 to 10 agents, but complexity escalates fast beyond that range.

This matters because debugging a multi-agent failure is fundamentally harder than debugging a single-agent failure. When the outcome is wrong, the problem could be in any agent, in the context passing between agents, in the routing logic, or in the interaction between two agents that were never designed to work together.

Gartner's projection that 40% of agentic AI projects could be canceled by 2027 is partly about this complexity.3 Organizations that deploy agents one at a time, without planning for how those agents will interact, will hit a wall when the interaction effects between agents start producing unexpected results.

How to keep complexity manageable:

Start with two-agent chains before building larger networks. Validate that context passing works correctly between pairs of agents before adding a third. This is tedious, but it prevents the cascading failures that kill multi-agent deployments.

Define clear boundaries for every agent. An agent should have a well-defined scope, well-defined inputs, and well-defined outputs. If you cannot describe in one sentence what an agent does and what it passes to the next agent, the scope is too broad.

Invest in observability before you invest in more agents. The monitoring and telemetry layer from The Orchestration Layer is not optional. You need to see every action, every context handoff, and every decision in real time. Teams commonly rely on tracing tools, guardrails, offline evaluations, and real-time testing to maintain control.4 These are not overhead. They are the infrastructure that makes scale possible.

Measure orchestration efficiency, not agent count. The CIO.com analysis from The Orchestration Layer introduced a useful metric: orchestration efficiency, defined as the ratio of successful multi-agent tasks completed to total compute cost.5 This is a better measure of progress than how many agents you have deployed.
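As a rough illustration of the orchestration efficiency metric, the sketch below computes the ratio as defined above. The units (tasks per dollar of compute) and the quarterly figures are assumptions made for the example; the source defines only the ratio itself.

```python
def orchestration_efficiency(successful_tasks: int, total_compute_cost: float) -> float:
    """Successful multi-agent tasks completed per unit of compute cost."""
    if total_compute_cost <= 0:
        raise ValueError("total_compute_cost must be positive")
    return successful_tasks / total_compute_cost


# Hypothetical figures: more agents and more completed tasks, but higher cost.
last_quarter = orchestration_efficiency(successful_tasks=400, total_compute_cost=1000.0)
this_quarter = orchestration_efficiency(successful_tasks=450, total_compute_cost=1500.0)

print(f"last quarter: {last_quarter:.2f} tasks per dollar")
print(f"this quarter: {this_quarter:.2f} tasks per dollar")
```

In this made-up case, throughput went up while efficiency went down, which is exactly the signal that agent count alone would hide.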

The Data Security Problem

This is the risk that most organizations are not yet thinking about, and it may be the most consequential.

When agents collaborate across functional boundaries, they pass data between systems and vendors. An AP agent built by Vendor A passes invoice analysis to a contract management agent built by Vendor B, which shares its findings with a spend analytics agent built by Vendor C. Each handoff is a potential data exposure point.

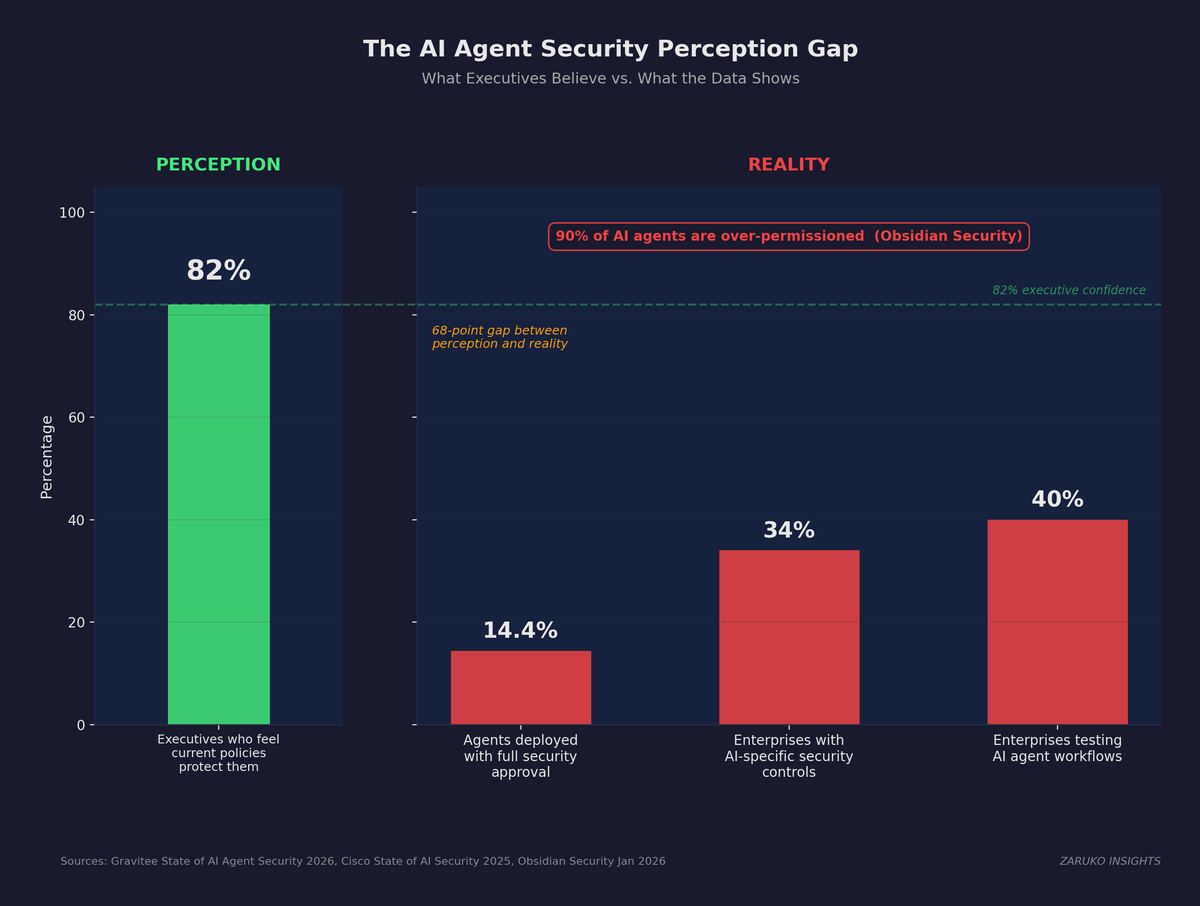

The numbers paint a stark picture. A Gravitee survey of over 900 executives and practitioners found that only 14.4% of organizations report that all AI agents go live with full security and IT approval.6 Meanwhile, 82% of executives feel confident their existing policies protect them from unauthorized agent actions. That gap between perception and reality is where breaches happen.

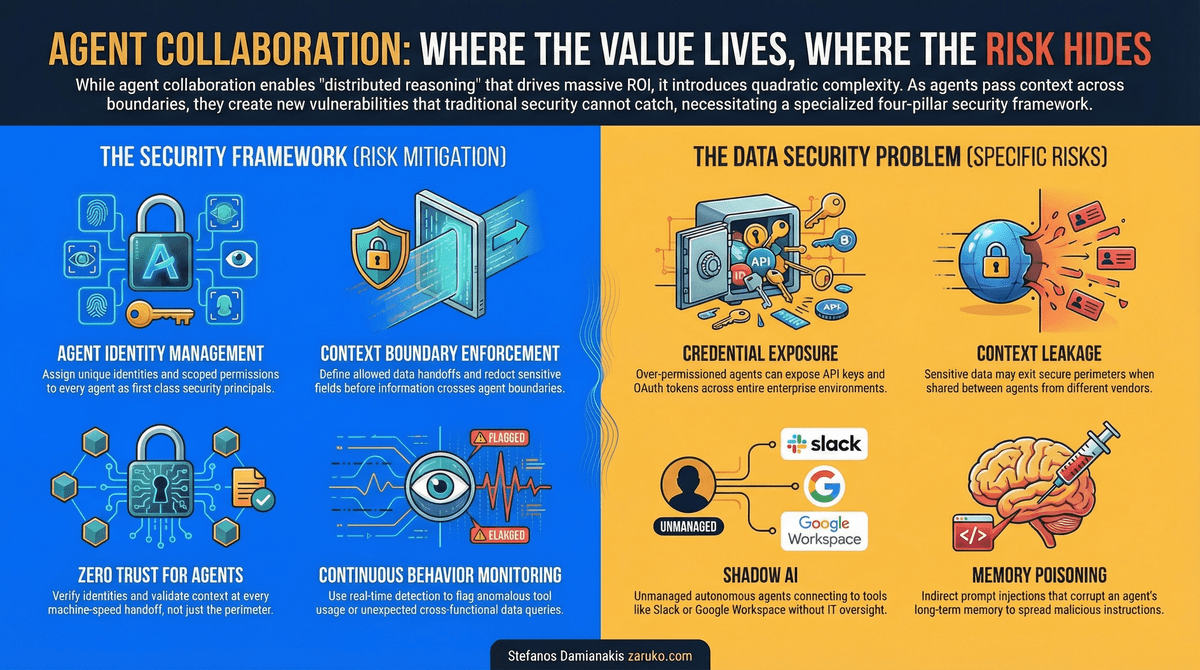

82% of executives believe their policies protect them, but only 14.4% of organizations deploy agents with full security approval. The 68-point gap is where breaches happen.

The Specific Risks

Credential exposure

AI agents hold API keys, OAuth tokens, and access credentials. Obsidian Security found that 90% of agents are over-permissioned, holding far more privileges than required for their function.7 A compromised agent does not just expose one system. It exposes every system that agent can access. In August 2025, a supply chain attack on Salesloft's Drift chatbot integration gave attackers access to the Salesforce environments of more than 700 organizations, by Obsidian Security's estimate. The threat actor, tracked as UNC6395, used stolen OAuth tokens to systematically export customer data, credentials, and API keys over a ten-day window before the tokens were revoked.8
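One simple way to look for over-permissioned agents is to compare the scopes each agent has been granted with the scopes it has actually used. The sketch below assumes you can export both sets from your identity provider and logs; the agent names, scope strings, and the `inventory` structure are hypothetical.

```python
def unused_scopes(granted: set[str], used: set[str]) -> set[str]:
    """Scopes an agent holds but has not exercised in the review window."""
    return granted - used


# Hypothetical inventory: scopes granted vs. scopes seen in the agent's logs.
inventory = {
    "ap-agent": {
        "granted": {"invoices:read", "invoices:write", "hr:read", "crm:export"},
        "used": {"invoices:read", "invoices:write"},
    },
    "support-agent": {
        "granted": {"tickets:read", "tickets:write"},
        "used": {"tickets:read", "tickets:write"},
    },
}

for name, scopes in inventory.items():
    extra = unused_scopes(scopes["granted"], scopes["used"])
    if extra:
        print(f"{name}: candidate scopes to revoke -> {sorted(extra)}")
```

An unused scope is only a candidate for removal, since some permissions are legitimately exercised rarely. A review window that is too short will produce false positives.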

Context leakage

The same context passing that makes agent collaboration valuable also creates data security risk. When an HR agent shares attrition data with a compensation agent, that data leaves the HR system's security perimeter. When a finance agent shares margin analysis with a procurement agent, that analysis may cross vendor boundaries. Every context handoff needs to be evaluated for data sensitivity, and the permissions for what data can be shared between agents need to be as carefully designed as the permissions for what data agents can access.
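A minimal sketch of sharing permissions between agents, assuming a default-deny allowlist keyed by sender and receiver. The agent names, field names, and the `ALLOWED_FIELDS` table are illustrative only and would come from your own data classification.

```python
# Hypothetical policy: which context fields may pass from one agent to another.
ALLOWED_FIELDS = {
    ("hr", "compensation"): {"department", "attrition_rate"},
    ("finance", "procurement"): {"vendor_id", "spend_total"},
}


def filter_context(sender: str, receiver: str, context: dict) -> dict:
    """Keep only fields the policy allows for this sender/receiver pair."""
    # Default deny: an unlisted pair gets an empty allowlist.
    allowed = ALLOWED_FIELDS.get((sender, receiver), set())
    return {key: value for key, value in context.items() if key in allowed}


hr_context = {
    "department": "engineering",
    "attrition_rate": 0.18,
    "employee_names": ["(redacted in this example)"],
    "exit_interview_notes": "(free text)",
}

print(filter_context("hr", "compensation", hr_context))
print(filter_context("hr", "procurement", hr_context))  # no policy entry, so nothing passes
```

Filtering at the handoff, rather than trusting the receiving agent to ignore what it should not see, keeps the decision in one auditable place.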

Shadow AI

The OpenClaw exposure in early 2026 revealed what happens when employees connect autonomous agents to corporate systems without IT oversight. Researchers discovered tens of thousands of exposed instances (Censys counted 21,639 as of January 31, 2026), with agents connected to Slack, Google Workspace, and other enterprise tools.9 This is the agentic version of shadow IT, and it is harder to detect because the agents can operate quietly in the background.

Memory poisoning

This is an emerging risk. Palo Alto Networks' Unit 42 demonstrated that attackers can inject false instructions into an agent's long-term memory through indirect prompt injection. In their proof of concept, a malicious webpage corrupted an agent's session memory, and those instructions persisted across future sessions without the user's knowledge.10 In a multi-agent system, a single poisoned agent can corrupt the context it passes to downstream agents, spreading bad data through the entire chain.

A Practical Framework for Data Security in Multi-Agent Systems

The security model for multi-agent systems needs four elements that go beyond traditional application security.

Agent identity management. Every agent needs a unique identity with defined permissions, just like every human employee. Treat agents as first-class security principals, not extensions of the human who deployed them. This means unique credentials, defined access scopes, and activity logging tied to the agent identity.

Context boundary enforcement. Define what data can cross which boundaries. Not every agent needs to see every piece of context from the previous agent in the chain. Strip sensitive fields before passing context. Encrypt data in transit between agents. Log every context handoff.

Zero trust for agents. Assume every agent interaction could be compromised. Verify identity, check permissions, and validate context at every handoff, not just at the system perimeter. Traditional firewalls and role-based access control were designed for human users operating through known interfaces. Agents operate at machine speed through APIs, and they need security controls that operate inside their decision loop.

Continuous monitoring for anomalous agent behavior. Normal agent behavior follows predictable patterns. An AP agent that suddenly starts querying employee salary data is anomalous. A customer service agent that starts accessing financial systems is anomalous. Detection systems need to learn normal patterns and flag deviations in real time.
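The simplest possible version of this kind of detection is a baseline of which tools each agent has used historically, with anything outside that baseline flagged for review. The sketch below assumes a log of (agent, tool) pairs; the agent and tool names are hypothetical, and a production system would also consider frequency, timing, and data volume.

```python
from collections import defaultdict


def build_baseline(history: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Record the set of tools each agent used during a known-good period."""
    baseline: dict[str, set[str]] = defaultdict(set)
    for agent, tool in history:
        baseline[agent].add(tool)
    return dict(baseline)


def flag_anomalies(
    baseline: dict[str, set[str]], events: list[tuple[str, str]]
) -> list[tuple[str, str]]:
    """Return events where an agent used a tool outside its baseline."""
    return [(agent, tool) for agent, tool in events if tool not in baseline.get(agent, set())]


# Hypothetical log data.
history = [
    ("ap-agent", "invoice_db"),
    ("ap-agent", "vendor_api"),
    ("support-agent", "ticket_system"),
]
new_events = [
    ("ap-agent", "invoice_db"),
    ("ap-agent", "salary_db"),           # AP agent querying salary data
    ("support-agent", "finance_system"),  # support agent touching finance
]

for agent, tool in flag_anomalies(build_baseline(history), new_events):
    print(f"ALERT: {agent} used unexpected tool {tool}")
```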

Where to start Monday morning: Inventory every agent and its permissions. Enforce per-agent identity with scoped tokens. Redact or transform sensitive fields at every context handoff. Log every agent action and every inter-agent data transfer. Set up anomaly detection on agent tool usage patterns.
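For the per-agent identity and handoff verification steps, one possible approach is to have each agent sign the context it sends so the receiver can check both who sent it and that it was not altered in transit. The sketch below uses Python's standard `hmac` module with per-agent shared keys. The key values and agent names are placeholders, and this is one technique among several (mutual TLS or signed tokens are common alternatives), not a prescribed design.

```python
import hashlib
import hmac
import json

# Placeholder keys for illustration; real keys would live in a secrets manager.
AGENT_KEYS = {
    "ap": b"demo-key-ap",
    "contract": b"demo-key-contract",
}


def _canonical(payload: dict) -> bytes:
    # Sort keys so the same payload always serializes to the same bytes.
    return json.dumps(payload, sort_keys=True).encode("utf-8")


def sign(agent_id: str, payload: dict) -> str:
    """Sign a handoff payload with the sending agent's key."""
    return hmac.new(AGENT_KEYS[agent_id], _canonical(payload), hashlib.sha256).hexdigest()


def verify(agent_id: str, payload: dict, signature: str) -> bool:
    """Check that the payload came from agent_id and was not modified."""
    key = AGENT_KEYS.get(agent_id)
    if key is None:
        return False  # unknown agents are rejected outright
    expected = hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature)


payload = {"invoice_id": "INV-1001", "flag": "suspicious"}
signature = sign("ap", payload)

print(verify("ap", payload, signature))                        # True
print(verify("ap", {**payload, "flag": "clean"}, signature))   # False: payload altered
print(verify("contract", payload, signature))                  # False: wrong sender
```

Signature checks confirm origin and integrity only. They do not tell you whether the content itself is trustworthy, which is why permission checks and anomaly monitoring still apply.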

The Bottom Line

Agent collaboration is where the real value of multi-agent systems lives. The ability to coordinate specialized agents across functional boundaries, producing compound outcomes that no single system could deliver, is the reason the point solution model is viable again.

But that value comes with real costs. Complexity grows with every agent you add. Data security risks multiply with every context handoff. And the governance layer from Guardrails and Human Oversight becomes more critical, not less, as the agent network expands.

The organizations that capture the most value from AI agents will be the ones that treat complexity and security as first-order concerns, not afterthoughts. They will start small, measure carefully, invest in observability, and build governance before they build scale.

The point solution pendulum is swinging back. The orchestration layer makes it possible. Guardrails make it safe. And agent collaboration makes it valuable. But only if you build the system around the agents, not just the agents themselves.

1. Salesmate, "AI Agent Trends for 2026: 7 Shifts to Watch," citing logistics, support, and back-office operations data.

2. Accenture, "Value Untangled: Accelerating Radical Growth Through Interoperability," November 2022. Survey of 4,000+ C-suite executives across 19 industries.

3. Gartner, "Gartner Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027," June 2025.

4. Master of Code Global, "150+ AI Agent Statistics [2026]," February 2026, citing LangChain data on observability tools.

5. CIO.com, "Taming Agent Sprawl: 3 Pillars of AI Orchestration," February 2026.

6. Gravitee, "State of AI Agent Security 2026 Report," February 2026. Survey of 919 executives and practitioners.

7. Obsidian Security, "The 2025 AI Agent Security Landscape," January 2026.

8. Google Threat Intelligence Group (Mandiant), "Widespread Data Theft Targets Salesforce Instances via Salesloft Drift," August 2025.

9. Reco, "AI and Cloud Security Breaches: 2025 Year in Review," December 2025. OpenClaw exposure data corroborated by Censys (21,000+ instances, January 2026).

10. Palo Alto Networks Unit 42, "When AI Remembers Too Much: Persistent Behaviors in Agents' Memory," October 2025.

Planning a multi-agent deployment?

I help mid-market companies design agent collaboration that captures compound value without the complexity blowup. Let's talk.
