Guardrails and Human Oversight: The Governance Layer that Makes AI Agents Safe

President, Zaruko


In our previous post, The Orchestration Layer, we broke down the five core functions that make multiple AI agents work together: task routing, context passing, workflow management, monitoring, and governance.

We deliberately kept that last one brief. Governance deserves its own treatment because it is the function most likely to determine whether your AI agent investment succeeds or fails.

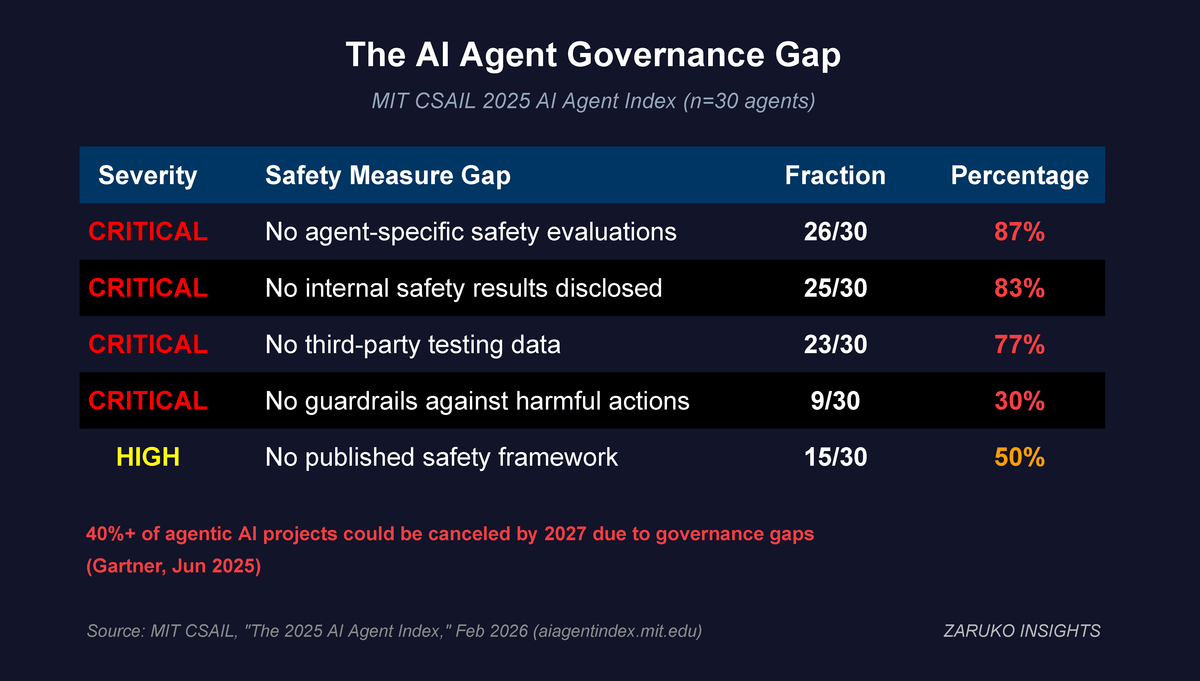

Here is why: Gartner projects that more than 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls.[1] The agents themselves are not the problem. The governance around them is. MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) published its 2025 AI Agent Index in February 2026, examining 30 of the most widely deployed agents. The findings should concern any business leader considering deployment: half of all agents had no published safety framework, nine of 30 had no documented guardrails against potentially harmful actions, and only four provided agent-specific safety evaluations.[2]

This is not a maturity problem that will solve itself. It is a structural gap that organizations need to fill before they scale.

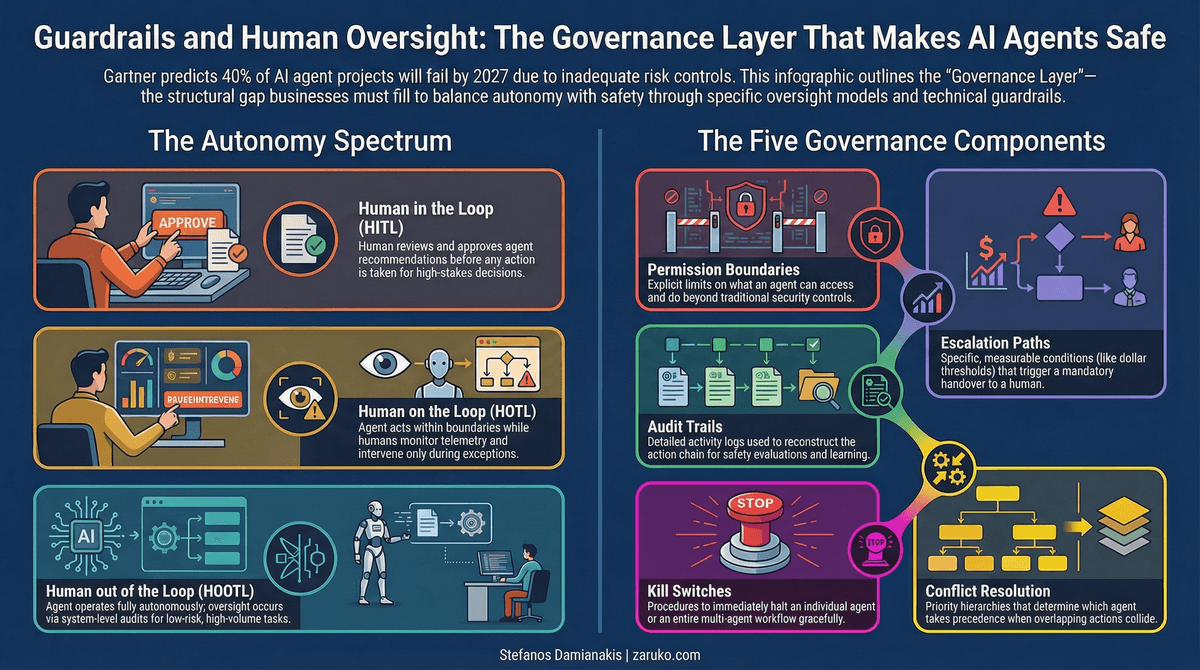

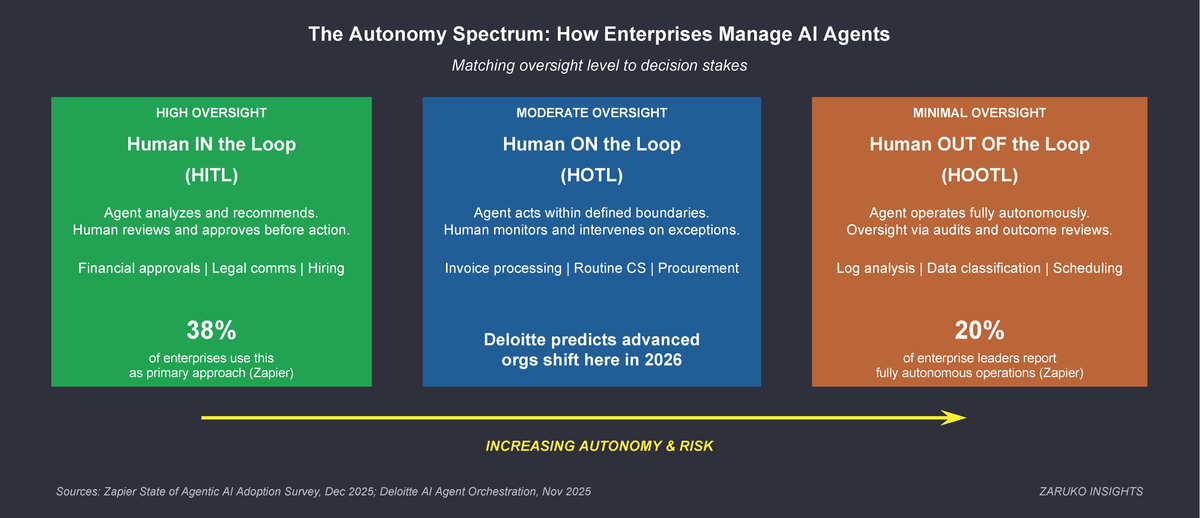

The Autonomy Spectrum

Not every agent needs the same level of oversight. The right governance model depends on what the agent is doing and what is at stake if it gets it wrong.

The autonomy spectrum maps to three models that every business leader should understand.

Human in the loop (HITL)

The agent does the analysis, drafts the recommendation, or prepares the output. A human reviews and approves before anything happens. This is the right model for high-stakes decisions: financial approvals above a threshold, customer communications that could create legal exposure, hiring and compensation changes. The Zapier State of Agentic AI survey found that human-in-the-loop remains the most common approach to agent management, used by 38% of enterprise organizations.[3]

Human on the loop (HOTL)

The agent acts autonomously within defined boundaries. A human monitors the results through dashboards and telemetry and intervenes only when something falls outside expected parameters. This is the right model for medium-stakes operations: invoice processing within normal ranges, routine customer service responses, standard procurement workflows. Deloitte predicts that in 2026, the most advanced organizations will begin shifting toward this model as their monitoring capabilities mature.[4]

Human out of the loop (HOOTL)

The agent operates fully autonomously. No human reviews individual actions. Oversight happens at the system level through audits, outcome analysis, and periodic reviews. This model is appropriate only for well-understood, low-risk, high-volume tasks where the cost of occasional errors is low: log analysis, routine data classification, basic scheduling. Only 20% of enterprise leaders report their AI systems currently operate autonomously with minimal oversight.[3]

The tradeoff is direct: more human oversight means higher labor cost per transaction but lower risk exposure. Less oversight means higher throughput but requires tight task boundaries and tolerance for occasional errors.

The mistake most organizations make is treating all agents the same. Either everything requires human approval (which defeats the purpose of automation) or everything runs autonomously (which creates unacceptable risk). The governance layer needs to enforce different oversight models for different agents and different tasks.

The three oversight models map agent autonomy to human involvement. Higher-stakes decisions require more direct human control.
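To make the per-task distinction concrete, here is a minimal Python sketch of how a governance layer might assign an oversight model to each task rather than to each agent. The stakes labels, the `select_oversight` function, and the task names are hypothetical illustrations, not a standard API; a real policy would weigh far more factors than two inputs.

```python
from enum import Enum


class Oversight(Enum):
    """The three oversight models described above."""
    HITL = "human in the loop"       # human approves before anything happens
    HOTL = "human on the loop"       # agent acts; human monitors and intervenes
    HOOTL = "human out of the loop"  # oversight through audits and reviews only


def select_oversight(stakes: str, well_understood: bool) -> Oversight:
    """Map a task's risk profile to an oversight model.

    `stakes` is a hypothetical label: "high", "medium", or "low".
    """
    if stakes not in {"high", "medium", "low"}:
        raise ValueError(f"unknown stakes level: {stakes!r}")
    if stakes == "high":
        return Oversight.HITL
    if stakes == "low" and well_understood:
        return Oversight.HOOTL
    # Medium stakes, or low stakes that are not yet well understood,
    # get a human watching the results rather than approving each action.
    return Oversight.HOTL


# Hypothetical per-task policy table for a finance workflow.
TASK_POLICY = {
    "approve_payment_over_threshold": select_oversight("high", True),
    "process_invoice_normal_range": select_oversight("medium", True),
    "classify_log_entries": select_oversight("low", True),
}
```

Note the fallback: a low-stakes task that is not yet well understood lands on HOTL, not HOOTL, which reflects the point that full autonomy is reserved for well-understood work.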

The Five Governance Components

Guardrails for multi-agent systems need five components working together. Missing any one of them creates a gap that will eventually produce a failure.

Permission boundaries

Every agent needs clearly defined limits on what it can access and what it can do. This goes beyond traditional role-based access control. An accounts payable (AP) agent should be able to read invoice data and flag anomalies, but it should not be able to approve payments above a certain threshold or access employee compensation data. The Cisco State of AI Security 2025 Report found that only 34% of enterprises have AI-specific security controls in place, and fewer than 40% conduct regular security testing on AI agent workflows.[5] That suggests most organizations are running agents with broader permissions than they need or intend.
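The AP example can be expressed as a deny-by-default permission boundary. This is a minimal sketch under stated assumptions: the `PermissionBoundary` class, the domain and action names, and the 5,000 approval limit are all hypothetical, chosen only to mirror the example in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PermissionBoundary:
    """Hypothetical scope definition for one agent. Anything not listed is denied."""
    readable: frozenset          # data domains the agent may read
    actions: frozenset           # actions the agent may take
    approval_limit: float = 0.0  # largest payment it may approve on its own

    def can_read(self, domain: str) -> bool:
        return domain in self.readable

    def can_act(self, action: str, amount: float = 0.0) -> bool:
        if action not in self.actions:
            return False  # deny by default
        if action == "approve_payment":
            return amount <= self.approval_limit
        return True


# An AP agent that reads invoices and flags anomalies, but can approve
# only small payments and never sees compensation data.
AP_AGENT = PermissionBoundary(
    readable=frozenset({"invoices", "vendor_master"}),
    actions=frozenset({"read_invoice", "flag_anomaly", "approve_payment"}),
    approval_limit=5_000.0,
)
```

The design choice that matters is the default: an action or data domain the boundary does not name is refused, so a new capability has to be granted explicitly rather than discovered after the fact.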

Escalation paths

Every agent needs defined conditions under which it stops acting and involves a human. These conditions should be specific and measurable: dollar thresholds, confidence levels, anomaly scores, exceptions to established patterns. Vague guidelines like "escalate when appropriate" are not escalation paths. They are gaps.
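Here is one way "specific and measurable" might look in practice: each escalation condition is a named, testable rule, and the agent proceeds only if none of them fire. The field names and threshold values below are hypothetical placeholders; each organization would set its own.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    """What an agent wants to do, plus the signals it produced."""
    amount: float          # dollar value of the action
    confidence: float      # agent-reported confidence, 0.0 to 1.0
    anomaly_score: float   # output of some anomaly detector, 0.0 to 1.0
    matches_pattern: bool  # does it fit an established pattern?


# Hypothetical thresholds; each organization sets its own.
MAX_AMOUNT = 10_000.0
MIN_CONFIDENCE = 0.85
MAX_ANOMALY = 0.7


def escalation_reasons(action: ProposedAction) -> list:
    """Return every rule the action trips; an empty list means proceed."""
    reasons = []
    if action.amount > MAX_AMOUNT:
        reasons.append(f"amount {action.amount:,.2f} exceeds {MAX_AMOUNT:,.2f}")
    if action.confidence < MIN_CONFIDENCE:
        reasons.append(f"confidence {action.confidence:.2f} below {MIN_CONFIDENCE:.2f}")
    if action.anomaly_score > MAX_ANOMALY:
        reasons.append(f"anomaly score {action.anomaly_score:.2f} above {MAX_ANOMALY:.2f}")
    if not action.matches_pattern:
        reasons.append("exception to established pattern")
    return reasons
```

Returning every tripped rule, not just a yes/no, gives the human who receives the escalation a stated reason to start from.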

Audit trails

Every action an agent takes needs to be logged with enough detail to reconstruct the full chain of reasoning. This is not just for compliance. It is for learning. When an agent makes a mistake, the audit trail tells you whether the problem was bad data, a flawed rule, an edge case the agent was not designed to handle, or an interaction between agents that produced an unexpected result. MIT found that 23 of 30 agents they evaluated offer no third-party testing information on safety.[2] Without audit trails, you cannot evaluate whether your agents are operating safely even if you wanted to.
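A minimal sketch of a structured audit entry follows, assuming JSON-lines storage and a `parent_id` field that links each entry to the upstream agent's entry. The schema and the `audit_record` and `reconstruct_chain` helpers are hypothetical; the point is that linked, structured entries let you walk a multi-agent chain back to its origin.

```python
import json
import uuid
from datetime import datetime, timezone


def audit_record(agent, action, inputs, reasoning, outcome, parent_id=None):
    """Build one structured audit entry as a JSON string.

    parent_id links this entry to the upstream agent's entry, so a
    multi-agent chain can be reconstructed end to end.
    """
    record = {
        "id": str(uuid.uuid4()),
        "parent_id": parent_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "inputs": inputs,        # the data the agent saw
        "reasoning": reasoning,  # ordered steps or rules the agent applied
        "outcome": outcome,
    }
    return json.dumps(record, sort_keys=True)


def reconstruct_chain(lines, leaf_id):
    """Walk parent links from one entry back to the root, oldest first."""
    by_id = {entry["id"]: entry for entry in map(json.loads, lines)}
    chain = []
    current = by_id.get(leaf_id)
    while current is not None:
        chain.append(current)
        current = by_id.get(current["parent_id"])
    return list(reversed(chain))
```

For example, if an intake agent's entry is passed as the parent of an AP agent's approval entry, `reconstruct_chain` on the approval returns both entries in order, which is what you need to tell a bad-data failure from a flawed-rule failure.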

Kill switches

Every agent needs the ability to be stopped immediately, and every multi-agent workflow needs the ability to be halted as a system. This sounds obvious, but in practice many deployments lack clean shutdown procedures. When agents are chained together, stopping one agent midstream can leave the others in undefined states. The governance layer needs to handle graceful degradation, not just emergency stops.
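The graceful-degradation point can be sketched as a shared, workflow-wide switch that every agent checks before each step. When it trips, in-flight items are parked with a record of the last completed stage instead of being abandoned midstream. `KillSwitch` and `run_pipeline` are hypothetical names for illustration; `threading.Event` is the standard-library primitive used for the flag.

```python
import threading


class KillSwitch:
    """One switch per workflow; every agent checks it before each step."""

    def __init__(self):
        self._halted = threading.Event()
        self.reason = None

    def halt(self, reason: str) -> None:
        self.reason = reason
        self._halted.set()

    def is_halted(self) -> bool:
        return self._halted.is_set()


def run_pipeline(items, stages, switch):
    """Run each item through chained agent stages.

    If the switch trips, the item in progress (and every later item) is
    parked with the list of stages it completed, so a human can resume
    or roll it back instead of finding it in an undefined state.
    """
    completed, parked = [], []
    for item in items:
        done = []
        for name, stage in stages:
            if switch.is_halted():
                parked.append({"item": item, "completed_stages": done})
                break
            item = stage(item)
            done.append(name)
        else:  # no break: every stage ran
            completed.append(item)
    return completed, parked
```

The halted workflow ends in a known state: every item is either fully processed or parked with its exact progress recorded.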

Conflict resolution

When multiple agents operate across overlapping domains, they will eventually produce conflicting actions. A cost optimization agent might recommend cutting a vendor contract that a supply chain agent depends on. A marketing agent might offer a promotion that a margin protection agent would reject. The governance layer resolves these conflicts by enforcing priority hierarchies and business rules that determine which agent's recommendation takes precedence. For example: margin protection overrides marketing promotions unless an inventory surplus flag is active. The orchestration layer described in our previous post routes tasks and passes context. The governance layer decides what happens when those tasks collide.
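The margin-protection rule above can be encoded as an explicit override on top of a default priority hierarchy. This is a sketch only: the agent names and the `PRIORITY` ordering are hypothetical, and the only business rule taken from the text is the margin-versus-marketing exception.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    agent: str
    action: str


# Hypothetical default hierarchy: lower number takes precedence.
PRIORITY = {
    "margin_protection": 1,
    "supply_chain": 2,
    "cost_optimization": 3,
    "marketing": 4,
}


def resolve(a: Recommendation, b: Recommendation, flags: set) -> Recommendation:
    """Decide which of two conflicting recommendations takes precedence."""
    pair = {a.agent, b.agent}
    # Business rule from the text: margin protection overrides marketing
    # promotions unless an inventory surplus flag is active.
    if pair == {"margin_protection", "marketing"} and "inventory_surplus" in flags:
        return a if a.agent == "marketing" else b
    # Otherwise fall back to the static priority hierarchy.
    return min(a, b, key=lambda r: PRIORITY[r.agent])
```

Keeping exceptions as explicit rules checked before the hierarchy makes each override visible and auditable, rather than buried in agent prompts.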

No agent should reach production until permissions, escalation criteria, logging schema, and kill-switch ownership are documented and assigned.
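That rule can be enforced mechanically as a deployment gate. The sketch below assumes each agent has a specification dictionary with four hypothetical keys; an item that is missing or empty (for example, a kill switch with no named owner) blocks the release.

```python
# Governance items that must be documented and assigned before launch.
REQUIRED_ITEMS = (
    "permissions",
    "escalation_criteria",
    "logging_schema",
    "kill_switch_owner",
)


def readiness_gaps(agent_spec: dict) -> list:
    """Return the required items that are missing or empty in the spec."""
    return [item for item in REQUIRED_ITEMS if not agent_spec.get(item)]


def ready_for_production(agent_spec: dict) -> bool:
    """True only when every required governance item is present."""
    return not readiness_gaps(agent_spec)
```

Wired into a release pipeline, a check like this turns the policy from a guideline into a hard stop.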

MIT CSAIL found critical governance gaps across 30 of the most widely deployed AI agents. Most lack basic safety documentation.

Guardian Agents: Governance Is Becoming Its Own Category

The governance problem is significant enough that it is producing its own class of solutions. Gartner predicts that guardian agents, a new category of AI designed specifically to govern other agents, will capture 10 to 15% of the agentic AI market by 2030.[6]

Guardian agents operate as a supervision layer. They monitor other agents in real time, check actions against policy rules before execution, flag anomalies, and enforce escalation. They are, in effect, agents whose entire job is to make sure other agents stay within bounds.
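The pre-execution check can be illustrated with a small supervisor that sits between a worker agent and execution. This is a hypothetical sketch, not how any particular guardian-agent product works: `GuardianAgent`, the three verdict levels, and the sample rules are assumptions. Each rule returns a verdict, the strictest one wins, and only an allowed action reaches the executor.

```python
from typing import Callable

ALLOW, ESCALATE, BLOCK = 0, 1, 2  # ordered from least to most restrictive


class GuardianAgent:
    """Checks every proposed action against policy rules before execution."""

    def __init__(self, rules: list):
        self.rules = rules  # each rule: Callable[[dict], int] returning a verdict
        self.flagged = []   # (action, verdict) pairs kept for human review

    def review(self, action: dict) -> int:
        # The strictest verdict from any rule wins.
        verdict = max((rule(action) for rule in self.rules), default=ALLOW)
        if verdict != ALLOW:
            self.flagged.append((action, verdict))
        return verdict

    def execute(self, action: dict, executor: Callable[[dict], str]) -> str:
        verdict = self.review(action)
        if verdict == BLOCK:
            return "blocked"
        if verdict == ESCALATE:
            return "sent to human reviewer"
        return executor(action)
```

Taking the maximum verdict means adding a rule can only make the guardian stricter, never looser, which is a useful property when many teams contribute policies.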

This is worth paying attention to because it signals a market shift. Governance is moving from a feature inside platforms to a standalone function with its own vendors, its own market, and its own evaluation criteria. Organizations that treat governance as someone else's problem (the platform vendor's, the IT team's, an afterthought) will find themselves exposed as the agent count grows.

Where Humans Sit in the System

The hardest governance question is not technical. It is organizational. Who is responsible when an agent makes a decision that harms the business?

If an AP agent approves a fraudulent invoice because it fell within normal parameters, who owns that loss? The finance team that deployed the agent? The IT team that configured its permissions? The vendor that built it? The orchestration layer that routed the invoice to the agent?

These questions do not have universal answers, but they need to have specific answers in your organization before you deploy. The governance layer should make accountability explicit at every level. Every agent action should map to a human or team that is accountable for the outcomes in that domain.
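One way to make accountability explicit is a registry that maps each agent action to a business domain and each domain to an accountable team, and that fails loudly when a mapping is missing. The registry contents below are hypothetical; as noted above, who owns what is a decision each organization has to make for itself.

```python
# Hypothetical registry: which business domain each agent action belongs to,
# and which human team is accountable for outcomes in that domain.
ACTION_DOMAIN = {
    ("ap_agent", "approve_invoice"): "accounts_payable",
    ("ap_agent", "flag_anomaly"): "accounts_payable",
    ("router", "route_invoice"): "orchestration",
}
DOMAIN_OWNER = {
    "accounts_payable": "Finance Operations",
    "orchestration": "IT Platform Team",
}


def accountable_owner(agent: str, action: str) -> str:
    """Return the team accountable for an action, or raise if nobody is."""
    domain = ACTION_DOMAIN.get((agent, action))
    owner = DOMAIN_OWNER.get(domain) if domain else None
    if owner is None:
        raise LookupError(f"no accountable owner for {agent}.{action}")
    return owner


def unowned(actions):
    """List (agent, action) pairs that would have no accountable owner."""
    gaps = []
    for agent, action in actions:
        try:
            accountable_owner(agent, action)
        except LookupError:
            gaps.append((agent, action))
    return gaps
```

Running `unowned` over every action an agent can take, before deployment, surfaces exactly the "who owns this loss?" questions raised above while they are still cheap to answer.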

Deloitte's research found that 86% of chief human resources officers see integrating digital labor as central to their role.[4] That integration requires defining new relationships between human workers and AI agents. Humans are not being replaced by agents. They are being repositioned as supervisors, auditors, and exception handlers. That is a fundamentally different job description, and most organizations have not updated their structures to reflect it.

The Bottom Line

Guardrails are not a feature you add after deployment. They are the governance infrastructure you build before your second agent goes live.

The organizations that will succeed with AI agents are not the ones that deploy the fastest. They are the ones that know exactly where humans sit in every workflow, what every agent is allowed to do, what happens when things go wrong, and who is accountable for the outcomes.

[1] Gartner, "Gartner Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027," June 2025.

[2] MIT CSAIL, "The 2025 AI Agent Index: Documenting Technical and Safety Features of Deployed Agentic AI Systems," February 2026. Half of agents lack published safety frameworks; 9/30 have no guardrails documentation; only 4 provide agent-specific safety evaluations; 23/30 have no third-party testing data.

[3] Zapier, "State of Agentic AI Adoption Survey," December 2025. HITL used by 38% of enterprises; 20% operate autonomously with minimal oversight.

[4] Deloitte, "Unlocking Exponential Value with AI Agent Orchestration," November 2025. 86% of CHROs see integrating digital labor as central to their role. Predicts advanced organizations will shift toward human-on-the-loop in 2026.

[5] Cisco, "State of AI Security 2025 Report," 2025. Only 34% of enterprises have AI-specific security controls; fewer than 40% conduct regular security testing on AI agent workflows.

[6] Gartner, "Gartner Predicts that Guardian Agents Will Capture 10-15% of the Agentic AI Market by 2030," June 2025.
