Your AI Pilot Worked. That's the Worst Thing That Could Have Happened.

President, Zaruko

The pilot worked.

Three months of testing. A clear improvement in processing time. Happy stakeholders. A green light to scale.

Six months later, the project is dead. The board wants to know what happened.

Here's what happened: the pilot was designed to succeed. Nobody designed it to fail. And the distance between a successful pilot and a working production system is where most AI investments go to die.

There are three kinds of pilot success, and only one of them matters. Demo success means it looked good. Task success means it worked on a curated slice of data. Business success means it moved a constraint and survived operations. Most pilots prove the model can do the task. They don't prove the system can run the business.

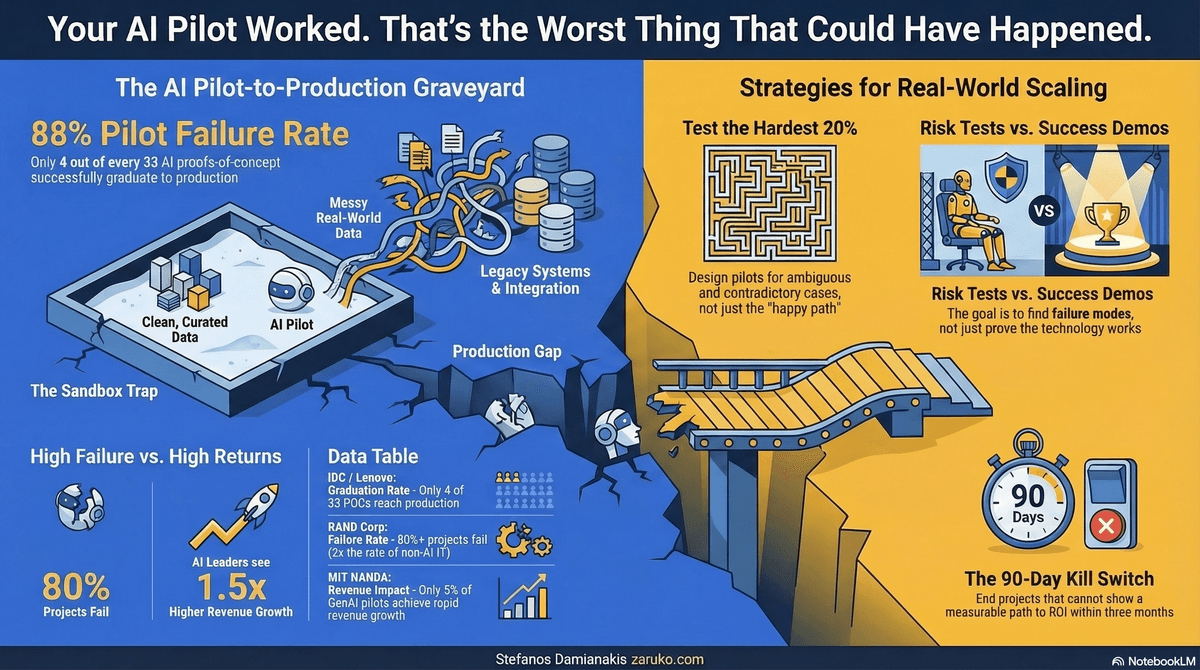

The Graveyard Is Full of Successful Pilots

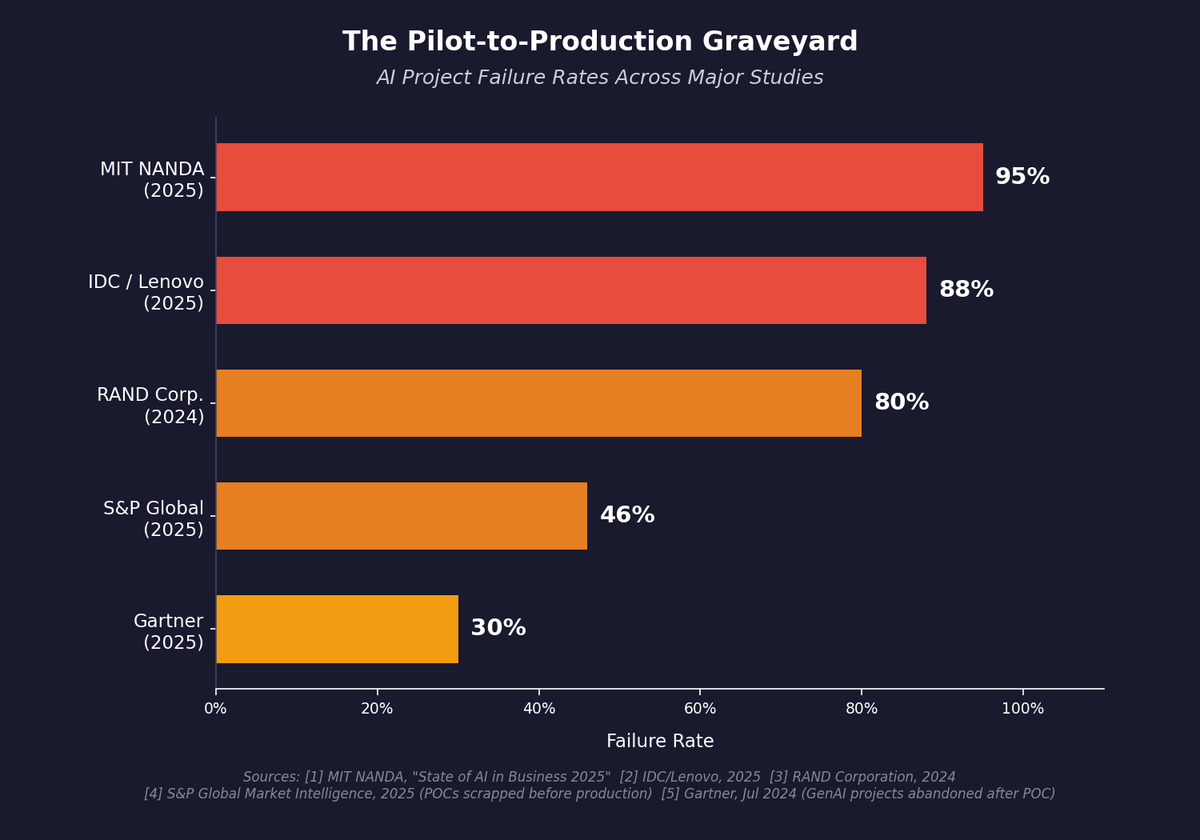

The numbers on this are brutal.

An MIT NANDA report combining a review of 300 publicly disclosed AI initiatives, interviews with 52 organizations, and survey responses from 153 senior leaders found that only 5% of generative AI pilot programs achieve rapid revenue acceleration.[1] The rest stall, delivering little to no measurable impact on P&L.

IDC found that for every 33 AI proofs-of-concept a company launches, only 4 graduate to production. That's an 88% failure rate.[2]

S&P Global surveyed over 1,000 enterprises and found that 42% of companies abandoned most of their AI initiatives in 2025, up from 17% in 2024. The average organization scrapped 46% of its AI proofs-of-concept before they reached production.[3]

RAND Corporation puts the overall AI project failure rate at over 80%, which is twice the failure rate of non-AI technology projects.[5]

These are not small-sample anecdotes. This is the industry pattern.

The Pilot-to-Production Graveyard: AI project failure rates range from 30% to 95% across five major studies.

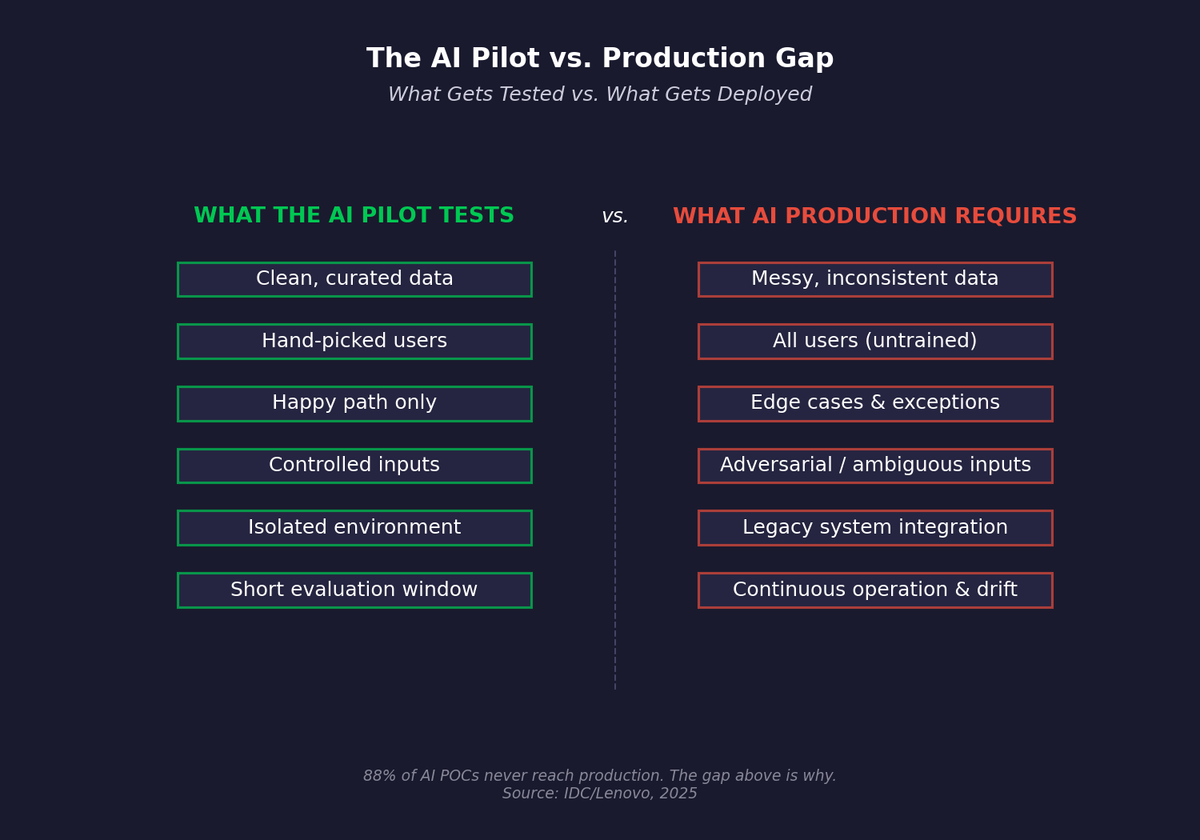

Why Pilots Succeed and Production Fails

A pilot runs in a sandbox. Clean data. Controlled inputs. Selected users who are motivated to make it work. The hardest edge cases are quietly excluded or handled manually.

Production is different. Messy data from systems that haven't been cleaned in years. Edge cases that nobody anticipated. Users who weren't part of the pilot and don't understand the tool's limitations. Integration with legacy systems that predate the current CTO.

The pilot measures what's easy to measure: speed, volume, user satisfaction from a hand-picked group. It does not measure what matters: accuracy on the hardest 20% of cases, downstream impact on adjacent workflows, total cost of ownership including ongoing maintenance and retraining.

The AI Pilot vs. Production Gap: what gets tested in the pilot bears little resemblance to what production actually requires.
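One way to make "accuracy on the hardest 20% of cases" concrete is to report it next to the overall number. The sketch below is only an illustration: the `Case` record, the `difficulty` score, and the sample data are assumptions, not something the studies above prescribe. In practice the difficulty ranking might come from reviewer labels or from how often a case was escalated.

```python
from dataclasses import dataclass


@dataclass
class Case:
    difficulty: float  # hypothetical score, higher means harder
    correct: bool      # whether the AI output was accepted as correct


def accuracy(cases):
    """Fraction of cases marked correct; 0.0 for an empty list."""
    return sum(c.correct for c in cases) / len(cases) if cases else 0.0


def hard_slice_report(cases, hard_fraction=0.2):
    """Compare overall accuracy with accuracy on the hardest slice."""
    ranked = sorted(cases, key=lambda c: c.difficulty, reverse=True)
    # Take at least one case when any exist, never more than are available.
    n_hard = min(len(ranked), max(1, round(len(ranked) * hard_fraction)))
    return {
        "overall": accuracy(ranked),
        "hardest": accuracy(ranked[:n_hard]),
        "n_hard": n_hard,
    }


# Illustrative data only: 8 easy cases, all correct; 2 hard cases, 1 correct.
sample = [Case(0.1 * i, True) for i in range(8)]
sample += [Case(0.9, True), Case(0.95, False)]

report = hard_slice_report(sample)
print(report)
```

With this made-up sample the overall figure looks healthy while the hard slice is a coin flip, which is the kind of gap a pilot report built on averages tends to hide.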

McKinsey's 2025 AI survey found that organizations reporting significant financial returns from AI are nearly three times as likely as other organizations to have fundamentally redesigned workflows before selecting their modeling approach.[6] Most companies do this backwards. They pick the technology, run a pilot, and then try to figure out how to fit it into their existing processes.

The Four Failure Patterns

After looking at the research and talking to operators who have been through this cycle, four patterns keep repeating.

Pilot paralysis. The technology works in isolation. But integration requirements (authentication, compliance workflows, user training) go unaddressed until someone asks for a go-live date. At that point, the gap between "working demo" and "production system" turns out to be 6 to 12 months of engineering work that nobody budgeted for.

Model obsession. Engineering teams spend quarters optimizing model performance metrics while integration and deployment tasks sit in the backlog. When the project surfaces for business review, the compliance requirements look insurmountable and the business case is still theoretical.

The measurement trap. The pilot proves the AI can do the task faster. Nobody measured whether faster matters. If the bottleneck is downstream approval, or data quality, or human judgment on exceptions, then automating the fast part doesn't change the outcome. You sped up the wrong thing. If you didn't move the constraint, you didn't create ROI.

No ownership after launch. Successful teams assign product managers to AI systems with explicit service-level commitments. Failed teams treat the pilot as a research project that ends when the demo is done. Nobody owns version 1.1. Nobody monitors for performance drift. Nobody retrains the model when the data changes.
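The measurement trap is easy to see with a little arithmetic. The sketch below assumes a simple serial workflow where daily output is capped by the slowest stage; the stage names and capacities are hypothetical and only illustrate the point.

```python
def throughput_per_day(stage_capacity):
    """A serial workflow finishes no more items per day than its slowest stage."""
    return min(stage_capacity.values())


def constraint(stage_capacity):
    """Name of the stage that currently limits the workflow."""
    return min(stage_capacity, key=stage_capacity.get)


# Hypothetical daily capacities (items per day) for a three-stage workflow.
before = {"intake_processing": 200, "exception_review": 120, "approval": 80}

# The pilot makes the first stage 5x faster; nothing downstream changes.
after = {**before, "intake_processing": 1000}

print(constraint(before), throughput_per_day(before))
print(constraint(after), throughput_per_day(after))
```

In both cases the limiting stage is `approval` and the daily output is unchanged, so under these assumptions the 5x speedup shows up in the pilot metrics and nowhere in the business result.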

What Actually Works

The companies that successfully scale AI from pilot to production share a few characteristics.

They frame pilots as risk tests, not success demos. The goal is to find failure modes, not to prove the technology works. If your pilot only tests the happy path, it hasn't told you anything useful about production.

They design the pilot around the hardest 20% of cases, not the easiest 80%. Anyone can automate the simple stuff. The question is what happens when the input is ambiguous, incomplete, or contradictory.

They include production constraints from day one. Real data quality. Real integration points. Real user skill levels. If the pilot uses a curated dataset that doesn't exist in production, the pilot is fiction.

They measure in dollars, not demos. "It's faster" is not ROI. "It saved X hours at Y fully-loaded cost per hour, net of the cost to build, deploy, and maintain the system" is ROI.
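The ROI sentence above translates directly into a small calculation. This is a minimal sketch, assuming a single evaluation period and three cost buckets (build, deploy, maintain); the function name and every number in the example are illustrative, not taken from any of the cited studies.

```python
def net_roi(hours_saved, loaded_hourly_cost, build_cost, deploy_cost, maintain_cost):
    """Return (net_savings, roi_ratio) for one evaluation period.

    net_savings = hours_saved * loaded_hourly_cost - total system cost
    roi_ratio   = net_savings / total system cost
    """
    total_cost = build_cost + deploy_cost + maintain_cost
    if total_cost <= 0:
        raise ValueError("total system cost must be positive")
    gross_savings = hours_saved * loaded_hourly_cost
    net_savings = gross_savings - total_cost
    return net_savings, net_savings / total_cost


# Illustrative numbers only.
net, ratio = net_roi(
    hours_saved=4000,
    loaded_hourly_cost=75,
    build_cost=150_000,
    deploy_cost=60_000,
    maintain_cost=40_000,
)
print(f"net savings: ${net:,.0f}, ROI: {ratio:.0%}")
```

With these made-up inputs, $300,000 of gross savings shrinks to $50,000 net once the cost of the system is counted. Leaving out the maintenance line is the usual way a pilot's business case ends up looking better than it is.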

BCG's research shows that AI leaders achieve 1.5x higher revenue growth and 1.6x greater shareholder returns than laggards.[7] The difference is not better models. It is better implementation discipline.

The 90-Day Rule

Set a kill switch. If you can't show a measurable path to ROI within 90 days, with defined inputs, owners, an integration plan, and unit economics, kill it. Don't let it become a zombie project that consumes resources, generates optimistic status reports, and never ships.
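The kill switch can be written down as an explicit check so it is harder to argue around at review time. The sketch below is one possible encoding, assuming the four requirements named above are tracked as simple yes/no flags; the `PilotStatus` fields and the `kill_switch` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class PilotStatus:
    days_elapsed: int
    has_defined_inputs: bool
    has_named_owner: bool
    has_integration_plan: bool
    has_unit_economics: bool


def kill_switch(status, deadline_days=90):
    """Return (decision, missing) where decision is 'continue' or 'kill'.

    The pilot is killed only if the deadline has passed and at least one
    element of the path to ROI is still missing.
    """
    checks = {
        "defined inputs": status.has_defined_inputs,
        "named owner": status.has_named_owner,
        "integration plan": status.has_integration_plan,
        "unit economics": status.has_unit_economics,
    }
    missing = [name for name, ok in checks.items() if not ok]
    if status.days_elapsed >= deadline_days and missing:
        return "kill", missing
    return "continue", missing


# Illustrative status: day 95, everything in place except an integration plan.
decision, missing = kill_switch(PilotStatus(95, True, True, False, True))
print(decision, missing)
```

Before the deadline the same function returns "continue" along with the list of gaps, which doubles as the to-do list for the remaining days.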

A pilot that only proves the technology works in perfect conditions is not a proof of concept. It is a proof of marketing.

The real proof is whether it survives contact with your actual business.

Sources

1. MIT NANDA Initiative, "The GenAI Divide: State of AI in Business 2025" (5% of GenAI pilots achieve rapid revenue acceleration).

2. IDC/Lenovo research, 2025 (4 of 33 POCs reach production; 88% failure rate). Via CIO.

3. S&P Global Market Intelligence, 2025 (42% of companies abandoned most AI initiatives; 46% of POCs scrapped). Via CIO Dive.

4. Gartner, July 2024 (30% of GenAI projects abandoned after POC by end of 2025).

5. RAND Corporation, 2024 (80%+ AI project failure rate, 2x non-AI IT projects).

6. McKinsey, "The State of AI in 2025" (high performers nearly 3x as likely to fundamentally redesign workflows).

7. BCG, "The Widening AI Value Gap," September 2025 (AI leaders: 1.5x revenue growth, 1.6x shareholder returns).

