What the 5% Who Succeed with AI Actually Do Differently

President, Zaruko

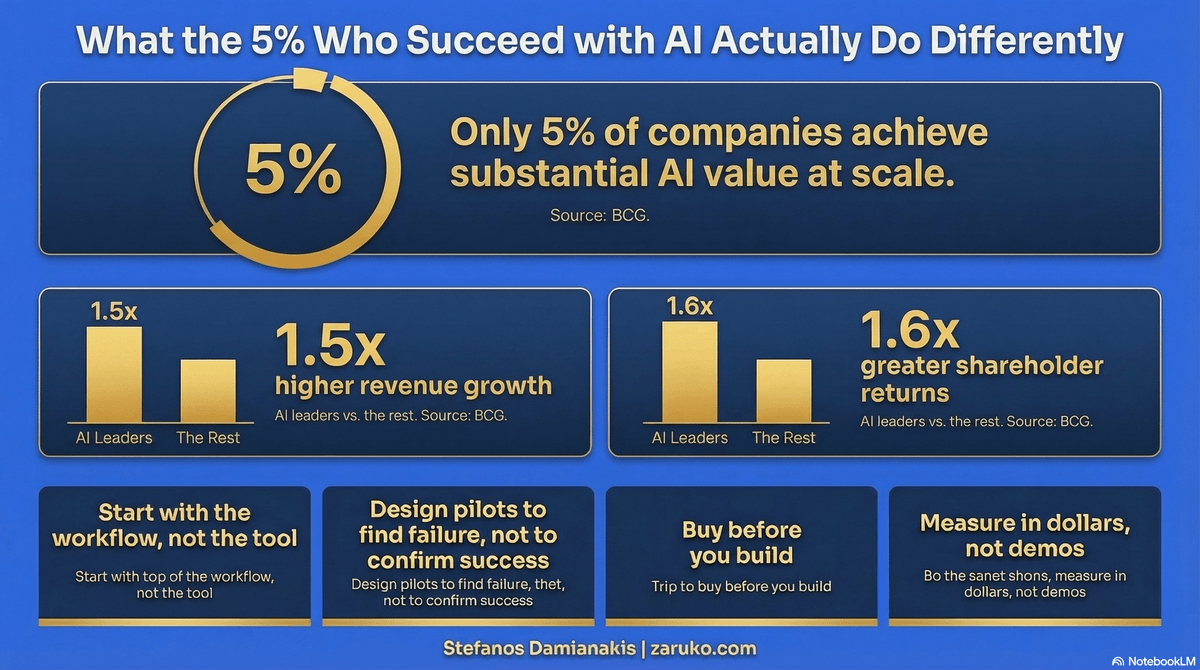

MIT studied 300 enterprise AI deployments and found that 5% achieved rapid revenue acceleration. The other 95% stalled.[1]

BCG surveyed hundreds of companies and found that only 5% create substantial value from AI at scale. Another 60% generate "hardly any material value."[2]

Different studies. Same number. Same conclusion.

Most companies fail with AI. A small group succeeds. The difference is not better technology, bigger budgets, or smarter data scientists. It is a set of decisions that the winners make before they write a single line of code.

They Start with the Workflow, Not the Technology

McKinsey's 2025 AI survey found that organizations reporting significant financial returns from AI are nearly three times as likely as others to have fundamentally redesigned their workflows.[3]

Most companies do this backwards. They see a demo. They pick a tool. They run a pilot. Then they try to figure out how to fit it into their existing processes.

The 5% start with a different question: Which workflow costs us the most, has the most manual steps, and has the clearest success criteria? That becomes the AI project. Not the most interesting problem. Not the one that impressed the CEO in a vendor demo. The one where the math works.

This is not a technology decision. It is an operations decision. And the companies that treat it as such are the ones that get results.

They Design Pilots to Fail, Not to Succeed

The standard pilot is designed to prove the technology works. Clean data. Selected users. Controlled conditions. The happy path.

The 5% design pilots to find failure modes. They test with messy data from production systems. They include the hardest 20% of cases, not just the easiest 80%. They put the tool in front of users who weren't part of the selection process and don't understand its limitations.

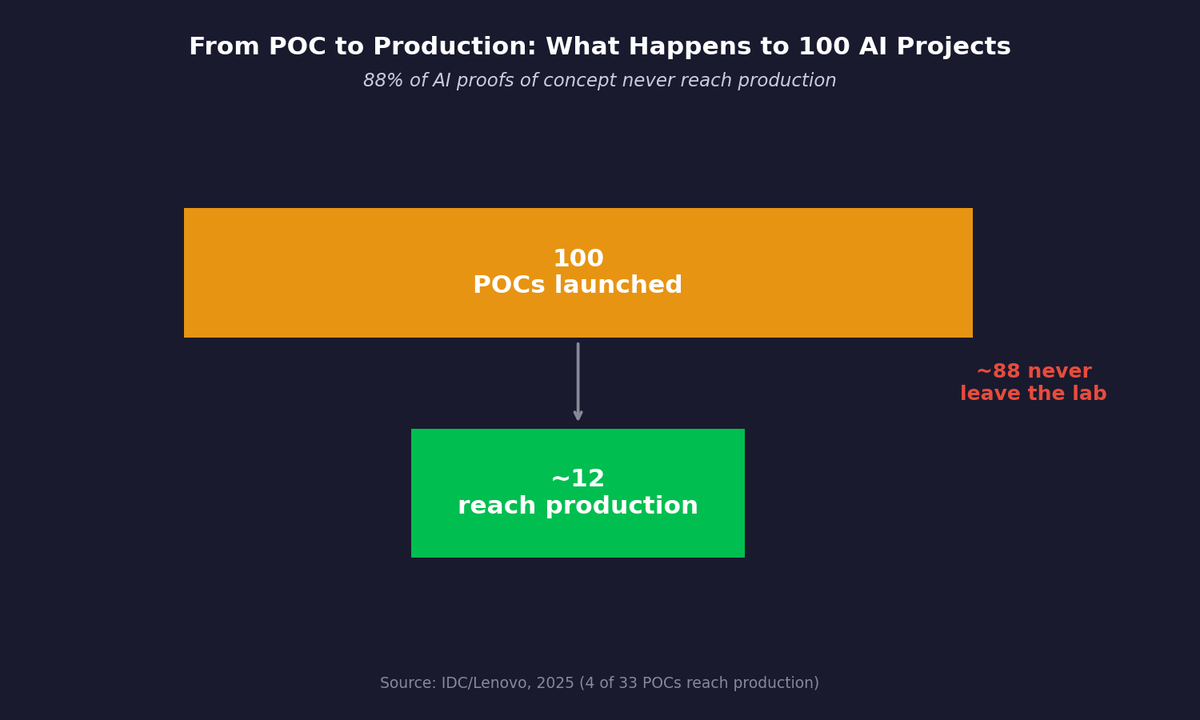

IDC found that for every 33 AI proofs of concept a company launches, only 4 reach production.[4] The gap is not that the technology doesn't work in the lab. It's that nobody tested what happens when it leaves the lab.

A pilot that only succeeds in controlled conditions has not told you anything useful about production. It has told you the vendor's demo is good.

They Buy Before They Build

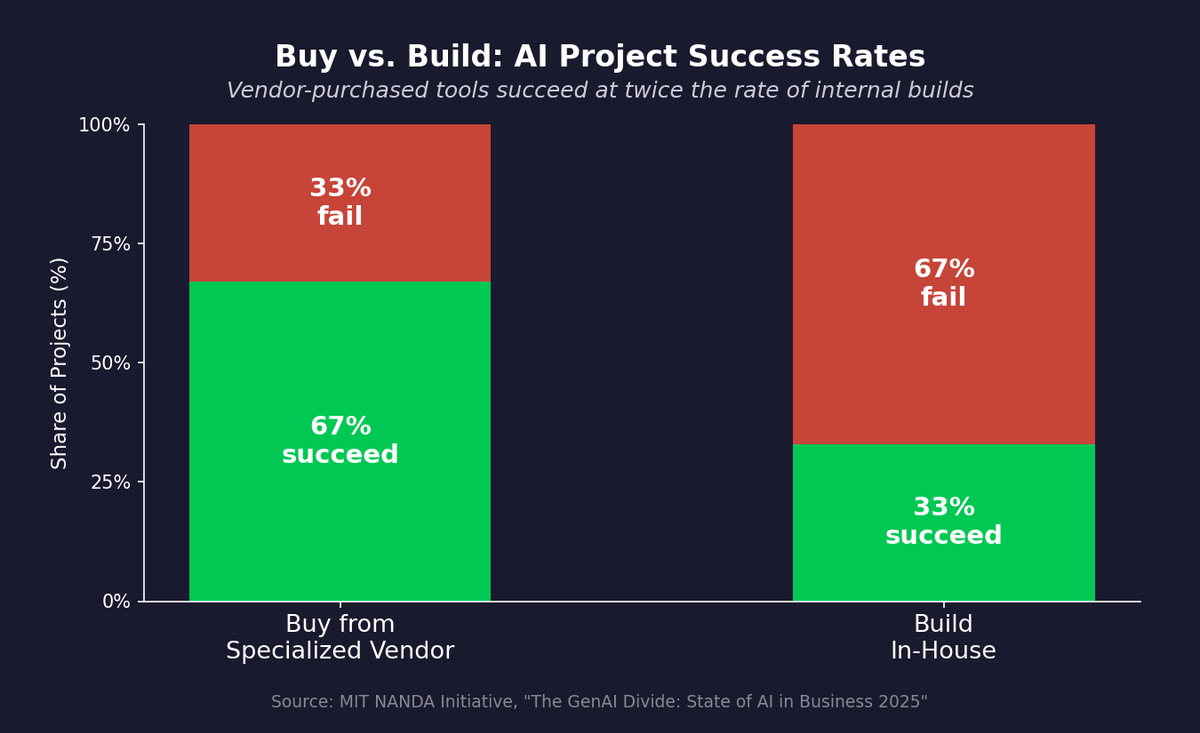

MIT's study found that purchasing AI tools from specialized vendors succeeds about 67% of the time. Internal builds succeed only about 33% of the time, roughly half as often.[1]

This finding is consistent with what I've seen in practice. Building AI in-house requires a concentration of talent, infrastructure, and institutional patience that most mid-market companies do not have. The companies that succeed tend to buy proven tools, integrate them tightly into their workflows, and save their internal engineering resources for the integration and customization work that vendors can't do for them.

The instinct to build is strong, especially among technical founders and CTOs. But the data says buying is the higher-probability path for most organizations.

Buy vs. Build: vendor-purchased tools succeed roughly 67% of the time versus 33% for internal builds, according to the MIT NANDA Initiative 2025 study.

They Measure in Dollars, Not Demos

BCG's research shows that AI leaders achieve 1.5x higher revenue growth and 1.6x greater shareholder returns.[2] But these leaders did not get there by measuring "adoption rates" or "user satisfaction scores."

They measure in financial terms. Hours saved at fully loaded cost. Revenue generated per AI-assisted workflow. Cost avoided per automated process. All net of the total cost to build, deploy, and maintain the system.

"It's faster" is not ROI. "It saved 2,000 hours at $85 per hour, net of $60,000 in annual platform and maintenance costs" is ROI. If you can't express the result in dollars, you don't know if it's working.

The 95% that fail tend to measure activity: how many employees are using the tool, how many queries per day, how satisfied users report being. These metrics feel good in a quarterly review. They tell you nothing about whether the investment is paying for itself.
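The dollar example in this section can be checked with a few lines of arithmetic. The following is a minimal Python sketch using the section's own figures (2,000 hours, $85 per hour, $60,000 in annual costs). The function name `net_roi` is hypothetical. Expressing ROI as net benefit divided by annual cost is one common convention and is an assumption here; your finance team may define it differently, for example by amortizing one-time build costs.

```python
# Hypothetical helper: net annual ROI of an AI-assisted workflow.
# Assumes ROI = net benefit / annual cost; other definitions are possible.
def net_roi(hours_saved: float, loaded_rate: float, annual_cost: float) -> tuple[float, float]:
    """Return (net_benefit, roi_ratio) for one year of operation."""
    gross = hours_saved * loaded_rate  # value of hours saved at fully loaded cost
    net = gross - annual_cost          # net of platform and maintenance costs
    return net, net / annual_cost


# The example from the text: 2,000 hours at $85/hour, net of $60,000 in costs.
net, roi = net_roi(2_000, 85, 60_000)
print(f"Net benefit: ${net:,.0f}  ROI: {roi:.0%}")
```

With these inputs the system returns $170,000 in gross value, $110,000 net, or roughly 1.8 dollars back for every dollar spent. "It's faster" cannot produce a number like that.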

They Assign Owners, Not Committees

Successful AI deployments have a product manager. Failed ones have a steering committee.

The product manager owns the system the way a product manager owns a product: with explicit service-level commitments, a roadmap, a maintenance budget, and accountability for outcomes. They monitor for performance drift. They manage retraining cycles. They own version 1.1, and version 1.2, and every version after that.

The steering committee meets monthly, reviews a dashboard, asks for a status update, and moves on. Nobody owns the system after launch. Nobody monitors for degradation. Nobody retrains the model when the data changes. The pilot succeeds, the committee declares victory, and the system slowly rots.

They Limit Their Bets

Large enterprises run 30 or 40 pilots and expect most to fail. They find winners through volume.

Mid-market companies can't afford that approach. Omdia's 2025 survey found that 43% of firms under $100 million in revenue run fewer than 5 AI proofs of concept.[5] When you only get a few shots, each one has to count.
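To see why the number of shots matters, here is a rough Python sketch built only on IDC's 4-in-33 figure. It makes a strong simplifying assumption, flagged in the code: every proof of concept independently reaches production at that average rate. Real pilots are neither independent nor average. The function names are hypothetical.

```python
# Illustrative only: assumes each POC independently reaches production
# at IDC's reported average rate of 4 in 33.
BASE_RATE = 4 / 33


def expected_production(pocs: int, rate: float = BASE_RATE) -> float:
    """Expected number of POCs that reach production."""
    return pocs * rate


def chance_of_at_least_one(pocs: int, rate: float = BASE_RATE) -> float:
    """Probability that at least one POC reaches production."""
    return 1 - (1 - rate) ** pocs


for n in (5, 40):  # a small mid-market portfolio vs. a large-enterprise portfolio
    print(f"{n:>2} POCs: expect {expected_production(n):.1f} in production, "
          f"{chance_of_at_least_one(n):.0%} chance of at least one")
```

Under that assumption, five average POCs give slightly less than even odds of producing a single production system, while forty almost guarantee a few. Volume works for the large enterprise. The mid-market company has to raise the odds on each bet instead.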

The 5% who succeed at the mid-market level pick one or two bets. They scope them tightly. They attach each one to a specific financial outcome. And they set a kill switch: if the pilot isn't showing a measurable path to ROI within 90 days, they cut it and redirect the resources.
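The 90-day kill switch is simple enough to write down as an explicit rule. This is a minimal sketch, not a prescribed method. The function `should_kill` and its `projected_net_benefit` input are assumptions for illustration: the input stands for a hypothetical dollar estimate of net annual value, however your team measures it.

```python
from datetime import date, timedelta

# Review window taken from the text; the decision rule itself is a hypothetical sketch.
KILL_SWITCH_WINDOW = timedelta(days=90)


def should_kill(start: date, today: date, projected_net_benefit: float) -> bool:
    """Cut the pilot once the window has elapsed if it shows no
    measurable path to positive net dollars."""
    window_elapsed = today - start >= KILL_SWITCH_WINDOW
    return window_elapsed and projected_net_benefit <= 0


start = date(2025, 1, 6)
print(should_kill(start, date(2025, 3, 1), -5_000))   # still inside the 90-day window
print(should_kill(start, date(2025, 4, 10), -5_000))  # window elapsed, no path to ROI
print(should_kill(start, date(2025, 4, 10), 40_000))  # window elapsed, measurable path to ROI
```

The point of writing the rule down in advance is that the decision is made before anyone is attached to the pilot. On day 90 the only question is whether the number is positive.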

This discipline is harder than it sounds. The temptation to chase the next demo, the next vendor pitch, the next shiny use case is constant. The companies that resist it are the ones that ship.

From POC to Production: of 100 AI initiatives started, roughly 12 reach production. About 88 never leave the lab.

The Pattern

The 5% are not doing anything exotic. They are applying basic operational discipline to a new technology.

Start with the business problem, not the technology. Test for failure, not for success. Buy before you build. Measure in dollars. Assign an owner. Limit your bets.

Every one of these principles predates AI. They are the same principles that separate companies that succeed with any technology investment from the ones that waste money on pilots that never ship.

AI does not change the rules of good management. It raises the stakes.

Sources

1. MIT NANDA Initiative, "The GenAI Divide: State of AI in Business 2025" (5% achieve rapid revenue acceleration; vendor purchases succeed about 67% of the time vs. 33% for internal builds).

2. BCG, "The Widening AI Value Gap," September 2025 (5% create substantial value at scale; 60% report hardly any material value; leaders see 1.5x revenue growth and 1.6x shareholder returns).

3. McKinsey, "The State of AI in 2025" (high performers nearly 3x as likely to have fundamentally redesigned workflows).

4. IDC/Lenovo, 2025 (4 of 33 POCs reach production). Via CIO.

5. Omdia, AI Market Maturity Survey, 2025 (43% of sub-$100M firms run fewer than 5 POCs).

Want to be in the 5%?

I help mid-market companies make the operational decisions that separate AI winners from the 95% that stall. Let's talk about where the math works for your business.
